Enterprise adoption of Generative AI is accelerating rapidly. From developers auto-completing code to marketing teams drafting campaign copy, the productivity gains are clear. However, this rapid adoption introduces a critical, often unmonitored, channel for risk. How can an organization be certain that proprietary source code, sensitive customer PII, or unannounced financial data isn’t being shared with public Large Language Models (LLMs)? Without dedicated visibility, it can’t. This is the core challenge that AI observability is built to solve.

True AI observability provides a comprehensive view into the black box of enterprise GenAI usage. It’s about more than just knowing that employees are using these tools; it’s about understanding how they are using them. This requires a solution capable of real-time monitoring of the prompts users submit, the responses they receive, and the data that flows between them. By establishing this foundational visibility, security teams can finally implement the governance needed to foster innovation securely. This article explores how modern observability tooling, delivered at the browser or API layer, enables organizations to track GenAI usage, implement AI-powered risk detection, and conduct thorough behavior auditing.

The New Frontier of Risk: The GenAI Blind Spot

Traditional security architectures, including network firewalls, CASBs, and endpoint protection, were not designed for the nuances of GenAI interactions. These tools often lack the context to differentiate between a benign research query and the exfiltration of a sensitive corporate document. The communication happens over encrypted web traffic to legitimate domains, rendering many existing controls ineffective. This creates a significant blind spot where high-risk activities can occur completely undetected.

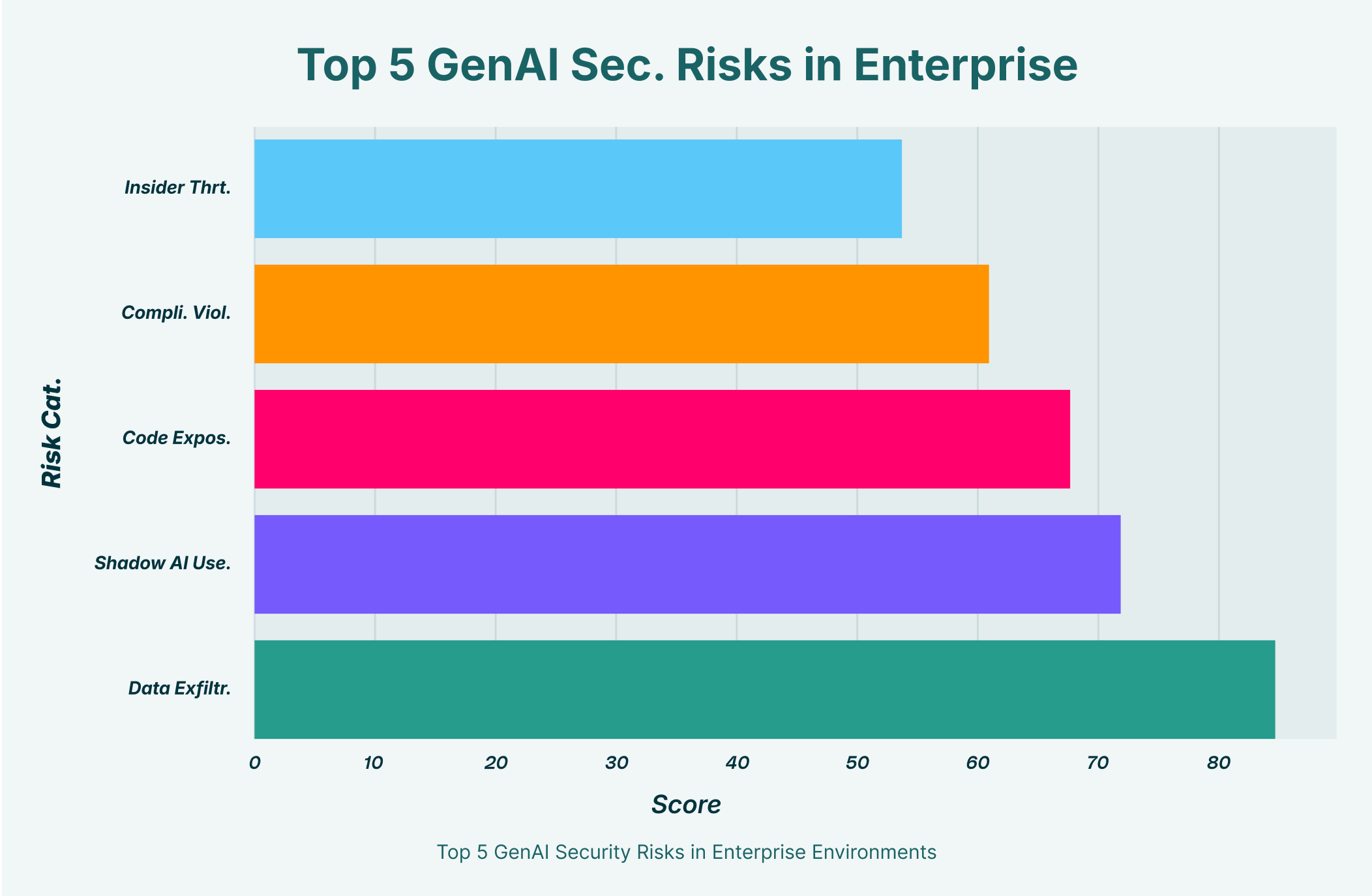

Imagine a product manager using a third-party GenAI tool to summarize user feedback. In the process, they inadvertently paste raw data containing thousands of customer email addresses and personal comments. Or consider a software engineer who, under a tight deadline, feeds a large block of proprietary code into a public model to find a bug. In both scenarios, the intent wasn’t malicious, but the result is a significant data leak. The challenge is that these actions look like standard web browsing to most security stacks. This is precisely where AI observability risk detection becomes essential, offering the specialized lens needed to identify these high-risk behaviors as they happen. Without it, organizations are flying blind, unable to quantify their exposure or enforce security policies effectively.

Defining AI Observability: From Usage to Insight

At its core, AI observability is the practice of gathering and analyzing detailed data from every interaction with AI systems to understand their behavior, performance, and security posture. It extends beyond simple usage tracking to provide granular details on three key pillars:

- Prompts: What specific questions, instructions, and data are users submitting to GenAI models? Analyzing prompts is the first step in identifying potential data leakage.

- Responses: What information are the AI models generating in return? Monitoring responses can help detect the generation of insecure code, harmful content, or misleading information.

- Data Flow: How is information moving between the user, their browser, and the AI service? Understanding this flow is critical to preventing sensitive files or data snippets from being uploaded.

Achieving this level of detail requires a dedicated approach to AI monitoring. Security and IT teams need tools that can intercept and analyze the content of these interactions, not just the metadata. This allows them to move from a reactive stance, where they discover data breaches after the fact, to a proactive one. By monitoring the substance of what is being shared with AI, they can apply risk-based controls that block sensitive data exfiltration before it ever leaves the organization’s control.
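To make the difference between metadata and content concrete, here is a minimal sketch of a record covering the three pillars. The `GenAIInteraction` class, its field names, and the two view methods are assumptions made for illustration; they do not describe any particular product's schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GenAIInteraction:
    """One observed exchange with a GenAI tool (hypothetical schema)."""
    user: str
    app: str                                                  # GenAI site or API in use
    prompt: str                                               # pillar 1: what the user submitted
    response: str                                             # pillar 2: what the model returned
    uploaded_files: list[str] = field(default_factory=list)   # pillar 3: data flow
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def metadata_only(self) -> dict:
        """What a metadata-level tool would see: who, where, when, how much."""
        return {
            "user": self.user,
            "app": self.app,
            "timestamp": self.timestamp.isoformat(),
            "prompt_chars": len(self.prompt),
            "file_count": len(self.uploaded_files),
        }

    def content_view(self) -> dict:
        """What content-level observability adds on top of the metadata."""
        return {
            **self.metadata_only(),
            "prompt": self.prompt,
            "response": self.response,
            "uploaded_files": list(self.uploaded_files),
        }
```

A metadata-only view can say that a 4,000-character prompt went to a given tool, but only the content view can say whether that prompt contained a customer list.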

Core Components of an Effective AI Observability Framework

A robust AI observability strategy is built on several key technological capabilities working in concert. Each component addresses a different facet of the GenAI security challenge, from immediate threat prevention to long-term strategic governance.

Real-time Monitoring

The foundation of any effective security practice is visibility, and in the dynamic world of GenAI, that visibility must be real-time. When an employee attempts to paste a sensitive customer list into a public LLM, the security team needs to know about it instantly, not in a report they review at the end of the week. Real-time monitoring provides immediate insight into user activities across all GenAI platforms, sanctioned or unsanctioned. This allows security policies to be enforced at the moment of risk, such as blocking an upload or warning a user, thereby preventing a potential data leak before it materializes.
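Enforcement at the moment of risk can be sketched as a small decision function. The category names, the `POLICY` mapping, and the `decide` helper below are hypothetical; a real deployment would load its policy from configuration and receive the detected categories from its own detection layer.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "Allowed"
    WARN = "Warned"
    BLOCK = "Blocked"


# Hypothetical policy: data categories mapped to the action taken when found.
POLICY = {
    "customer_pii": Action.BLOCK,
    "source_code": Action.BLOCK,
    "internal_doc": Action.WARN,
}

# Ordering used to pick the strictest action when several categories match.
_SEVERITY = {Action.ALLOW: 0, Action.WARN: 1, Action.BLOCK: 2}


def decide(detected_categories: set[str]) -> Action:
    """Return the strictest action triggered by the detected categories."""
    actions = [POLICY.get(c, Action.ALLOW) for c in detected_categories]
    return max(actions, key=_SEVERITY.__getitem__, default=Action.ALLOW)
```

Because the decision is taken before the prompt or file is submitted, a paste containing both an internal document and customer PII resolves to `Action.BLOCK` rather than a warning.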

AI-Powered Risk Detection

Simply monitoring data streams isn’t enough; organizations need an intelligent way to interpret that data. This is where AI-powered risk detection comes in. This technology utilizes machine learning models trained to recognize specific risk patterns within GenAI interactions. It can identify and classify sensitive data types, such as source code, API keys, PII, and financial information, within user prompts. Furthermore, it can analyze user behavior to flag anomalous activities, such as an unusually large data upload or an employee accessing a new, unsanctioned AI tool for the first time. This intelligent layer of analysis transforms raw monitoring data into actionable security alerts, focusing analyst attention on the events that matter most.
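The two kinds of detection described here, content classification and behavioral anomalies, can be illustrated with a deliberately simple sketch. It uses a handful of regular expressions as a stand-in for trained models, so it shows the shape of the output rather than the detection technique itself. The pattern set, the upload threshold, and the function names are all assumptions.

```python
import re

# Illustrative patterns only; a production detector would rely on trained
# classifiers, as described above, rather than a few regexes.
PATTERNS = {
    "pii_email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "api_key": re.compile(r"\b(?:sk|pk|api)[_-][A-Za-z0-9]{16,}\b"),
    "source_code": re.compile(
        r"^\s*(?:def |class |import |#include\b|function )", re.MULTILINE
    ),
}


def classify_prompt(prompt: str) -> set[str]:
    """Return the sensitive-data categories whose pattern matches the prompt."""
    return {name for name, pattern in PATTERNS.items() if pattern.search(prompt)}


def behavior_flags(app: str, upload_bytes: int, known_apps: set[str],
                   max_upload_bytes: int = 1_000_000) -> set[str]:
    """Flag two simple anomalies: an oversized upload and a never-seen tool."""
    flags = set()
    if upload_bytes > max_upload_bytes:
        flags.add("large_upload")
    if app not in known_apps:
        flags.add("first_use_of_tool")
    return flags
```

Regexes like these produce false positives and miss anything they were not written for, which is the gap that model-based classification is meant to close.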

Behavior Auditing

For compliance, forensic analysis, and long-term governance, a detailed audit trail is non-negotiable. Behavior auditing involves creating an immutable, context-rich log of all GenAI interactions across the enterprise. This goes beyond a simple access log; it should capture the user, device, application, the full content of the prompt, and the resulting security event (e.g., “Blocked,” “Warned,” “Allowed”). This comprehensive record is invaluable for investigating incidents, demonstrating compliance with regulations like GDPR or CCPA, and understanding exactly how these powerful tools are being utilized. Should a security incident occur, this audit trail provides the forensic evidence needed to determine the scope of the breach and remediate the issue.
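One common way to approximate an immutable log in software is a hash chain, in which each entry stores the hash of the previous one. The sketch below is an assumption about how such a log could be built, not a description of any vendor's implementation. The `AuditLog` class and its field names are hypothetical, and the result is tamper-evident rather than strictly immutable.

```python
import hashlib
import json


class AuditLog:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, user: str, device: str, app: str,
               prompt: str, outcome: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else "0" * 64
        body = {"user": user, "device": device, "app": app,
                "prompt": prompt, "outcome": outcome, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON so the same fields always hash to the same value.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

If someone later changes a stored outcome from "Blocked" to "Allowed", `verify()` returns `False`, which is the property an investigator needs from the trail.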

From Data to Decisions: The Power of AI-Driven Observability Insights

The ultimate goal of observability is not just to collect data but to derive intelligence from it. By aggregating and analyzing the information gathered through monitoring and risk detection, organizations can unlock AI-driven observability insights. These insights provide a strategic, bird’s-eye view of the entire GenAI ecosystem within the enterprise.

Security leaders can answer critical questions such as:

- Which SaaS-based GenAI tools are most popular among employees?

- Are there specific departments or user groups exhibiting higher-risk behaviors?

- What are the most common categories of sensitive data that employees are attempting to use with AI?

- Which AI applications are providing the most business value, justifying formal sanctioning and investment?

These AI-driven observability insights enable a shift from purely reactive security enforcement to strategic, data-informed governance. They empower CISOs to have meaningful conversations with business leaders about risk appetite, develop targeted user training programs, and craft security policies that are based on actual usage patterns rather than assumptions. This transforms the security function from a blocker of innovation into an enabler of secure and responsible AI adoption.
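As a rough illustration of how logged events turn into answers to the questions above, the sketch below aggregates a few made-up events with `collections.Counter`. The event fields and sample values are invented for the example; they are not real usage data.

```python
from collections import Counter

# Hypothetical audit events; the field names are assumptions for illustration.
events = [
    {"app": "chat.example", "department": "Engineering",
     "category": "source_code", "outcome": "Blocked"},
    {"app": "chat.example", "department": "Marketing",
     "category": None, "outcome": "Allowed"},
    {"app": "summarize.example", "department": "Product",
     "category": "pii", "outcome": "Blocked"},
    {"app": "chat.example", "department": "Engineering",
     "category": "api_key", "outcome": "Warned"},
]


def summarize(events: list[dict]) -> dict:
    """Aggregate raw events into the counts behind the first three questions."""
    risky = [e for e in events if e["outcome"] != "Allowed"]
    return {
        "tool_popularity": Counter(e["app"] for e in events).most_common(),
        "risky_events_by_department": Counter(
            e["department"] for e in risky).most_common(),
        "sensitive_categories": Counter(
            e["category"] for e in risky if e["category"]).most_common(),
    }


report = summarize(events)
```

Usage counts alone do not measure business value, so the fourth question would need additional signals beyond what this sketch collects.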

Implementation Models: The Browser-Layer Advantage

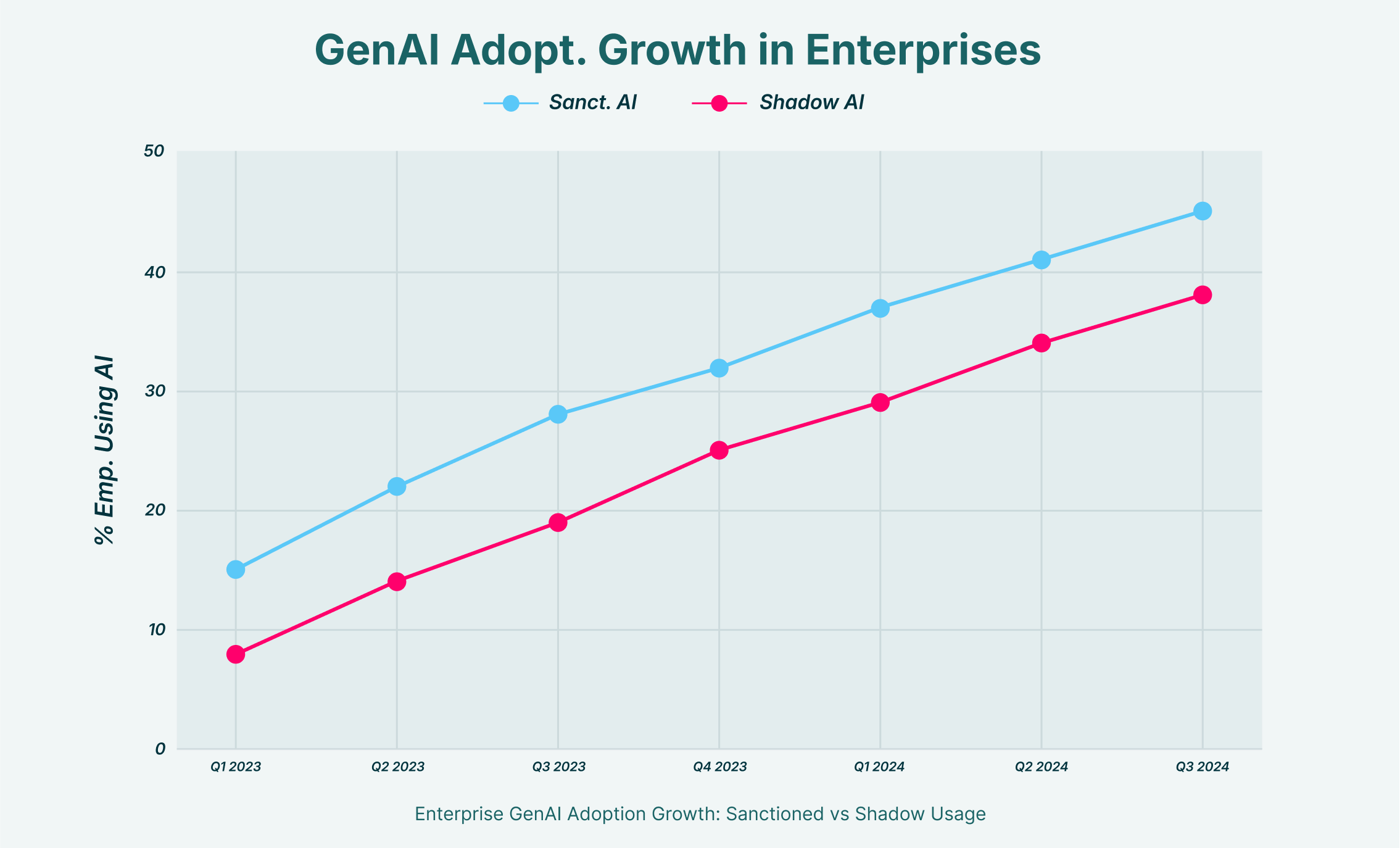

When it comes to implementing AI observability, two primary technical approaches emerge: API-level integration and browser-level monitoring. While API integration can provide deep visibility into sanctioned models, it leaves a critical gap: shadow AI. Employees are free to use any GenAI tool available through their web browser, and these unsanctioned applications will remain completely invisible to an API-only solution.

This is where a browser-based approach, like LayerX Security’s enterprise browser extension, provides a clear advantage. By operating directly within the browser, the solution can monitor all web-based GenAI activity, regardless of the application. It sees every prompt and file upload submitted through the browser, to any site, which closes the shadow AI blind spot for web-based tools. This allows organizations to apply a consistent layer of AI monitoring and AI-powered risk detection across every tool their employees might use, from established platforms like ChatGPT to obscure new entrants. It offers the broad coverage needed to manage enterprise-wide GenAI risk.