The integration of Generative AI (GenAI) into enterprise workflows marks a pivotal moment in business evolution. Companies are harnessing Large Language Models (LLMs) to accelerate innovation, automate complex processes, and unlock new efficiencies. Yet, this powerful technological wave carries with it a new class of sophisticated threats. Among the most insidious is the Denial of Wallet (DoW) attack, a malicious campaign engineered not to steal data or crash servers, but to systematically drain an organization’s financial resources. By exploiting the consumption-based pricing models of GenAI services, attackers can inflict significant monetary damage, often without triggering traditional security alarms.

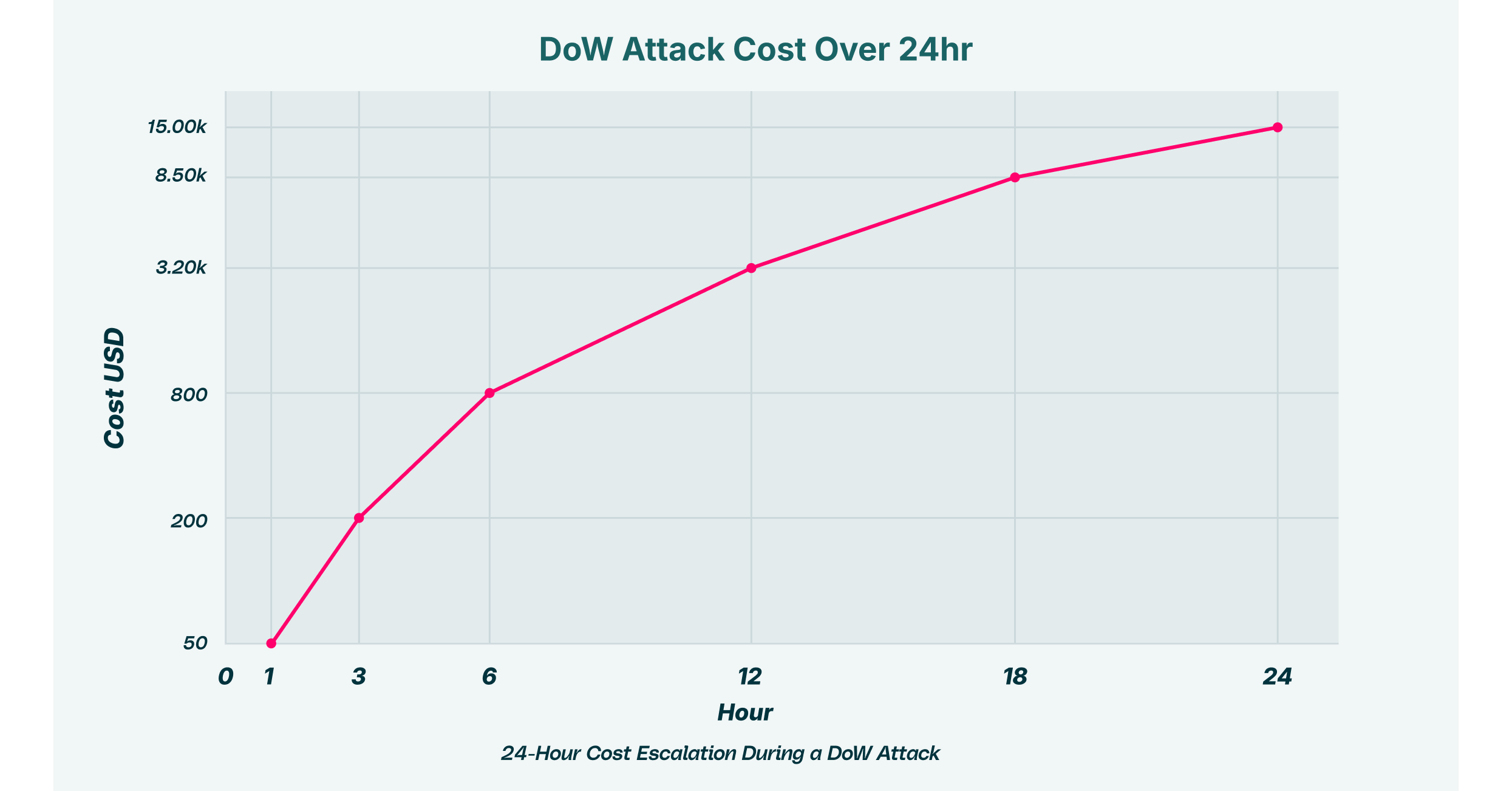

Consider a scenario: an adversary identifies a subtle flaw in a company’s public-facing, GenAI-powered customer support tool. By feeding it a specially crafted prompt, they force the application into a resource-intensive loop that generates lengthy, complex responses. Each cycle consumes tokens, and each token adds to the company’s monthly bill from its AI provider. What begins as a minor anomaly quickly escalates into a full-blown financial hemorrhage. This is the core of a DoW attack. This article explores the mechanics of these attacks, the severe financial risk they pose, and the modern defense strategies needed to protect against unauthorized LLM usage and other forms of costly GenAI exploitation.

The New Frontier of Financial Cyber Threats: Understanding the DoW Attack

Unlike a traditional Denial of Service (DoS) attack, which aims to render a system unusable for legitimate users, a DoW attack has a different objective: weaponizing an organization’s own operational expenses against it. The foundation of this threat lies in the economic model of the GenAI ecosystem. Major providers such as OpenAI, Google, AWS (through Amazon Bedrock), and Anthropic typically charge customers on a pay-per-use basis, billing for the number of “tokens” consumed during an interaction. Tokens are the fundamental units of text an LLM processes, and both the prompt sent to the model and the response it generates count toward the bill. A longer or more complex query therefore consumes more tokens and directly increases the cost.
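To make the pricing model concrete, here is a minimal sketch of per-request cost arithmetic. The prices are invented round numbers chosen for readability, not any provider's real rates, and the names `TokenPricing`, `request_cost`, and `hourly_cost` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical prices in USD per 1,000 tokens (not real provider rates)."""
    input_per_1k: float
    output_per_1k: float


def request_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: input and output tokens are priced separately."""
    input_cost = (input_tokens / 1000) * pricing.input_per_1k
    output_cost = (output_tokens / 1000) * pricing.output_per_1k
    return input_cost + output_cost


def hourly_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int,
                requests_per_hour: int) -> float:
    """Cost of repeating the same request at a steady rate for one hour."""
    return request_cost(pricing, input_tokens, output_tokens) * requests_per_hour


# Illustrative numbers only.
pricing = TokenPricing(input_per_1k=0.5, output_per_1k=1.5)
normal = request_cost(pricing, input_tokens=200, output_tokens=400)
abusive = request_cost(pricing, input_tokens=4000, output_tokens=8000)
print(f"typical request: ${normal:.2f}, inflated request: ${abusive:.2f}")
print(f"inflated request at 500/hour: ${hourly_cost(pricing, 4000, 8000, 500):.2f}")
```

The point of the sketch is the shape of the formula rather than the figures: cost scales linearly with both token count and request rate, so an attacker who inflates both multiplies the bill.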

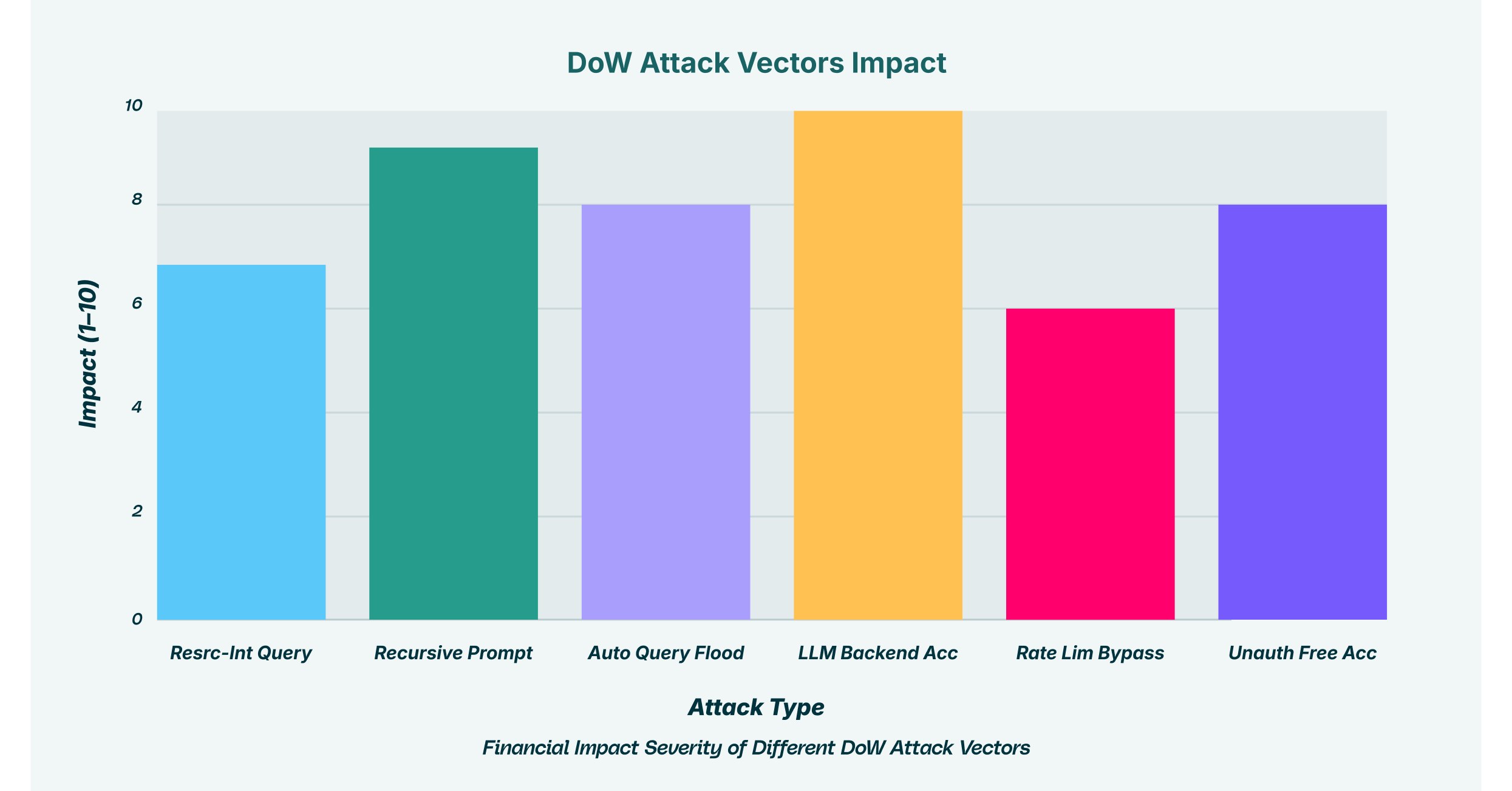

Attackers manipulate this model through various forms of LLM token abuse. They design inputs intended to maximize consumption, turning a predictable utility into a financial liability. This strategy of causing excessive token usage can cripple a GenAI budget in hours. Key techniques include:

- Resource-Intensive Queries: Attackers submit prompts that force the model to perform computationally expensive tasks, such as writing thousands of lines of code, analyzing vast documents, or solving complex mathematical problems.

- Recursive Prompting: A malicious user can craft an input that causes the LLM to enter a loop, where its own output becomes the input for the next query. Because each pass carries the previous output forward, token use can compound with every iteration.

- Automated Query Flooding: Using simple scripts or automation frameworks, an adversary can bombard a GenAI endpoint with a high volume of requests, each incurring a small cost that rapidly accumulates.

When these methods are executed at scale from multiple sources simultaneously, they become a distributed DoW attack. This coordinated approach makes it difficult to trace the attack to a single origin, allowing the financial drain to continue unabated. These token consumption attacks are particularly dangerous because the malicious queries can appear as legitimate traffic, bypassing security systems that are not designed to analyze the intent or resource impact of a prompt.
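One common-sense guard against the recursive pattern described above is to cap both the number of chained calls and the cumulative tokens a single chain may consume. The following is a minimal sketch under assumptions: `ChainGuard`, `run_chain`, and the `call_model` callable (returning text, tokens used, and a completion flag) are hypothetical names, not part of any provider SDK.

```python
class ChainBudgetExceeded(Exception):
    """Raised when a chain of model calls exceeds its hop or token allowance."""


class ChainGuard:
    def __init__(self, max_hops: int, max_total_tokens: int):
        self.max_hops = max_hops
        self.max_total_tokens = max_total_tokens
        self.hops = 0
        self.total_tokens = 0

    def record(self, tokens_used: int) -> None:
        """Account for one model call; raise once either limit is crossed."""
        self.hops += 1
        self.total_tokens += tokens_used
        if self.hops > self.max_hops:
            raise ChainBudgetExceeded(f"hop limit {self.max_hops} exceeded")
        if self.total_tokens > self.max_total_tokens:
            raise ChainBudgetExceeded(f"token limit {self.max_total_tokens} exceeded")


def run_chain(call_model, prompt: str, guard: ChainGuard) -> str:
    """Feed each output back in as the next input until the model reports done.

    `call_model` is a hypothetical callable returning (text, tokens_used, done).
    """
    text = prompt
    while True:
        text, tokens_used, done = call_model(text)
        guard.record(tokens_used)
        if done:
            return text


def runaway_model(text: str):
    """Stand-in for a looping application: doubles its input and never finishes."""
    output = text + text
    return output, len(output), False


guard = ChainGuard(max_hops=10, max_total_tokens=100)
try:
    run_chain(runaway_model, "ab", guard)
except ChainBudgetExceeded as exc:
    print(f"chain stopped after {guard.hops} calls: {exc}")
```

The guard does not try to judge intent. It simply bounds the worst case, which is often enough to turn an open-ended drain into a fixed, small loss.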

The Attacker’s Playbook: Gaining Access and Exploiting Systems

A successful Denial of Wallet attack often hinges on an attacker’s ability to gain unimpeded access to a company’s GenAI resources. Their motivations vary, from pure disruption to a more calculated goal: gaining unauthorized free access to LLMs by hijacking another organization’s paid infrastructure. Once they bypass the initial defenses, they can exploit the system with impunity.

A primary target for attackers is the API layer. Sophisticated adversaries hunt for vulnerabilities that grant them LLM backend access, allowing them to communicate directly with the model and sidestep the safety protocols, system prompts, and usage controls built into the user-facing application. This level of access gives them free rein to execute costly operations. A prevalent technique for achieving this is “LLMjacking,” where attackers use stolen API keys or compromised non-human identity credentials to orchestrate unauthorized LLM usage. Research shows that once credentials are stolen, attackers first perform reconnaissance to see which AI models are available before launching their attack, carefully planning how to maximize damage or utility.

To amplify their efforts, attackers focus on achieving a rate limit bypass. API providers implement rate limits to prevent a single user or IP address from overwhelming a service with too many requests in a short period. However, determined attackers employ several methods to circumvent these protections, such as distributing requests across a botnet of compromised devices or manipulating client-identifying request headers (a spoofed X-Forwarded-For value, for example) so that the server attributes each request to a new client and effectively restarts the request counter. Overcoming these limits is a critical step in launching a large-scale, financially devastating attack.
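Header manipulation only works when the limiter trusts values the client controls. A minimal sketch of the alternative is a token bucket keyed on the authenticated API key, with each request charged a caller-supplied cost (for instance, an estimated token count) instead of a flat count of one. `TokenBucket` and `KeyedRateLimiter` are hypothetical names, and the injectable `clock` exists only to make the example deterministic.

```python
import time


class TokenBucket:
    """Token bucket: `capacity` is the burst size, refilled at `rate` per second."""

    def __init__(self, capacity: float, rate: float, now: float):
        self.capacity = capacity
        self.rate = rate
        self.level = capacity
        self.updated = now

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Refill for the time elapsed since the last call, up to capacity.
        elapsed = max(0.0, now - self.updated)
        self.level = min(self.capacity, self.level + elapsed * self.rate)
        self.updated = now
        if self.level >= cost:
            self.level -= cost
            return True
        return False


class KeyedRateLimiter:
    """Rate limiter keyed on the authenticated API key, not on request headers."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.buckets = {}

    def allow(self, api_key: str, cost: float = 1.0) -> bool:
        now = self.clock()
        bucket = self.buckets.get(api_key)
        if bucket is None:
            bucket = TokenBucket(self.capacity, self.rate, now)
            self.buckets[api_key] = bucket
        return bucket.allow(now, cost)


# Deterministic demo using a fake clock.
fake_time = [0.0]
limiter = KeyedRateLimiter(capacity=3, rate=1.0, clock=lambda: fake_time[0])
print([limiter.allow("key-A") for _ in range(4)])  # the burst is capped at capacity
fake_time[0] = 2.0
print(limiter.allow("key-A"))  # two seconds of refill make room again
```

Keying on the credential does not help when the attacker holds many stolen keys or a botnet of distinct accounts, so a limiter like this is best treated as one layer alongside the budget and anomaly controls discussed elsewhere in this article.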

Assessing the True Cost: The Cascade of GenAI Financial Risk

The damage from a DoW attack extends far beyond the initial, shocking invoice from a cloud provider. The ultimate impact is a cascade of financial, operational, and reputational harm. The most immediate consequence of unchecked token consumption is budget exhaustion, which directly leads to service unavailability. When the monthly or quarterly budget for a GenAI service is depleted, the service is often automatically suspended, disrupting critical business functions that rely on it, from internal productivity tools to customer-facing chatbots.
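Budget exhaustion is easier to manage when spending is tracked continuously and a warning fires well before the hard stop. The sketch below is one assumed way to model that: `BudgetTracker`, `BudgetState`, and the 80% warning threshold are illustrative choices, not a description of any provider's billing controls.

```python
from enum import Enum


class BudgetState(Enum):
    OK = "ok"
    WARN = "warn"
    BLOCK = "block"


class BudgetTracker:
    """Tracks spend against a budget and reports when to warn or block."""

    def __init__(self, monthly_budget_usd: float, warn_fraction: float = 0.8):
        if monthly_budget_usd <= 0:
            raise ValueError("monthly_budget_usd must be positive")
        if not 0 < warn_fraction < 1:
            raise ValueError("warn_fraction must be between 0 and 1")
        self.monthly_budget_usd = monthly_budget_usd
        self.warn_fraction = warn_fraction
        self.spent_usd = 0.0

    def state(self) -> BudgetState:
        if self.spent_usd >= self.monthly_budget_usd:
            return BudgetState.BLOCK
        if self.spent_usd >= self.warn_fraction * self.monthly_budget_usd:
            return BudgetState.WARN
        return BudgetState.OK

    def record(self, cost_usd: float) -> BudgetState:
        """Add the cost of one request and return the resulting state."""
        self.spent_usd += cost_usd
        return self.state()


tracker = BudgetTracker(monthly_budget_usd=100.0)
for cost in (50.0, 35.0, 20.0):
    print(f"spent ${tracker.spent_usd + cost:.2f}: {tracker.record(cost).value}")
```

In practice the WARN state would page someone or tighten limits, so that reaching BLOCK, and the service outage that comes with it, is a deliberate decision and not a surprise.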

This introduces a significant resource consumption risk that must be managed as a core business continuity concern. Every department, from marketing to product development, may be leveraging GenAI, and a sudden shutdown can halt projects and impact revenue. The operational disruption is compounded by the fact that security teams must divert their attention to investigate and remediate the incident, pulling resources from other critical initiatives.

This risk is amplified by the pervasive issue of “Shadow AI,” where employees use unsanctioned AI tools through personal accounts. LayerX research has found that 67% of logins to GenAI tools in an enterprise setting are made through personal accounts. This creates a massive blind spot, as these interactions bypass corporate security controls entirely. An employee might inadvertently use an insecure third-party tool that becomes the vector for a DoW attack, or a compromised personal account could be used to abuse the company’s sanctioned AI services, all contributing to the risk of unauthorized LLM usage.

Why Traditional Security Falls Short and the Need for a New Defense Paradigm

Legacy security solutions are fundamentally ill-equipped to defend against Denial of Wallet attacks. Tools like firewalls, secure web gateways, and even many cloud access security brokers (CASBs) operate at the network or application identity level. They can see that a user is accessing a sanctioned tool like ChatGPT, but they have no visibility into the specifics of the interaction within the encrypted browser session. They cannot analyze the content of a prompt to determine if it is designed to trigger excessive token usage or if an employee is pasting sensitive data.

| Defense Method | DoW Attack Detection | GenAI Context Visibility |
| --- | --- | --- |
| Traditional Firewall | No | None |
| Secure Web Gateway | Limited | Basic |
| Cloud Access Security Broker | Partial | Application-level |
| LayerX Browser Extension | Yes | Full Content Analysis |

Because these attacks often mimic legitimate user behavior, just at a higher volume or with greater complexity, they do not trigger alerts in traditional anomaly detection systems. The requests are valid, the users may be authenticated, and the destination is an approved application. The security stack is blind to the context and intent of the interaction, which is where the true risk resides. To effectively combat these threats, security must shift from the network to the last mile of the user journey: the browser itself.
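Detecting "legitimate-looking but too much" requires measuring resource impact per user instead of request validity. As a rough illustration, the sketch below flags a usage figure that sits far above a user's own recent baseline, using the median and median absolute deviation (MAD). The function name `is_token_spike`, the threshold of 6, the minimum history of 5 samples, and the fallback spread are all assumptions chosen for the example.

```python
from statistics import median


def is_token_spike(history, current, threshold=6.0):
    """Return True if `current` is far above the median of `history`.

    Uses the median absolute deviation (MAD), which is less distorted by
    earlier spikes than a mean and standard deviation would be.
    """
    if len(history) < 5:
        return False  # too little data to call anything anomalous
    centre = median(history)
    mad = median(abs(x - centre) for x in history)
    # A perfectly flat history has MAD 0; fall back to 10% of the median.
    spread = mad if mad > 0 else max(1.0, 0.1 * centre)
    return (current - centre) / spread > threshold


daily_tokens = [1000, 1200, 900, 1100, 1000]  # hypothetical per-user daily totals
print(is_token_spike(daily_tokens, 1150))   # within the normal range
print(is_token_spike(daily_tokens, 50000))  # far above the baseline
```

A per-user baseline like this catches the case the paragraph above describes: every individual request is valid, yet the aggregate consumption is wildly out of character.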

Proactive Defense with LayerX: Securing GenAI from Financial Exploitation

LayerX provides a robust defense against Denial of Wallet attacks by securing the primary interface for GenAI interaction: the browser. The LayerX Enterprise Browser Extension delivers the granular visibility and contextual control needed to neutralize these threats before they can inflict financial damage. By analyzing every user interaction with any web-based application or GenAI tool, LayerX can identify and mitigate the full spectrum of risks.

This browser-centric approach allows security teams to enforce granular policies that directly counter the techniques used in token consumption attacks. For example, administrators can establish rules to:

- Prevent Excessive Token Usage: Set limits on the length or complexity of prompts that can be submitted to GenAI platforms.

- Block High-Risk Behaviors: Detect and terminate sessions where users attempt to submit recursive prompts or engage in other forms of LLM token abuse.

- Neutralize Shadow AI: Discover all GenAI tools being used across the enterprise, including unsanctioned ones, and apply consistent security policies to prevent unauthorized LLM usage.

- Mitigate Insider Risk: Prevent employees from pasting large volumes of data or sensitive information into GenAI prompts, protecting against both data exfiltration and accidental resource consumption.
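To illustrate what a prompt-level policy of this kind might check, here is a generic sketch. It is not LayerX's implementation or API; `PromptPolicy`, `evaluate_prompt`, the default limits, and the repeated-line heuristic are all hypothetical and stand in for whatever rules an administrator would actually configure.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PromptPolicy:
    """Hypothetical limits applied to a prompt before it is submitted."""
    max_chars: int = 8000
    max_repeated_line_ratio: float = 0.5
    min_lines_for_repetition_check: int = 4


def evaluate_prompt(prompt: str, policy: PromptPolicy) -> List[str]:
    """Return the names of any policy rules the prompt violates."""
    violations = []
    if len(prompt) > policy.max_chars:
        violations.append("prompt_too_long")

    # Crude repetition heuristic: share of non-empty lines that are duplicates.
    lines = [line.strip() for line in prompt.splitlines() if line.strip()]
    if len(lines) >= policy.min_lines_for_repetition_check:
        duplicates = len(lines) - len(set(lines))
        if duplicates / len(lines) > policy.max_repeated_line_ratio:
            violations.append("highly_repetitive")
    return violations


policy = PromptPolicy()
print(evaluate_prompt("Summarize this ticket for me.", policy))
print(evaluate_prompt("x" * 9000, policy))
print(evaluate_prompt("\n".join(["repeat this forever"] * 10), policy))
```

Character counts are only a proxy for tokens, and a repetition ratio will not catch every abusive prompt, so checks like these are a first filter that works best combined with usage monitoring.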

With LayerX, organizations gain a comprehensive view of their GenAI attack surface. Security teams can see not only which tools are being used, but how they are being used, by whom, and with what data. This allows for the creation of proactive policies that align GenAI usage with business needs and budgetary realities, ensuring that this transformative technology can be adopted safely and sustainably.

As enterprises continue to integrate GenAI deeper into their operations, the financial incentives for attackers to launch Denial of Wallet attacks will only increase. The unique GenAI financial risk posed by these threats requires a paradigm shift in security thinking. Proactive, context-aware defenses that operate at the browser level are no longer optional but essential for protecting an organization’s resources and ensuring its investment in AI yields innovation, not financial ruin.