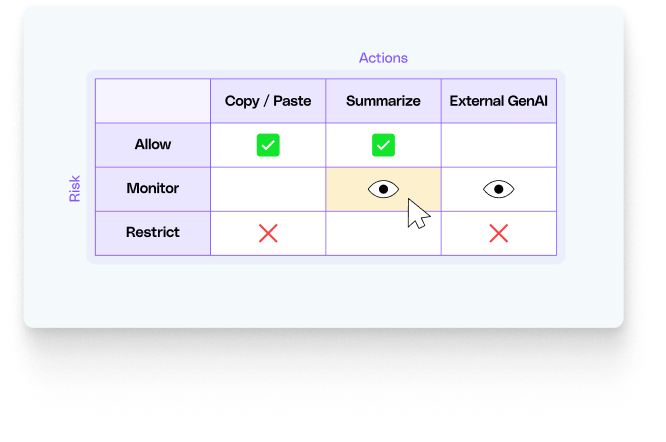

To implement this kind of granular, context-aware security, organizations are increasingly turning to solutions like LayerX. By operating directly within the browser, LayerX provides the deep visibility and real-time control needed to manage modern AI risks.

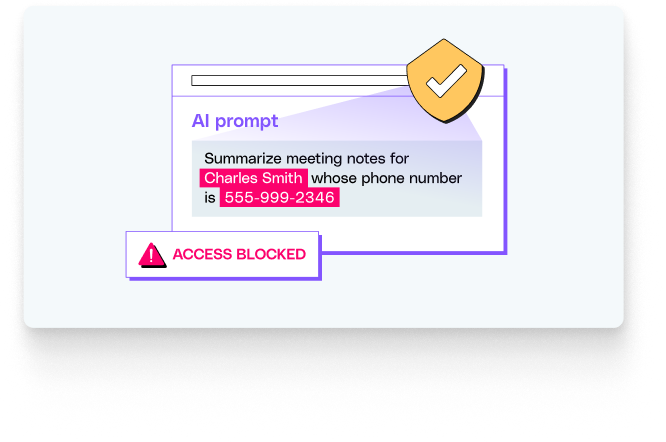

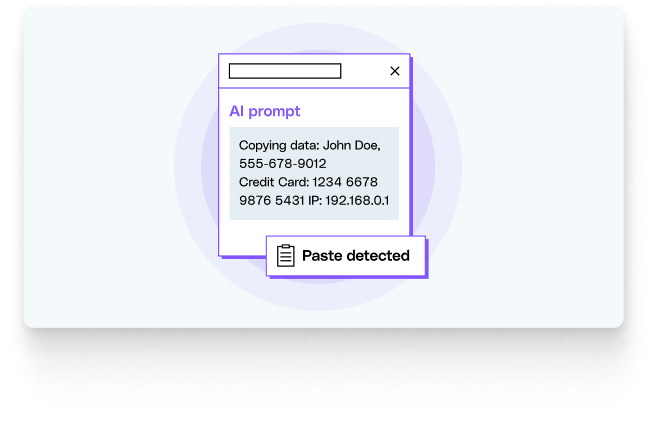

Imagine a marketing employee using an unauthorized AI tool to help draft a press release. They attempt to paste in a document containing unannounced financial figures and customer names. A traditional security solution would likely be blind to this action. However, a browser-level solution like LayerX can: