Generative AI has fundamentally reshaped the way organizations operate. Teams across every department are adopting tools that promise to accelerate coding, content creation, and data analysis. This rapid adoption often happens without formal oversight. Employees are not waiting for permission. They are signing up for services using personal credentials and pasting sensitive corporate data into public models. This creates a massive, unmonitored ecosystem of “Shadow AI” that traditional firewalls cannot see or control.

Security leaders face a difficult challenge. Blocking AI entirely is often impractical and stifles innovation. Allowing unrestricted access invites data leakage and compliance violations. The solution lies in a well-structured enterprise AI policy. This document serves as the cornerstone of your governance strategy. It defines the rules of engagement. It establishes clear boundaries for what data can be shared and which tools are permissible. Without this framework, your organization remains vulnerable to data exfiltration, regulatory fines, and intellectual property theft.

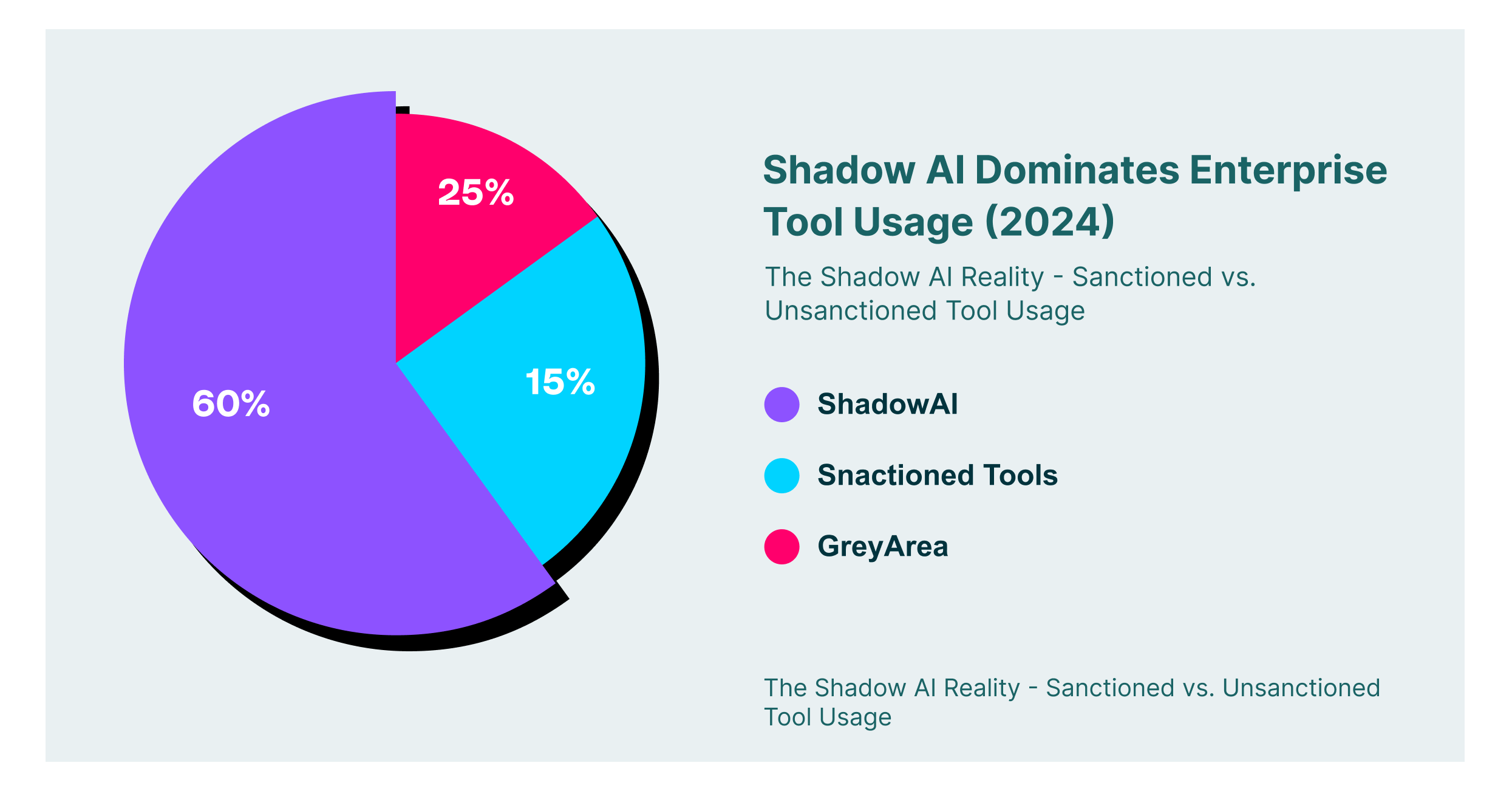

The Scale of the Shadow AI Problem

The disparity between what IT teams approve and what employees actually use is growing. Most organizations believe they have a handle on their software inventory. The reality is often quite different. Browser-based extensions and web applications bypass network perimeter controls. This allows data to flow directly from the user’s screen to third-party servers.

[Figure: The Shadow AI Reality: Sanctioned vs. Unsanctioned Tool Usage]

Security teams must recognize that “Shadow AI” is not just a nuisance. It is a critical vulnerability. Unsanctioned tools often lack enterprise-grade security controls. They may claim rights to use your data for model training. A comprehensive AI security policy is the first step in reclaiming visibility and control over this decentralized environment.

Core Pillars of an AI Security Policy Template

Creating a policy from scratch can be daunting. It helps to view the document as a set of modular components. Each module addresses a specific aspect of the user-AI interaction. A robust AI security policy template should not be a static list of “do nots.” It must be a dynamic framework that adapts to new tools and threats. It needs to cover identity, data, and application security in equal measure.

The first pillar is data classification. You cannot protect what you do not define. Your policy must explicitly state which categories of data are permissible for AI input. Public marketing copy might be safe. Customer PII and unreleased source code are definitely not. The policy must leave no room for ambiguity here. Users need to know exactly where the line is drawn before they open a prompt window.

Identity and Access Management

The second pillar focuses on who is using the tools. Anonymity is the enemy of security. Your AI security policy template should mandate the use of corporate identities for all business-related AI tasks. Personal email accounts should be strictly prohibited for enterprise work. This ensures that when an employee leaves the company, their access can be revoked while the data and the history of their interactions remain with the organization. It also allows for Single Sign-On (SSO) integration. SSO provides a centralized kill switch if an account is compromised.
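The corporate-identity rule lends itself to a mechanical check. The sketch below is a minimal illustration, not a product feature: the domain list `CORPORATE_DOMAINS` and the helper `is_corporate_identity` are hypothetical names chosen for this example, and a real deployment would more likely rely on the identity provider behind SSO.

```python
# Hypothetical list of domains the organization treats as corporate identities.
CORPORATE_DOMAINS = {"example.com", "corp.example.com"}


def is_corporate_identity(email: str) -> bool:
    """Return True if the address belongs to an approved corporate domain."""
    # Split on the last "@"; an address without "@" leaves the separator empty.
    local, sep, domain = email.strip().rpartition("@")
    if not sep or not local:
        return False
    return domain.lower() in CORPORATE_DOMAINS
```

A sign-up made with `alice@gmail.com` would fail this check, while `alice@example.com` would pass.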

Application Vetting and Approval

The third pillar deals with the tools themselves. Not all AI applications are created equal. Some adhere to strict enterprise security standards. Others are little more than data harvesting operations wrapped in a slick interface. Your policy must establish a vetting process. This process should evaluate the vendor’s data handling practices. It should check if they use customer data for training. It should verify their compliance with standards like SOC 2 or ISO 27001. Only tools that pass this gauntlet should be granted “sanctioned” status.
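The vetting criteria above can be captured as a simple checklist. The following is a sketch under stated assumptions: the `VendorProfile` fields and the `vetting_failures` helper are illustrative, and treating either SOC 2 or ISO 27001 as sufficient is an assumption rather than a rule from this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorProfile:
    """Answers collected during vetting; the field names are illustrative."""
    name: str
    trains_on_customer_data: bool
    certifications: frozenset


# Assumption: holding either attestation satisfies the compliance criterion.
ACCEPTED_CERTIFICATIONS = frozenset({"SOC 2", "ISO 27001"})


def vetting_failures(vendor: VendorProfile) -> list:
    """Return the reasons a vendor fails vetting; an empty list means it passes."""
    failures = []
    if vendor.trains_on_customer_data:
        failures.append("uses customer data for model training")
    if not vendor.certifications & ACCEPTED_CERTIFICATIONS:
        failures.append("no SOC 2 or ISO 27001 attestation")
    return failures
```

Returning the list of reasons, rather than a bare pass or fail, gives the reviewer something concrete to send back to the vendor or the requesting team.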

Establishing AI Acceptable Use Standards

An AI acceptable use section translates high-level principles into daily actions. This is the part of the policy that employees will read most often. It needs to be clear, concise, and devoid of legal jargon. It should focus on behavior. It should describe specific scenarios that users encounter in their daily workflows.

For example, developers often use AI to debug code. An AI acceptable use policy might permit pasting snippets of generic logic. It would strictly ban pasting proprietary algorithms or hard-coded API keys. Marketing teams might use AI to draft emails. The policy would allow this but require a human review of the final output to check for accuracy and tone. These practical examples help employees understand how to apply the rules in their specific context.

The “Traffic Light” Protocol

To simplify decision-making, many organizations adopt a traffic light system within their AI acceptable use guidelines.

- Green (Sanctioned): These tools have enterprise licenses. Data protection agreements are signed. Employees can use them freely for most data types, excluding top-secret information.

- Yellow (Tolerated): These are public tools that are useful but lack enterprise controls. Usage is permitted for non-sensitive tasks only. No login is required, and no internal data may be entered.

- Red (Blocked): These tools pose unacceptable risks. They may have a history of security breaches or aggressive data scraping policies. Access is technically blocked at the browser or network level.
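The three tiers can be expressed as a small decision function that combines a tool's tier with the classification of the data being submitted. This is a minimal sketch: the tool hostnames, the three data classes, and the choice to treat unknown tools as blocked are all assumptions made for illustration.

```python
from enum import Enum


class Tier(Enum):
    GREEN = "sanctioned"
    YELLOW = "tolerated"
    RED = "blocked"


class DataClass(Enum):
    PUBLIC = 1       # e.g. published marketing copy
    INTERNAL = 2     # e.g. internal documents
    RESTRICTED = 3   # e.g. customer PII, unreleased source code


# Hypothetical registry; a real one would be fed by the vetting process.
TOOL_TIERS = {
    "approved-assistant.example": Tier.GREEN,
    "public-chatbot.example": Tier.YELLOW,
    "risky-scraper.example": Tier.RED,
}


def may_submit(tool: str, data: DataClass) -> bool:
    """Decide whether data of the given class may be sent to the tool."""
    # Assumption: tools that have not been vetted are treated as blocked.
    tier = TOOL_TIERS.get(tool, Tier.RED)
    if tier is Tier.GREEN:
        return data is not DataClass.RESTRICTED
    if tier is Tier.YELLOW:
        return data is DataClass.PUBLIC
    return False
```

Defaulting unknown tools to Red is a deliberately conservative choice; an organization that prefers to tolerate unvetted tools for public data could default to Yellow instead.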

Navigating Generative AI Security Policy Risks

GenAI introduces unique risks that traditional software policies do not cover. The interactive nature of Large Language Models (LLMs) creates new attack vectors. A specialized generative AI security policy must address these specific threats. It is not enough to secure the access; you must secure the interaction itself.

One major concern is prompt injection. This occurs when malicious instructions are hidden within a block of text. If an employee pastes this text into an LLM, the model might be tricked into ignoring its safety guardrails. Your policy needs to warn users against pasting untrusted content from the web directly into internal AI tools. It should treat external text with the same suspicion as an email attachment from an unknown sender.
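To make the risk concrete, the sketch below flags a few instruction-like phrases in text copied from an external source. It is illustrative only: the pattern list and the `flag_untrusted_text` helper are hypothetical, and fixed phrase matching cannot reliably detect prompt injection, so it should not be read as a defense on its own.

```python
import re

# Illustrative phrases only; real injections vary widely and will evade a fixed list.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"disregard (the |your )?(system prompt|guardrails|rules)", re.IGNORECASE),
    re.compile(r"you are now\b", re.IGNORECASE),
]


def flag_untrusted_text(text: str) -> list:
    """Return the suspicious phrases found in text copied from an external source."""
    hits = []
    for pattern in SUSPICIOUS_PATTERNS:
        match = pattern.search(text)
        if match:
            hits.append(match.group(0))
    return hits
```

A non-empty result is best used as a prompt for the user to stop and review the text, in the same way a mail client warns about an attachment from an unknown sender.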

Managing Hallucinations and Output Integrity

Another critical aspect of a generative AI security policy is the validation of output. AI models are probabilistic, not deterministic. They can confidently present false information as fact. This phenomenon is known as hallucination. Using unverified AI output in critical business decisions can lead to financial loss and reputational damage. The policy must mandate a “human-in-the-loop” for all high-value outputs. Code generated by AI must be reviewed by a senior engineer. Legal documents drafted by AI must be verified by counsel.

[Figure: Common AI Security Policy Violations in Enterprise Environments]

AI Safety Policy and Governance Frameworks

Ad-hoc rules are a good start, but long-term resilience requires a structured approach. Aligning with international standards elevates your AI safety policy from a set of rules to a management system. This demonstrates due diligence to regulators, customers, and board members. It shifts the focus from reactive firefighting to proactive risk management.

Two primary frameworks are currently shaping the industry conversation: ISO 42001 and the NIST AI Risk Management Framework (RMF). Adopting elements from these standards ensures that your AI safety policy and governance strategy are comprehensive and defensible. It provides a common language for discussing AI risk across the organization.

Implementing ISO 42001

ISO/IEC 42001 (often shortened to ISO 42001) is an international standard for AI management systems. It follows the familiar Plan-Do-Check-Act cycle found in other ISO management system standards. For an AI safety policy and governance program, the most relevant clauses are often Leadership (Clause 5) and Operation (Clause 8).

- Leadership: This clause requires top management to take accountability for the effectiveness of the AI management system. It ensures that resources are available and that the policy aligns with strategic business objectives.

- Operation: This clause focuses on the assessment and treatment of AI risks. It mandates that organizations identify potential unintended consequences of AI systems and implement controls to mitigate them.

Applying the NIST AI RMF

The NIST AI RMF is a voluntary framework that is highly regarded in the US public and private sectors. It is organized around four core functions: Govern, Map, Measure, and Manage.

- Govern: This function fosters a culture of risk management. It involves establishing the policies and procedures that guide AI development and use.

- Map: This function establishes the context. It identifies the specific AI systems in use and the potential risks associated with them.

- Measure: This function employs quantitative and qualitative methods to analyze risks. It answers the question: “How much risk are we actually carrying?”

- Manage: This function prioritizes and treats the risks. It involves the deployment of specific controls, such as the AI acceptable use policy itself, to bring risk down to an acceptable level.
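The Map, Measure, and Manage functions can be illustrated with a small risk register. The NIST AI RMF does not prescribe a scoring formula; the likelihood-times-impact score, the 1 to 5 scales, and the names `AIRisk` and `risks_to_treat` below are assumptions chosen to keep the sketch simple.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIRisk:
    """One entry in a hypothetical AI risk register."""
    system: str        # Map: which AI system the risk belongs to
    description: str
    likelihood: int    # Measure: 1 (rare) to 5 (almost certain)
    impact: int        # Measure: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def risks_to_treat(register: list, tolerance: int) -> list:
    """Manage: return risks scoring above the tolerance, highest score first."""
    above = [risk for risk in register if risk.score > tolerance]
    return sorted(above, key=lambda risk: risk.score, reverse=True)
```

The `tolerance` argument stands in for the "acceptable level" of risk, which the Govern function would set as a matter of policy.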

The Role of Browser Security in Enforcement

Writing an enterprise AI policy is only half the battle. Enforcing it is the other half. Traditional network security tools struggle with modern AI usage. They can block a domain, but they cannot see what happens inside the session. They cannot distinguish between a user asking ChatGPT for a cookie recipe and a user pasting a customer database. This lack of granularity leads to over-blocking or dangerous blind spots.

The browser is the new perimeter. It is the interface through which almost all modern work occurs. To effectively enforce an AI security policy, you need controls that sit within the browser itself. This allows for real-time analysis of user actions. It enables context-aware decisions that balance security with productivity. Instead of a binary “block or allow,” browser security can offer nuanced controls like “allow read-only” or “allow but redact sensitive data.”

Automating Policy with LayerX

LayerX provides the technical capabilities to turn your AI security policy into reality. It operates as an enterprise browser extension. This unique position allows it to monitor and control user interactions with any web application, including sanctioned and unsanctioned AI tools. LayerX acts as the enforcement arm of your governance strategy.

One of the key capabilities of LayerX is the discovery of “Shadow AI.” It automatically catalogs every AI site accessed by your employees. It provides a comprehensive inventory that allows you to see exactly which tools are in use. This visibility is essential for updating your AI security policy template and keeping it relevant. You cannot govern what you do not know exists. LayerX illuminates the dark corners of your SaaS ecosystem.
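The idea behind such an inventory can be sketched in a few lines. This is not LayerX's implementation or API; it is a generic illustration that assumes a hypothetical catalogue of AI hostnames (`KNOWN_AI_HOSTS`), a sanctioned subset (`SANCTIONED_HOSTS`), and a list of visited URLs as input.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical catalogue of AI service hostnames and the sanctioned subset.
KNOWN_AI_HOSTS = {"approved-assistant.example", "public-chatbot.example"}
SANCTIONED_HOSTS = {"approved-assistant.example"}


def shadow_ai_inventory(visited_urls: list) -> Counter:
    """Count visits to known AI hosts that are not on the sanctioned list."""
    counts = Counter()
    for url in visited_urls:
        # hostname is None for strings that are not absolute URLs.
        host = (urlsplit(url).hostname or "").lower()
        if host in KNOWN_AI_HOSTS and host not in SANCTIONED_HOSTS:
            counts[host] += 1
    return counts
```

The resulting counts show which unsanctioned tools are used most, which is a reasonable starting point for deciding what to vet next.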

Real-Time DLP and Education

LayerX goes beyond simple discovery. It offers active Data Loss Prevention (DLP) capabilities tailored for GenAI. When a user attempts to paste data that violates the AI acceptable use policy, LayerX intervenes. It can block the paste action entirely. It can also selectively redact sensitive strings, such as credit card numbers or PII, while allowing the rest of the prompt to proceed. This allows employees to use the tool safely without exposing the organization to risk. Furthermore, LayerX can display a custom pop-up explaining the violation. This turns every blocked action into a micro-training moment, reinforcing the AI safety policy in real time.
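Selective redaction can be illustrated with a generic example. The sketch below is not how LayerX works internally; it assumes a simple approach in which candidate card numbers are found with a regular expression and confirmed with the Luhn checksum before being replaced. The names `luhn_valid` and `redact_card_numbers` are hypothetical.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Check a digit string against the Luhn checksum used by payment cards."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit, counting from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def redact_card_numbers(prompt: str) -> str:
    """Replace Luhn-valid card numbers with a placeholder, leaving other text intact."""
    def replace(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group(0))
        return "[REDACTED CARD]" if luhn_valid(digits) else match.group(0)

    return CARD_CANDIDATE.sub(replace, prompt)
```

The checksum step reduces false positives: a 16-digit order number that fails the Luhn check is left untouched, so the rest of the prompt still reaches the tool unchanged.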

AI Safety Policy Implementation Checklist

Use the following checklist to ensure that your policy covers all critical areas. This table maps specific policy components to the technical controls required to enforce them.

| Policy Component | Key Requirement | LayerX Enforcement Capability |
| --- | --- | --- |
| Tool Inventory | Maintain a live list of sanctioned and blocked tools. | Real-time discovery of Shadow SaaS and AI apps across the enterprise. |
| Data Loss Prevention (DLP) | Define specific data types banned from AI input. | Prevent pasting or uploading of defined sensitive data types. |
| Identity Mgmt | Mandate corporate SSO for all AI accounts. | Detect and block usage of personal email accounts for business apps. |
| Extension Control | Vet all browser plugins accessing AI data. | Risk scoring and blocking of malicious or risky extensions. |
| Audit Trail | Log all prompts and AI interactions for compliance. | Granular forensic logs of user-AI interactions for audit purposes. |
| Role-Based Access | Define who can use which tool and how. | Enforce access policies based on user groups and Active Directory. |

Looking Forward to AI’s Adoption in Enterprise Security

The adoption of Generative AI is not a trend that will pass. It is a fundamental shift in how business is done. Your enterprise AI policy is the mechanism that allows your organization to navigate this shift safely. It is not about creating hurdles. It is about building guardrails. By defining clear AI acceptable use guidelines and backing them with strong technical controls, you empower your workforce to innovate.

Security in the AI era requires a combination of clear governance and active enforcement. A static AI security policy template is a good starting point, but it must be operationalized. LayerX provides the browser-level visibility and control necessary to make your policy effective. It bridges the gap between the document and the user. It ensures that your AI safety policy and governance efforts result in actual risk reduction. Start with visibility, define your rules, and enforce them at the edge. This is the path to secure AI adoption.