Effective security now demands that we control how employees interact with generative models, not just the models themselves. The top 10 AI governance practices for 2026 focus on securing the “last mile” of user adoption, where data leaves the enterprise and enters the browser.

What Are AI Governance Practices and Why They Matter

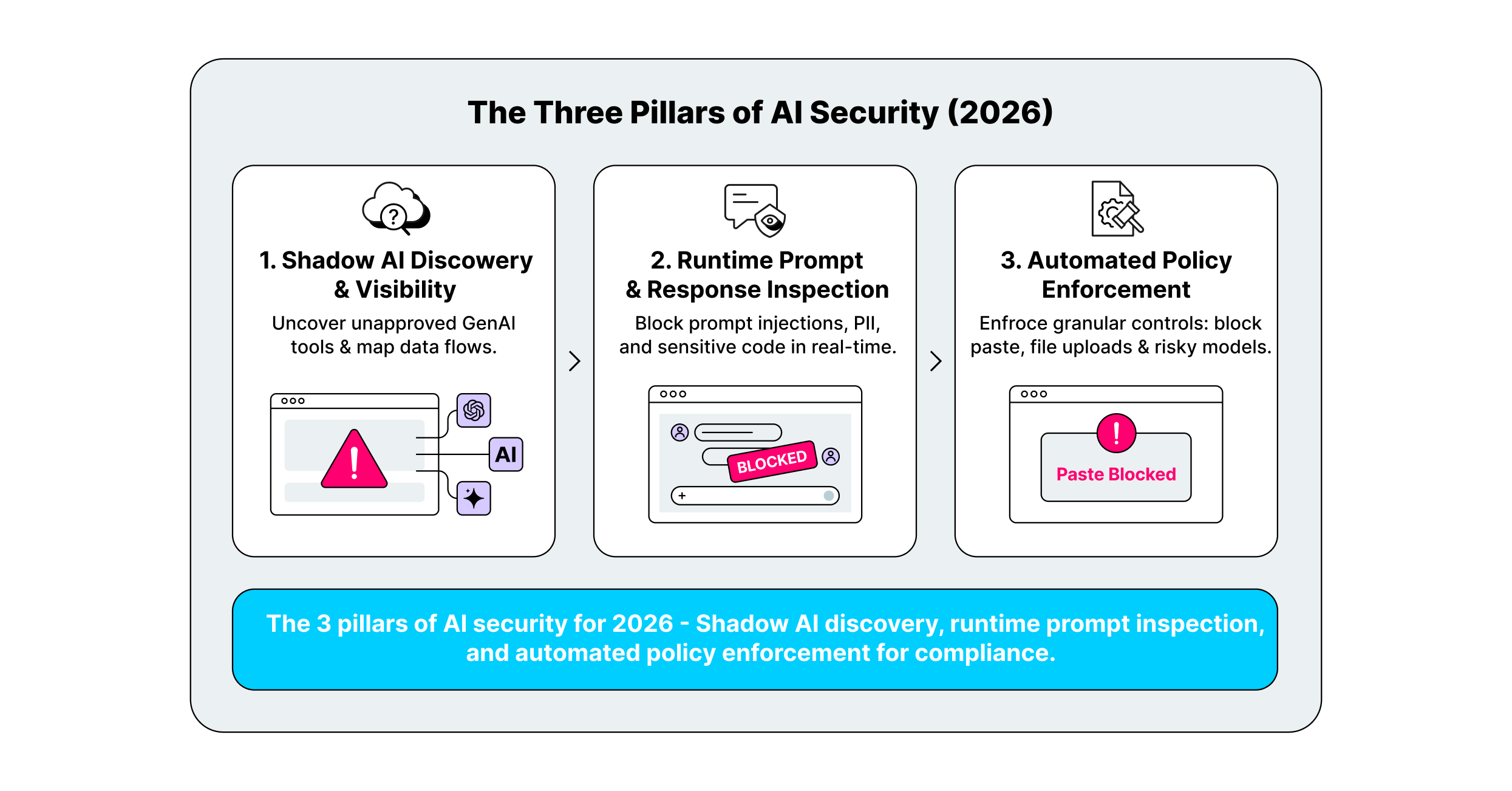

AI governance is no longer a theoretical framework; it is an active security workflow designed to manage the risks of generative AI consumption. These practices shift the focus from validating model weights to controlling the “point of use.” Security teams must now govern the exact moment an employee pastes customer data into a chatbot or installs an AI-powered browser extension.

The most critical gap in modern governance is the “last mile”: the browser interface where users interact with SaaS and GenAI tools. Traditional network defenses cannot see encrypted traffic inside a chat session, and API-based controls often react too late. Effective governance requires real-time visibility and enforcement directly within the employee’s workspace to prevent data leakage and block malicious inputs before they are processed.

Key AI Governance Trends to Watch in 2026

The shift toward browser-native security controls is the dominant trend for 2026. Security leaders are moving away from clunky network proxies that break user experience and toward lightweight browser extensions. These tools sit directly in the workflow, allowing organizations to monitor prompt context, inspect extension permissions, and redact sensitive data without rerouting traffic.

Another major development is the rise of identity-aware governance for autonomous AI agents. As “agentic AI” begins to act on behalf of users (booking meetings, writing code, or querying databases), static allowlists are failing. Governance strategies are evolving to enforce role-based access controls (RBAC) that limit what an AI agent can do based on the user’s specific privileges and the data’s sensitivity.
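The identity-aware check described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the role names, action names, sensitivity levels, and the `is_allowed` helper are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping; a real deployment would load this
# from an identity provider or policy store.
ROLE_PERMISSIONS = {
    "developer": {"write_code", "query_database"},
    "marketing": {"book_meeting"},
}

# Hypothetical sensitivity ceiling per role (higher = more sensitive data allowed).
ROLE_MAX_SENSITIVITY = {"developer": 2, "marketing": 1}


@dataclass(frozen=True)
class AgentRequest:
    user_role: str         # role of the human the agent acts for
    action: str            # what the agent wants to do
    data_sensitivity: int  # 0 = public, 1 = internal, 2 = confidential


def is_allowed(request: AgentRequest) -> bool:
    """Allow an action only if the delegating user's role permits it
    and the data involved is within that role's sensitivity ceiling."""
    allowed_actions = ROLE_PERMISSIONS.get(request.user_role, set())
    max_sensitivity = ROLE_MAX_SENSITIVITY.get(request.user_role, -1)
    return (
        request.action in allowed_actions
        and request.data_sensitivity <= max_sensitivity
    )
```

Unknown roles fall through to an empty permission set, so the sketch denies by default. An agent acting for a marketing user would be refused `query_database` even on public data, because the decision follows the user's privileges rather than a static list of approved tools.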

10 Best AI Governance Tools for 2026

Below are the top solutions enabling safe AI adoption through enforcement, visibility, and risk management.

| Tool | Key Focus | Best for |
| --- | --- | --- |
| LayerX | Browser-Based Enforcement | Closing the “last mile” security gap |
| Harmonic Security | Shadow AI Discovery | Gaining full visibility into AI adoption |
| Prompt Security | Prompt Injection Defense | Securing GenAI interactions from misuse |
| Lasso Security | Role-Based Access Control | Context-aware policy enforcement |
| Nightfall AI | Data Loss Prevention (DLP) | Preventing PII/IP leakage in real time |
| AIM Security | AI Asset Inventory | Centralized tracking of all AI tools |
| Witness AI | Automated Risk Scoring | Streamlining approval of safe AI tools |
| Knostic | Audit & Compliance Logging | Meeting regulatory requirements |
| Polymer | Employee Training & Awareness | Building a security-conscious culture |
| Lakera | Continuous Monitoring | Detecting anomalies & policy drift |

1. LayerX

LayerX is a browser security platform that operates as a lightweight extension, placing governance controls directly where users interact with AI. It addresses the “last mile” gap by monitoring every keystroke, paste action, and file upload in real time. This allows security teams to enforce granular policies, such as blocking the pasting of source code into ChatGPT or preventing the installation of high-risk AI extensions, without disrupting the user’s workflow or requiring a dedicated enterprise browser.

The platform excels at providing deep visibility into both sanctioned and “Shadow AI” usage across managed and BYOD devices. LayerX analyzes the context of browser interactions to distinguish between safe tasks and risky behaviors, ensuring that sensitive corporate data never leaves the browser environment unprotected. Its approach enables organizations to adopt GenAI tools safely by mitigating risks like data exfiltration and account takeovers at the source.

2. Harmonic Security

Harmonic Security focuses on solving the Shadow AI problem by identifying and categorizing every AI tool employees use, regardless of whether it has been formally approved. Instead of relying on static blocklists, Harmonic uses specialized small language models to analyze the intent and content of data transfers. This allows it to distinguish between a harmless query and a risky upload of regulated data, enabling a “safe-by-default” posture that empowers employees to use new tools without exposing the organization to risk.

The platform builds a comprehensive map of AI adoption across the enterprise, giving security leaders clear insight into which departments are using which tools. By understanding the business context of AI usage, Harmonic helps teams draft policies that support innovation while automatically flagging or blocking high-risk applications that do not meet security standards.

3. Prompt Security

Prompt Security specializes in defending against prompt injection attacks, a critical vulnerability where malicious inputs manipulate GenAI behavior. Their solution monitors the Document Object Model (DOM) and user inputs to detect attempts to jailbreak models or exfiltrate data via hidden commands. This focus makes them an essential layer of defense for organizations building or deploying public-facing GenAI applications where user input cannot be fully trusted.

Beyond injection defense, Prompt Security provides tools to sanitize inputs and verify outputs, ensuring that LLMs do not inadvertently generate harmful content or reveal system instructions. Their technology integrates into the development pipeline, helping engineering teams secure their AI features before they reach production.

4. Lasso Security

Lasso Security delivers contextual Role-Based Access Control (RBAC) for GenAI, ensuring that users can only access the models and data appropriate for their specific job function. Their platform moves beyond simple access logs to enforce policies based on the user’s identity, the data’s sensitivity, and the intended use case. This granular control prevents “access creep,” where employees retain access to powerful AI tools they no longer need.

The solution also monitors for anomalies in real time, such as a marketing employee suddenly querying a coding assistant for database credentials. By correlating user identity with behavioral patterns, Lasso helps organizations detect and stop internal misuse of AI tools before it results in a data breach.

5. Nightfall AI

Nightfall AI brings advanced Data Loss Prevention (DLP) to the AI era, using machine learning detectors trained on millions of samples to identify sensitive data with high precision. Their solution scans data in motion and at rest, detecting PII, health records, and secrets like API keys before they are uploaded to GenAI platforms. Nightfall’s detectors are tuned to understand context, significantly reducing false positives compared to traditional regex-based DLP tools.

For AI governance, Nightfall integrates with browser and cloud workflows to redact or block sensitive information in real time. This capability allows employees to use productivity tools like chatbots while ensuring that compliance mandates like GDPR and HIPAA are strictly followed, even in unstructured prompts.
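To make the redact-before-upload idea concrete, here is a deliberately simple Python sketch. It uses plain regular expressions, which is exactly the traditional approach the section contrasts with ML-based detection, so treat it as an illustration of the workflow rather than of Nightfall's detectors. The pattern set and the `redact_prompt` helper are assumptions for the example.

```python
import re

# Illustrative patterns only; these are assumptions for the sketch and are
# far simpler than the context-aware ML detectors described above.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Replace each match with a [REDACTED:<TYPE>] placeholder and
    return the redacted text plus the names of the detectors that fired."""
    findings = []
    redacted = prompt
    for name, pattern in PATTERNS.items():
        redacted, count = pattern.subn(f"[REDACTED:{name}]", redacted)
        if count:
            findings.append(name)
    return redacted, findings
```

Returning the list of fired detectors alongside the redacted text lets a policy layer decide whether to send the sanitized prompt, warn the user, or block the request entirely.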

6. AIM Security

AIM Security focuses on creating a complete AI Asset Inventory, effectively serving as an “AI Bill of Materials” for the enterprise. Their platform scans the IT environment to discover all deployed models, training datasets, and AI-integrated applications. This centralized view allows security teams to track the lifecycle of every AI asset, from procurement to decommissioning, ensuring no “zombie” models are left running without oversight.

By maintaining a real-time inventory, AIM Security helps organizations identify dependencies and potential supply chain risks. If a specific open-source model is found to have a vulnerability, administrators can instantly locate every instance of that model within their infrastructure and apply necessary patches or mitigations.
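The vulnerability lookup described above amounts to a filter over the inventory. The following Python sketch shows the idea under stated assumptions: the `AIAsset` fields and the `find_affected` helper are hypothetical and do not reflect AIM Security's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIAsset:
    name: str     # hypothetical deployment name
    model: str    # underlying model identifier
    version: str  # deployed model version
    owner: str    # responsible team


def find_affected(inventory: list[AIAsset], model: str,
                  versions: set[str]) -> list[AIAsset]:
    """Return every inventoried asset that runs a vulnerable model version."""
    return [a for a in inventory if a.model == model and a.version in versions]
```

Recording an owner on each asset means the result of the lookup doubles as a notification list for the teams that need to patch or retire the affected deployments.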

7. Witness AI

Witness AI provides automated risk scoring to streamline the evaluation and approval of new AI tools. Their platform assigns a dynamic risk score to applications based on their terms of service, data handling practices, and compliance certifications. This allows security teams to quickly vet requests for new software, replacing lengthy manual reviews with data-driven decisions.

The platform also continuously monitors the risk posture of approved tools, alerting administrators if a vendor changes its privacy policy or suffers a security incident. This ongoing assessment ensures that the organization’s approved software list remains accurate and secure over time.
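One simple way to picture automated risk scoring is a weighted average over a few vendor attributes. The Python sketch below is an assumption-laden illustration: the factor names, the weights, and the `risk_score` helper are invented for the example and are not Witness AI's scoring model.

```python
# Hypothetical weights; each factor is scored from 0.0 (safe) to 1.0 (risky).
WEIGHTS = {
    "terms_of_service": 0.3,
    "data_handling": 0.5,
    "missing_certifications": 0.2,
}


def risk_score(factors: dict[str, float]) -> float:
    """Weighted sum of factor scores, scaled to a 0-100 range.
    Factors that were not assessed count as worst case (1.0)."""
    for name, value in factors.items():
        if name not in WEIGHTS:
            raise KeyError(f"unknown factor: {name}")
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    total = sum(WEIGHTS[name] * factors.get(name, 1.0) for name in WEIGHTS)
    return round(100 * total, 1)
```

Treating unassessed factors as worst case keeps an incomplete vendor review from producing a misleadingly low score. Re-running the function whenever a vendor's policy or certification status changes gives the continuous reassessment the section describes.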

8. Knostic

Knostic addresses the challenge of audit and authorization by logging exactly who is accessing what knowledge within an organization’s AI systems. Their solution answers the “need to know” question, ensuring that GenAI tools do not bypass existing file permissions to surface confidential documents to unauthorized users. Knostic generates detailed audit trails that map prompts to the specific documents used to generate the answer.

This level of transparency is vital for regulated industries that must demonstrate strict control over information flow. Knostic’s authorization controls prevent “knowledge leakage,” where an LLM inadvertently reveals sensitive strategy decisions or HR data to employees who should not have access to that information.
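The combination of need-to-know filtering and audit logging can be sketched as follows. This Python example is illustrative only: the ACL table, group names, record fields, and the `authorize_and_log` helper are assumptions, not Knostic's API.

```python
import json
from datetime import datetime, timezone

# Hypothetical document ACLs: document id -> groups allowed to read it.
DOCUMENT_ACL = {
    "hr/salaries.xlsx": {"hr"},
    "wiki/onboarding.md": {"hr", "engineering", "marketing"},
}


def authorize_and_log(user: str, groups: set[str], prompt: str,
                      retrieved: list[str]) -> tuple[list[str], str]:
    """Drop retrieved documents the user may not read, and return the
    permitted documents plus a JSON audit record of the decision."""
    # Documents without an ACL entry are denied by default.
    permitted = [d for d in retrieved if groups & DOCUMENT_ACL.get(d, set())]
    denied = [d for d in retrieved if d not in permitted]
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "prompt": prompt,
        "documents_used": permitted,
        "documents_denied": denied,
    }
    return permitted, json.dumps(record)
```

Only the permitted documents would be passed to the model as context, while the audit record ties the prompt to both the documents used and the ones withheld, which is the mapping an auditor would need to review.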

9. Polymer

Polymer takes a human-centric approach to governance by using “nudges” and real-time training to build a security-conscious culture. Instead of simply blocking a risky action, Polymer’s system intervenes with a pop-up explaining why the action is risky and suggesting a safer alternative. This “in-the-moment” education helps reduce alert fatigue and encourages employees to become active participants in the security process.

Their platform is particularly effective for organizations looking to reduce the burden on their SOC teams. By empowering users to self-correct low-risk mistakes, Polymer allows security analysts to focus on genuine threats while steadily improving the organization’s overall data handling habits.

10. Lakera

Lakera specializes in continuous monitoring and “red teaming” for AI applications to detect policy drift and adversarial attacks. Their platform, Lakera Guard, acts as a firewall for LLMs, sitting between the user and the model to filter out prompt injections, jailbreaks, and toxic inputs. This continuous testing ensures that AI models remain aligned with safety guidelines even as attackers evolve their techniques.

Lakera also provides a database of known prompts and attack vectors, allowing organizations to benchmark their defenses against the latest threats. This proactive stance helps developers identify weaknesses in their AI applications before they are deployed to production, reducing the risk of a public security failure.

How to Choose the Best AI Governance Provider

- Prioritize visibility into “Shadow AI” to understand the full scope of unmanaged tools your employees are already using.

- Select a solution with browser-native enforcement to secure data at the point of input without routing traffic through complex proxies.

- Ensure the tool offers granular, identity-based controls so you can enable different access levels for developers, HR, and marketing.

- Look for automated remediation capabilities that can redact sensitive data in real time rather than just blocking the entire application.

- Verify that the provider supports continuous compliance auditing to satisfy regulatory standards like ISO 42001 and the EU AI Act.

FAQs

What is AI governance in practical security terms?

In practical terms, AI governance is the set of technical controls and policies that dictate how employees and applications interact with generative AI. It involves monitoring prompts for sensitive data, verifying the security posture of AI vendors, and ensuring that AI-generated outputs are accurate and safe to use.

How is AI governance different from AI model security?

AI model security focuses on protecting the weights, parameters, and infrastructure of the model itself from theft or tampering. AI governance is broader and focuses on how the model is used: ensuring that the data feeding into it is compliant, the users accessing it are authorized, and the business risks of deployment are managed.

What should we control first: prompts, files, or tool access?

You should control visibility and tool access first. You cannot govern what you cannot see, so identifying which tools are in use (Shadow AI) is the foundational step. Once visibility is established, you can implement controls for prompts and file uploads to prevent data leakage.

Do we need a dedicated enterprise browser for AI governance?

No, you do not need a dedicated enterprise browser. Modern browser security platforms like LayerX function as extensions that sit on top of standard browsers like Chrome and Edge. This allows you to apply enterprise-grade governance and security controls without forcing users to switch to a new, unfamiliar browser interface.

How do you measure AI governance effectiveness?

Effectiveness is measured by the reduction in “Shadow AI” incidents, the speed of approving new safe tools, and the number of prevented data leaks. Successful governance should also be tracked by user adoption rates; if employees are bypassing controls to do their jobs, the governance strategy needs adjustment.