The rapid adoption of Generative AI (GenAI) has introduced a complex layer of risk that traditional security perimeters cannot adequately address. For modern enterprises, the challenge is no longer just about securing the network; it is about securing the browser, the primary workspace where employees interact with AI tools. As organizations race to apply these advanced technologies, the need for comprehensive AI security monitoring has moved from a “nice-to-have” to a critical operational requirement.

Security teams today face a dual challenge. They must enable the productivity gains of GenAI while simultaneously neutralizing the risks of data leakage and identity theft. This balance requires a departure from legacy network-based controls. It demands a strategy that operationalizes AI governance monitoring directly at the point of interaction: the browser.

The Blind Spot: Why Traditional Network Security Fails GenAI

For decades, network security and monitoring focused on the perimeter. Firewalls, Secure Web Gateways (SWGs), and Cloud Access Security Brokers (CASBs) were designed to inspect traffic flowing in and out of the corporate network. GenAI interactions, however, usually take place inside encrypted HTTPS sessions, where the payload (the actual conversation between the user and the AI model) often remains opaque to network-level inspection.

Traditional tools struggle to differentiate between a harmless query like “Draft a marketing email” and a high-risk prompt such as “Debug this proprietary code snippet.” To a firewall, both look like generic HTTPS traffic to openai.com or anthropic.com. This visibility gap creates a dangerous blind spot. Security teams are left guessing, unable to see the context of the data leaving their organization.

LayerX addresses this fundamental flaw. By running both the sensor and the enforcement point inside the browser extension itself, LayerX gains cleartext visibility into every interaction. This allows for granular AI monitoring that captures the intent and content of user actions before encryption occurs.

Visualizing the Visibility Gap

To understand the magnitude of this issue, compare the detection capabilities of legacy solutions versus a browser-centric approach. While network tools effectively filter known malicious domains, they fail to inspect the content of sanctioned sessions.
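To make the comparison concrete, here is a minimal Python sketch, assuming a simplified model in which a perimeter tool sees only the destination host of an HTTPS request while a browser-side sensor also sees the prompt text. The keyword list and the `Interaction`, `network_verdict`, and `browser_verdict` names are hypothetical stand-ins for a real classifier, not LayerX's implementation.

```python
from dataclasses import dataclass

# Hypothetical list of AI destinations, taken from the examples in the text.
KNOWN_AI_HOSTS = {"openai.com", "anthropic.com"}

# Hypothetical markers of sensitive content; a real classifier would be far richer.
RISKY_TERMS = ("proprietary", "confidential", "api_key", "password")


@dataclass(frozen=True)
class Interaction:
    host: str    # visible at the network layer (e.g. via DNS or TLS SNI)
    prompt: str  # visible in cleartext only inside the browser, before encryption


def network_verdict(event: Interaction) -> str:
    """A perimeter tool can only reason about the destination, not the payload."""
    return "known-ai-domain" if event.host in KNOWN_AI_HOSTS else "other-domain"


def browser_verdict(event: Interaction) -> str:
    """A browser-side sensor can also classify the prompt itself."""
    text = event.prompt.lower()
    return "high-risk" if any(term in text for term in RISKY_TERMS) else "low-risk"


harmless = Interaction("openai.com", "Draft a marketing email")
risky = Interaction("openai.com", "Debug this proprietary code snippet")

print(network_verdict(harmless), network_verdict(risky))  # identical verdicts
print(browser_verdict(harmless), browser_verdict(risky))  # low-risk high-risk
```

Both prompts receive the same network verdict, which is exactly the blind spot described above; only the browser-side view tells them apart.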

Unveiling the Shadow: AI Governance Monitoring for the Modern Enterprise

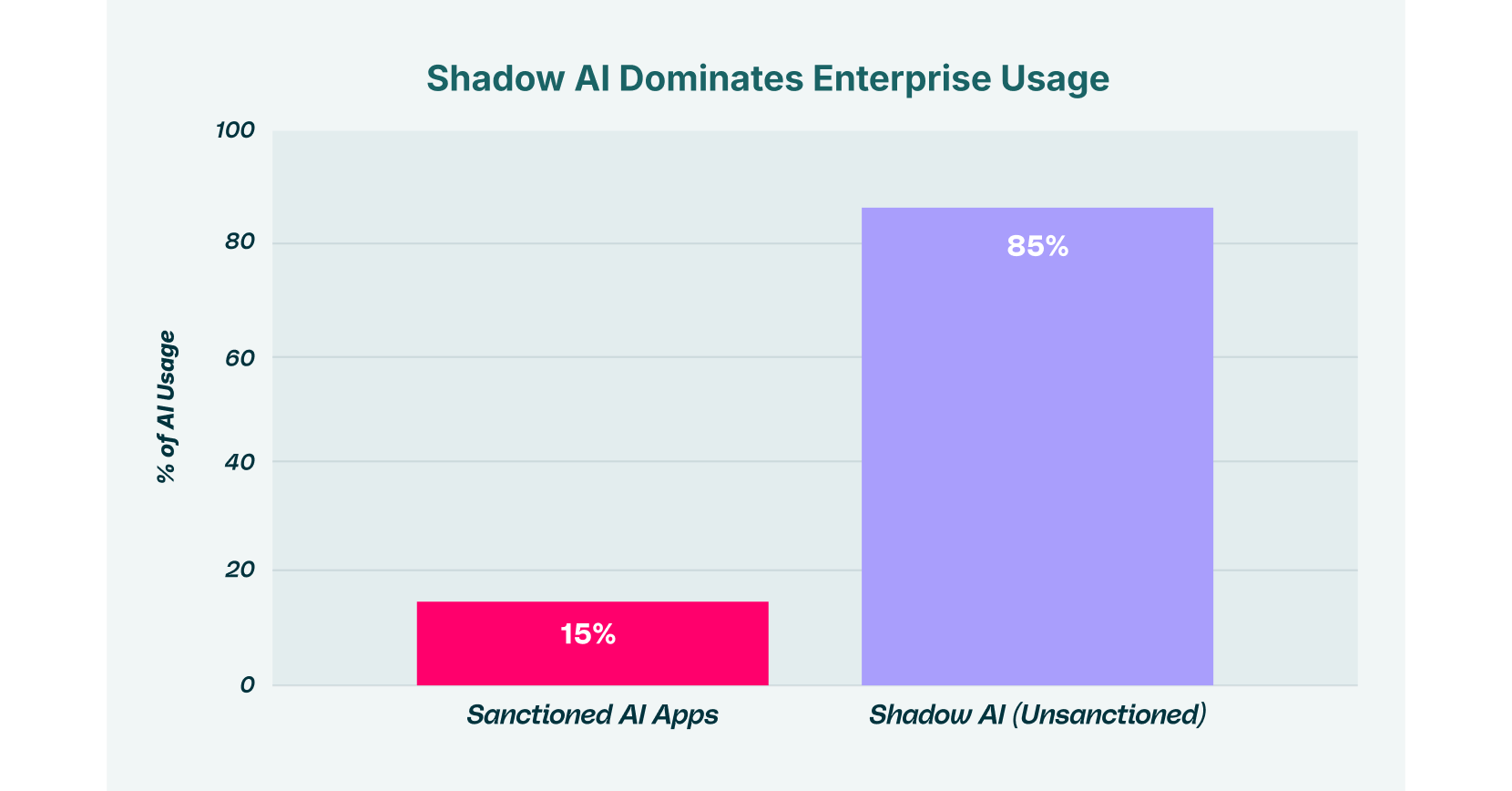

Shadow IT has always been a headache for CISOs. Shadow AI is a migraine. Employees, eager to improve efficiency, often sign up for unapproved AI tools using personal email accounts. These “Shadow SaaS ecosystems” bypass corporate Single Sign-On (SSO) and identity providers, leaving the organization with no audit trail and no control over where its data resides.

Effective AI governance monitoring must start with discovery. You cannot govern what you cannot see. LayerX’s browser extension automatically catalogs every AI application accessed by the workforce. It distinguishes between corporate-sanctioned accounts and personal accounts, flagging instances where an employee might be using a personal ChatGPT account to process corporate data.
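A simplified sketch of the discovery step follows, assuming the sensor can observe the destination host and the email address used to sign in to each AI app. The corporate domain, the host-to-app mapping, and the `catalog` function are hypothetical; exact domain matching is a deliberate simplification that ignores subdomains and aliases.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical configuration for illustration.
CORPORATE_DOMAIN = "example.com"
AI_APP_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


@dataclass(frozen=True)
class Visit:
    user: str
    host: str
    login_email: str  # account used to sign in to the app, as observed in the browser


def account_type(login_email: str) -> str:
    # Exact match on the email domain; subdomains and aliases are ignored here.
    domain = login_email.rsplit("@", 1)[-1].lower()
    return "corporate" if domain == CORPORATE_DOMAIN else "personal"


def catalog(visits):
    """Build an inventory of AI apps by account type and flag personal-account use."""
    inventory = Counter()
    flagged = []
    for visit in visits:
        app = AI_APP_HOSTS.get(visit.host)
        if app is None:
            continue  # not a known AI application
        kind = account_type(visit.login_email)
        inventory[(app, kind)] += 1
        if kind == "personal":
            flagged.append((visit.user, app, visit.login_email))
    return inventory, flagged


visits = [
    Visit("alice", "chatgpt.com", "alice@example.com"),
    Visit("bob", "chatgpt.com", "bob.home@gmail.com"),
    Visit("bob", "claude.ai", "bob@example.com"),
    Visit("carol", "intranet.example.com", "carol@example.com"),
]
inventory, flagged = catalog(visits)
print(flagged)  # [('bob', 'ChatGPT', 'bob.home@gmail.com')]
```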

This level of insight is crucial. It transforms AI monitoring tools from passive reporting mechanisms into active governance engines. Instead of finding out about a data leak months later, security teams can see the growth of shadow AI in real time and take immediate action to block or sanction specific tools.

AI Monitoring Tools: From Passive Observation to Active Defense

The market is flooded with AI monitoring tools that promise visibility but deliver only noise. True security requires context. It is not enough to know that a user visited an AI site; you must know what they did there.

LayerX introduces “Full Conversation Tracking,” a capability that captures the full context of GenAI interactions. This includes the user’s prompt, the AI’s response, and any files uploaded for analysis. This data is essential for forensic investigations and compliance audits. If an incident occurs, the security team can reconstruct the entire session to determine exactly what information was exposed.
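One way to model the captured data is sketched below: each conversation holds timestamped turns of prompt, response, and attached file names, which is enough to rebuild a readable transcript and list every file that was exposed. The `Conversation` and `Turn` classes are hypothetical illustrations, not LayerX's data schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Turn:
    timestamp: datetime
    prompt: str
    response: str
    uploaded_files: list[str] = field(default_factory=list)


@dataclass
class Conversation:
    user: str
    app: str
    turns: list[Turn] = field(default_factory=list)

    def record(self, prompt: str, response: str,
               uploaded_files: list[str] | None = None,
               timestamp: datetime | None = None) -> None:
        when = timestamp if timestamp is not None else datetime.now(timezone.utc)
        self.turns.append(Turn(when, prompt, response, list(uploaded_files or [])))

    def exposed_files(self) -> list[str]:
        """Every uploaded file name, in chronological order, without duplicates."""
        ordered = sorted(self.turns, key=lambda t: t.timestamp)
        return list(dict.fromkeys(name for t in ordered for name in t.uploaded_files))

    def transcript(self) -> str:
        """Rebuild the session in chronological order for an investigation."""
        lines = []
        for turn in sorted(self.turns, key=lambda t: t.timestamp):
            lines.append(f"[{turn.timestamp.isoformat()}] {self.user} -> {self.app}: {turn.prompt}")
            for name in turn.uploaded_files:
                lines.append(f"    attached: {name}")
            lines.append(f"    response: {turn.response}")
        return "\n".join(lines)


# Turns may arrive out of order; the transcript sorts them by timestamp.
conv = Conversation(user="dev-42", app="ChatGPT")
conv.record("Optimize this function", "Here is a faster version...",
            ["billing_service.py"], timestamp=datetime(2024, 5, 1, 10, 5, tzinfo=timezone.utc))
conv.record("Hi", "Hello! How can I help?",
            timestamp=datetime(2024, 5, 1, 10, 0, tzinfo=timezone.utc))
print(conv.transcript())
```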

Consider a hypothetical scenario. A developer is working against a deadline. To speed things up, they copy a block of source code containing hardcoded API keys and paste it into a GenAI chatbot for optimization.

- Without LayerX: The traffic is encrypted. The DLP solution sees nothing. The code leaks.

- With LayerX: The browser extension analyzes the clipboard content in real time. It recognizes the pattern of an API key and identifies the destination as an AI tool. The action is blocked instantly, and the user receives a coaching pop-up explaining the policy violation.
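The detection step in this scenario can be sketched as a pattern match on clipboard text that runs only when the paste destination is an AI tool. The patterns are illustrative (AWS access key IDs start with `AKIA`, GitHub classic personal access tokens with `ghp_`), and the host list, `evaluate_paste`, and the coaching message are hypothetical; production DLP rule sets are broader and tuned to limit false positives.

```python
import re
from dataclasses import dataclass, field

# Illustrative secret patterns only.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "generic_assignment": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token)\s*[:=]\s*['\"][^'\"]{12,}['\"]"
    ),
}

# Hypothetical list of destinations treated as AI tools.
AI_TOOL_HOSTS = {"chatgpt.com", "claude.ai"}


@dataclass
class PasteDecision:
    allowed: bool
    findings: list = field(default_factory=list)
    coaching_message: str = ""


def evaluate_paste(clipboard_text: str, destination_host: str) -> PasteDecision:
    """Block a paste into an AI tool if the clipboard appears to contain credentials."""
    if destination_host not in AI_TOOL_HOSTS:
        return PasteDecision(allowed=True)  # this sketch only scopes AI destinations
    findings = [name for name, pattern in SECRET_PATTERNS.items()
                if pattern.search(clipboard_text)]
    if not findings:
        return PasteDecision(allowed=True)
    return PasteDecision(
        allowed=False,
        findings=findings,
        coaching_message=("This paste was blocked because it appears to contain credentials ("
                          + ", ".join(findings)
                          + "). Remove secrets before sharing code with AI tools."),
    )


snippet = 'client = Client(api_key="sk_live_0123456789abcdef")\nAWS_KEY = "AKIAIOSFODNN7EXAMPLE"'
decision = evaluate_paste(snippet, "chatgpt.com")
print(decision.allowed, decision.findings)
```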

This is the difference between logging a disaster and preventing one.

Preventing Data Exfiltration in the GenAI Era

Exfiltration through GenAI tools is a subtle threat. It does not always look like a malicious attack; often, it is an insider threat born of negligence. Employees do not intend to leak data; they simply want to get their work done. The result, however, is the same: sensitive PII, intellectual property, and financial data leave the organization's control and, depending on each provider's data-use terms, may end up in the training data of public AI models.

To combat this, organizations must operationalize AI security monitoring that focuses on data movement. LayerX defends the browser-to-cloud attack surface: by monitoring key events (copying, pasting, typing, and file uploading), the extension can intervene at the exact moment of risk.
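The sketch below illustrates a single policy hook applied to all four event types, assuming each event carries its kind, the page host, and the relevant text (for uploads, text extracted from the file). Payment card numbers serve as the example data type and are validated with the Luhn checksum to cut false positives. The event model, the `handle` function, and the choice to record rather than block sensitive copies are assumptions made for illustration.

```python
import re
from dataclasses import dataclass
from enum import Enum


class EventKind(Enum):
    COPY = "copy"
    PASTE = "paste"
    TYPE = "type"
    UPLOAD = "upload"


@dataclass(frozen=True)
class BrowserEvent:
    kind: EventKind
    host: str  # page where the event happened
    text: str  # selected, pasted, or typed text, or text extracted from an uploaded file


# 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum: double every second digit from the right."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def contains_card_number(text: str) -> bool:
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            return True
    return False


def handle(event: BrowserEvent, ai_hosts: set) -> str:
    """Return 'allow', 'monitor', or 'block' for a single browser event."""
    sensitive = contains_card_number(event.text)
    if event.kind is EventKind.COPY:
        # Assumption: copies are recorded so a later paste can be traced, not blocked.
        return "monitor" if sensitive else "allow"
    if sensitive and event.host in ai_hosts:
        # Paste, typing, or upload of card data into an AI tool.
        return "block"
    return "allow"


ai_hosts = {"chatgpt.com"}
print(handle(BrowserEvent(EventKind.PASTE, "chatgpt.com", "card 4111 1111 1111 1111"), ai_hosts))
```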

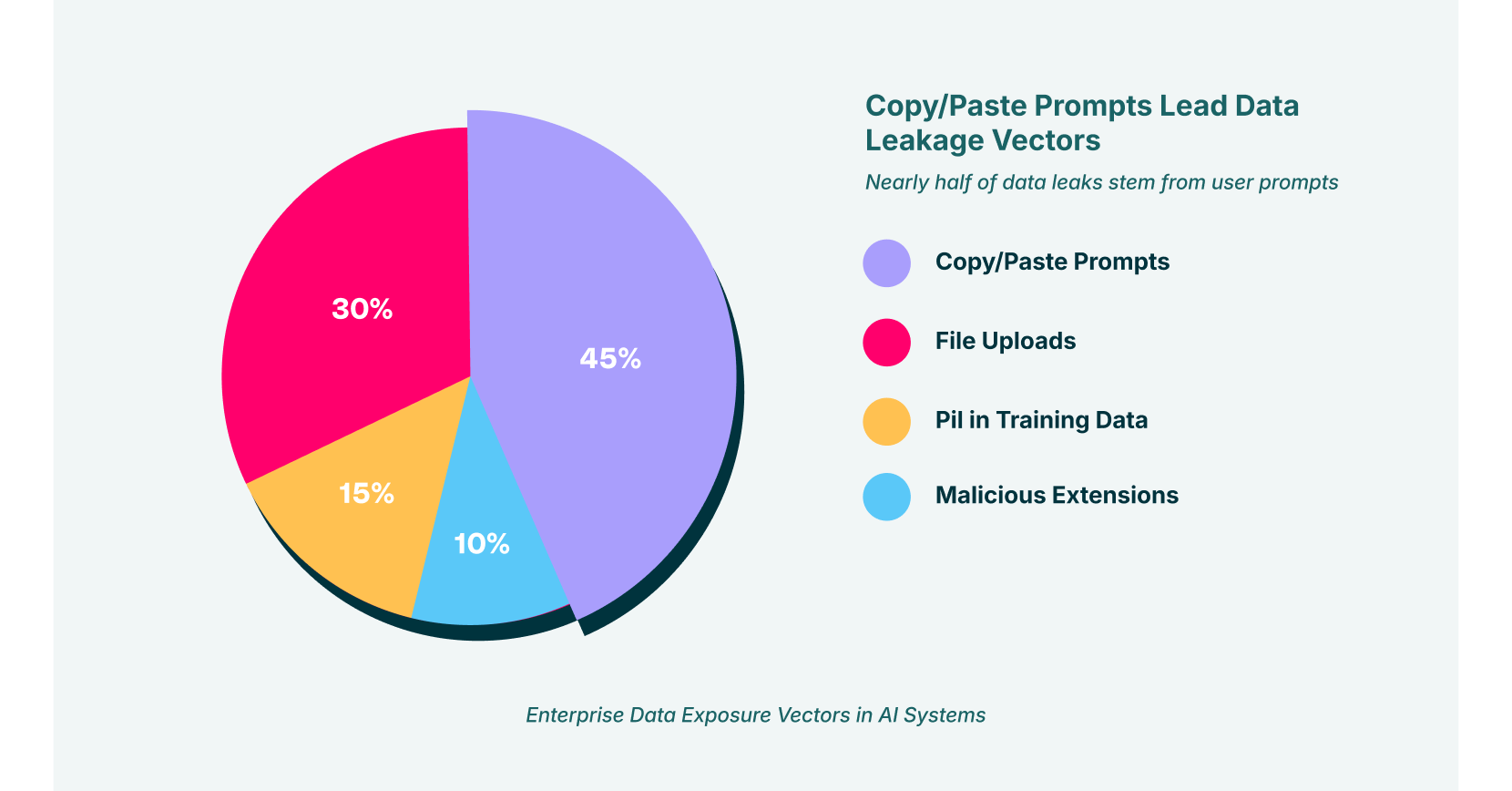

Analyzing the Vectors of Leakage

Data does not leak on its own. It travels through specific vectors. Understanding these vectors is the first step toward closing them.

Operationalizing Risk-Based Policies

A “block all” approach is unsustainable. It stifles innovation and encourages employees to find dangerous workarounds. A comprehensive AI monitoring strategy must be adaptive.

LayerX allows for granular policy creation. You might allow the marketing team to use GenAI for content generation, but block them from uploading customer lists. You might permit the engineering team to use specific, enterprise-licensed AI coding assistants but block all access to public, free-tier models.
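The two examples above can be expressed as an ordered, first-match rule table. This is a minimal sketch: the group names, the `enterprise`/`public` app tiers, the `customer_list` data label, the `decide` function, and the default-deny fallback are assumptions for illustration, not LayerX's policy syntax.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    group: str       # user group, or "*" for any
    app_tier: str    # "enterprise" (licensed) or "public" (free tier), or "*"
    action: str      # "prompt" or "upload", or "*"
    data_label: str  # classification of the data involved, or "*"
    decision: str    # "allow" or "block"


# Ordered rules: first match wins, so narrower rules come before broader ones.
RULES = [
    Rule("marketing", "*", "upload", "customer_list", "block"),
    Rule("marketing", "*", "*", "*", "allow"),
    Rule("engineering", "enterprise", "*", "*", "allow"),
    Rule("engineering", "public", "*", "*", "block"),
]
DEFAULT_DECISION = "block"  # assumption: deny when no rule matches


def _matches(value: str, pattern: str) -> bool:
    return pattern == "*" or value == pattern


def decide(group: str, app_tier: str, action: str, data_label: str = "unlabeled") -> str:
    for rule in RULES:
        if (_matches(group, rule.group)
                and _matches(app_tier, rule.app_tier)
                and _matches(action, rule.action)
                and _matches(data_label, rule.data_label)):
            return rule.decision
    return DEFAULT_DECISION


print(decide("marketing", "public", "prompt"))                   # allow
print(decide("marketing", "public", "upload", "customer_list"))  # block
print(decide("engineering", "public", "prompt"))                 # block
```

Keeping the rules ordered and first-match makes exceptions (such as the customer-list block) easy to read, at the cost of requiring care when inserting new rules.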

This adaptive approach ensures that security enables business goals rather than hindering them. It follows the zero-trust philosophy: trust no interaction implicitly, verify every data transfer, and enforce least-privilege access dynamically.

Integrating AI for Network Security and Monitoring

While the browser is the new perimeter, it must coexist with existing infrastructure. AI for network security and monitoring is evolving to ingest telemetry from the browser. LayerX integrates with SIEM and SOAR platforms, feeding them high-fidelity data that network sensors miss.

This integration creates a unified security posture. The browser extension handles the “last mile” of user interaction, while network tools monitor for anomalies in traffic volume or connection patterns. Together, they form a multi-layered defense that is far more effective than either component in isolation.

For example, if LayerX detects a user repeatedly attempting to bypass DLP controls to upload files to a shadow AI site, it can trigger a high-severity alert in the SOC. The alert carries rich context (user identity, application name, file type, and the specific data involved), allowing analysts to respond with precision.
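A minimal sketch of this escalation logic, assuming blocked uploads arrive as events with a timestamp: count each user's blocked attempts in a sliding window and emit a JSON alert once a threshold is reached. The threshold, window length, field names, and the `BypassDetector` class are hypothetical; forwarding the JSON to a particular SIEM is omitted because it depends on that platform's ingestion API.

```python
import json
from collections import defaultdict, deque
from dataclasses import asdict, dataclass

THRESHOLD = 3         # hypothetical: blocked attempts before escalating
WINDOW_SECONDS = 600  # hypothetical: 10-minute sliding window


@dataclass(frozen=True)
class BlockedUpload:
    timestamp: float   # epoch seconds
    user: str
    app: str
    file_type: str
    data_types: tuple  # e.g. ("customer PII",)


class BypassDetector:
    def __init__(self, threshold: int = THRESHOLD, window: float = WINDOW_SECONDS):
        self.threshold = threshold
        self.window = window
        self.history = defaultdict(deque)  # user -> recent blocked uploads

    def observe(self, event: BlockedUpload):
        """Return a JSON alert once a user reaches the threshold within the window, else None."""
        recent = self.history[event.user]
        recent.append(event)
        # Drop events that fell out of the sliding window.
        while recent and event.timestamp - recent[0].timestamp > self.window:
            recent.popleft()
        if len(recent) < self.threshold:
            return None
        alert = {
            "severity": "high",
            "rule": "repeated_dlp_bypass_attempts",
            "user": event.user,
            "attempts": len(recent),
            "window_seconds": self.window,
            "events": [asdict(e) for e in recent],  # app, file type, data types per attempt
        }
        recent.clear()  # reset so the same burst does not alert repeatedly
        return json.dumps(alert)


detector = BypassDetector()
for t in (0, 60, 120):
    result = detector.observe(BlockedUpload(t, "dave", "shadow-ai.example", "xlsx", ("customer PII",)))
print(result is not None)  # True: the third attempt within 10 minutes triggers the alert
```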

What Is Next for Browser Detection & Response?

The era of trusting the browser blindly is over. As GenAI becomes deeply embedded in enterprise workflows, the browser has become the most critical control point in the security architecture. Implementing robust AI security monitoring is the only way to navigate this landscape safely.

LayerX provides the tools necessary to operationalize this strategy. By combining deep visibility, real-time governance, and adaptive enforcement, it empowers organizations to embrace GenAI without fear. It turns the browser from a liability into a secure, managed workspace.

Ultimately, effective security is about visibility. With AI governance monitoring powered by LayerX, you can see the shadow, stop the leak, and secure the future of work.