Generative AI adoption is accelerating, but legacy data protection cannot secure the nuances of LLM interactions. This guide compares the top generative AI security tools to help enterprise leaders enable innovation while maintaining strict governance over sensitive data.

What Are Generative AI Security Tools and Why They Matter

Generative AI security tools are specialized platforms designed to secure the usage of Large Language Models (LLMs) and AI applications. Unlike traditional firewalls, these generative AI security solutions analyze the context of conversational data, allowing them to detect and block the leakage of PII or intellectual property in real-time. They provide critical visibility into “Shadow AI,” ensuring that employees do not unknowingly expose enterprise assets to public models.

For modern enterprises, these platforms differ from standard security stacks by offering granular control over prompts and responses. They prevent prompt injection attacks and ensure compliance with privacy regulations without requiring a complete ban on productivity-enhancing AI tools. By deploying these defenses, organizations can safely integrate GenAI into their workflows while mitigating the risks of data exfiltration and model manipulation.

Key Generative AI Security Trends to Watch in 2026

The shift from blocking to “secure enablement” defines the 2026 landscape. Security teams are moving away from banning AI tools entirely and are instead adopting platforms that allow safe usage through real-time data redaction. This approach ensures that employees can utilize powerful AI assistants for productivity without accidentally sharing sensitive customer data or proprietary code.

“Agentic AI” defense is another emerging priority. As attackers begin to use AI agents to automate complex social engineering and vulnerability scans, enterprises are countering with defensive AI agents. These autonomous systems can detect behavioral anomalies and respond to threats faster than human analysts, creating a dynamic shield against automated attacks.

Finally, context-aware access control is becoming standard. It is no longer sufficient to grant binary access to AI models; modern generative AI security solutions now enforce “need-to-know” policies dynamically. This ensures that a marketing employee cannot coerce an internal AI model into revealing financial data, maintaining strict internal data barriers.

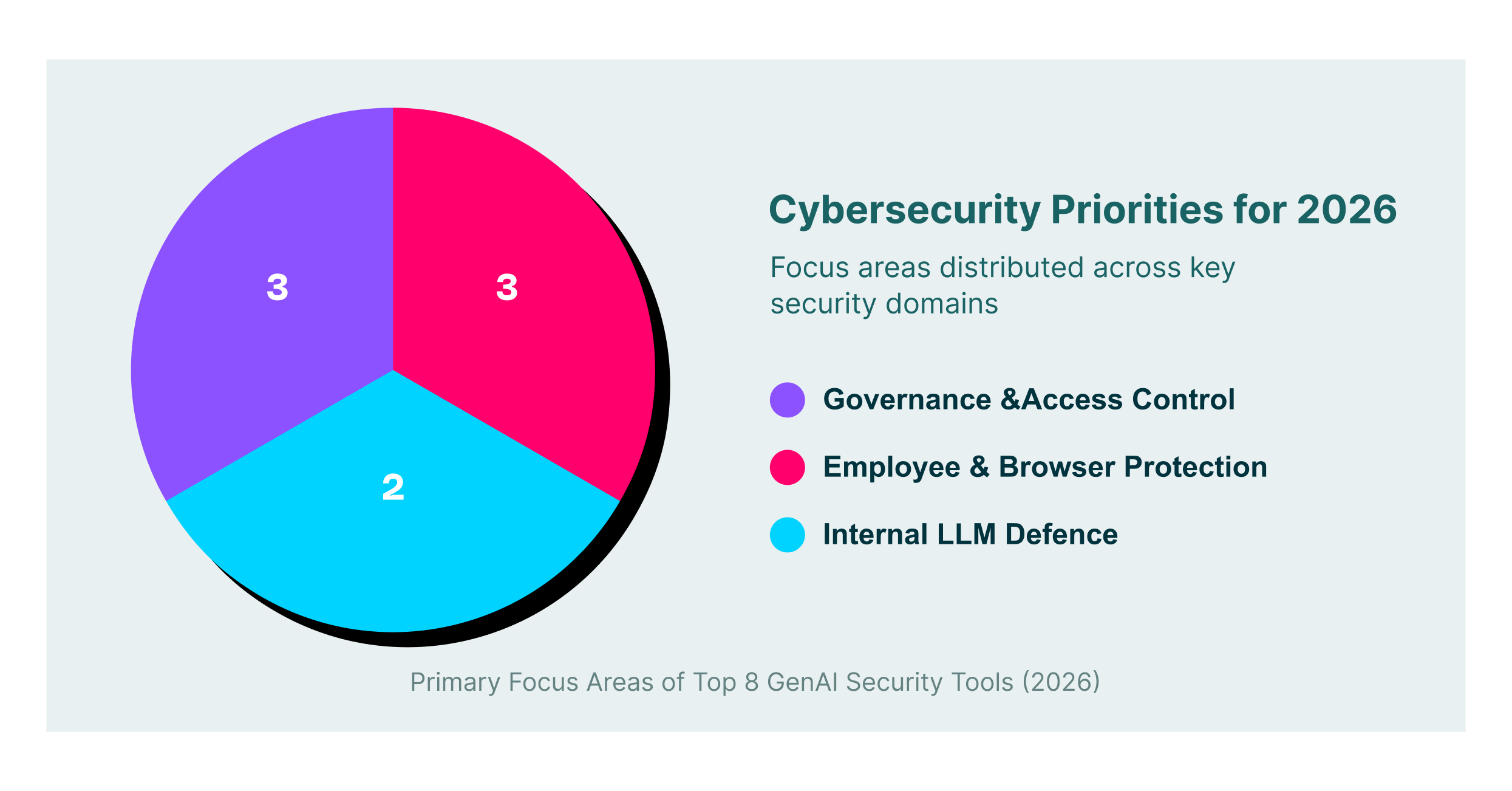

8 Best Generative AI Security Tools for 2026

Below is a comparison of the leading platforms, starting with LayerX. These vendors offer distinct approaches to securing the rapidly expanding AI attack surface.

| Platform | Key Capabilities | Best for |

| LayerX | Browser-based DLP, real-time prompt filtering, and shadow AI visibility | Enterprise browser security & DLP |

| Prompt Security | Shadow AI discovery, prompt injection protection, data redaction | Securing employee GenAI usage |

| AIM Security | GenAI risk assessment, compliance management, secure AI deployment | Microsoft 365 Copilot security |

| Lakera | Real-time threat detection, AI application security, prompt defense | Protecting internal AI applications |

| Harmonic Security | Zero-touch data protection, user nudges, risk assessment | Automated compliance monitoring |

| Lasso Security | Shadow LLM detection, context-based access control, and real-time blocking | LLM governance & oversight |

| Nightfall AI | AI-powered DLP, data redaction, browser & endpoint agents | Preventing data exfiltration |

| Knostic | Access control governance, need-to-know policy enforcement | Managing AI access permissions |

1. LayerX

LayerX is a browser security platform that operates as a lightweight extension, transforming any commercial browser into a secure, managed workspace. It delivers deep visibility into all GenAI interactions, enabling security teams to detect and block the pasting of sensitive source code or PII into tools like ChatGPT before data leaves the endpoint.

The platform goes beyond simple blocking by enabling granular governance of “Shadow AI” usage. It identifies unsanctioned AI tools and enforces policies based on user identity and risk level, ensuring that generative AI security solutions are applied at the exact point of interaction. This secures the “last mile” of data transfer, allowing safe AI adoption without disrupting user productivity.

2. Prompt Security

Prompt Security provides a comprehensive platform for securing every stage of GenAI adoption, from casual employee usage to deep integration of LLMs in internal products. Its robust discovery capabilities give IT teams a full inventory of Shadow AI tools in use, accompanied by risk scoring that helps prioritize security efforts.

The platform excels at preventing prompt injection attacks and enforcing data privacy through real-time redaction. By inspecting prompts and responses, Prompt Security ensures that confidential enterprise data is sanitized before reaching external providers, making it a reliable choice among generative AI security companies for safer chatbot deployment.

3. AIM Security

AIM Security focuses on creating a secure environment for enterprise GenAI adoption, with strong tools for risk assessment and governance. The platform helps CISOs discover and map all GenAI applications across the organization, providing a clear picture of exposure to compliance and security risks.

A major advantage of AIM Security is its ability to secure large-scale deployments like Microsoft 365 Copilot. It offers features that validate the security of AI agents and prevent data leaks through strict policy enforcement, ensuring that the operational benefits of AI do not compromise regulatory standards.

4. Lakera

Lakera distinguishes itself with “Lakera Guard,” a real-time protection layer built to defend AI applications against adversarial attacks. It maintains an extensive database of known prompt injection techniques and jailbreaks, allowing it to block malicious inputs that attempt to manipulate LLM behavior.

Beyond threat detection, Lakera offers strong data loss prevention capabilities tailored for API-based AI interactions. It is particularly effective for engineering teams building proprietary GenAI features, as it integrates directly into the development lifecycle to prevent vulnerabilities from reaching production environments.

5. Harmonic Security

Harmonic Security adopts a “zero-touch” philosophy for data protection, aiming to minimize user friction while maintaining security. Instead of rigid blocking, it uses pre-trained data protection models to identify sensitive information and “nudge” users toward safer behaviors, effectively training them during their daily workflows.

This platform reduces the operational burden on security teams by automating the classification of risky AI services. Harmonic provides clear visibility into safe versus dangerous apps, allowing organizations to confidently approve low-risk tools while automatically restricting access to those that pose a threat.

6. Lasso Security

Lasso Security delivers end-to-end defense for Large Language Models, combining shadow IT detection with advanced access control. Its “LLM Guardian” technology sits between users and models to monitor data flow, inspect content for anomalies, and enforce policies that prevent sensitive data sharing.

The platform emphasizes context-aware security, ensuring users only access data they are authorized to see, even when interacting with an AI that possesses broad organizational knowledge. Lasso also includes specific defenses against data poisoning and model theft, positioning it as a robust solution for enterprises building their own models.

7. Nightfall AI

Nightfall AI leverages its established cloud DLP technology to offer a specialized solution for Generative AI security. It uses AI-driven detectors to scan prompts and file uploads for PII, API keys, and other secrets, automatically redacting this information before it is processed by external AI vendors.

The solution deploys via browser plugins and endpoint agents to capture data at the source, preventing exfiltration through copy-paste actions. Nightfall’s focus on high-precision detection minimizes false positives, ensuring that legitimate workflows proceed smoothly while strictly enforcing data compliance.

8. Knostic

Knostic addresses the critical challenge of access control within GenAI environments, specifically the risk of users bypassing permissions through conversational interfaces. It enforces “need-to-know” policies by analyzing the context of user queries and underlying data permissions, preventing the accidental over-sharing of sensitive knowledge.

The platform uses LLMs to dynamically generate and adjust access policies, reducing the manual administrative work required for role-based access controls. Knostic provides automated compliance reporting, helping organizations demonstrate that their AI deployments adhere to strict internal and external privacy standards.

How to Choose the Best Generative AI Security Provider

- Determine if your primary goal is securing employee browser usage or protecting internal LLM applications.

- Evaluate the platform’s ability to discover “Shadow AI” without manual input to ensure full visibility.

- Prioritize generative AI security solutions that offer real-time remediation, like redaction rather than just passive logging.

- Check for seamless integration with your existing identity providers to enforce consistent user-based policies.

- Select a vendor that minimizes friction by offering educational nudges to guide user behavior.

FAQs

What are the main risks of using generative AI in the enterprise?

The primary risks include data leakage, where employees share PII or IP with public models, and “Shadow AI,” where unsanctioned tools are used without oversight. Prompt injection attacks also pose a threat, as they can manipulate LLMs into bypassing safety filters and exposing backend data.

How do GenAI security tools differ from traditional DLP?

Traditional DLP uses rigid regex patterns that often fail to understand conversational context, leading to false positives. GenAI security tools use semantic analysis to interpret the intent behind a prompt, allowing them to distinguish between legitimate work and data exfiltration attempts.

Can I just block ChatGPT to secure my organization?

Blocking ChatGPT often results in “Shadow IT,” where employees switch to personal devices or unmonitored tools to continue their work. A more effective strategy is to use security platforms that enable safe usage by redacting sensitive data while keeping the productivity benefits accessible.

Do I need a browser extension for GenAI security?

A browser extension is often the most effective method for securing employee usage because it sees “last-mile” actions like copy-pasting and file uploads. Network proxies may miss encrypted traffic or fail to see the context visible only within the browser session.

What is “Shadow AI” and why is it dangerous?

Shadow AI refers to the use of AI tools by employees without IT department approval or knowledge. It is dangerous because these tools lack enterprise security controls, meaning any data shared with them leaves the organization’s protective perimeter and could be used to train public models.