Opera Aria, the AI-powered assistant integrated into Opera’s AI browser platform, significantly expands how users interact with web content. As browsing assistants and AI browsing capabilities become more prevalent across enterprise environments, security teams evaluating these technologies need to understand the specific risks that Opera Aria introduces.

This analysis examines Opera Aria along three dimensions: its security model, integration design, and user experience framework. The goal is to give security leaders a clear understanding of Opera Aria vulnerabilities, AI browser agents, and the emerging threat patterns that distinguish AI browsing risks from traditional web security challenges.

Evaluating Opera Aria’s Security Architecture

Opera Aria operates as an integrated AI assistant within Opera’s Chromium-based browser, combining multiple LLM providers, including Google Gemini and OpenAI’s ChatGPT. Unlike some competing AI browsers, Opera positions the platform as privacy-conscious by implementing conversation isolation and encrypted storage. However, this approach operates within a fundamentally constrained security environment where AI-specific vulnerabilities compound traditional browser risks.

The Security Model

Opera enforces conversation isolation, preventing Aria from accessing browsing history or previous chat sessions directly. Conversations are encrypted and retained on Opera’s servers for approximately 30 days before deletion. While this encryption approach is methodologically sound, it doesn’t address the core vulnerability inherent in AI browser agents: the AI processes all webpage content without distinguishing legitimate information from injected malicious instructions. This architectural limitation creates the foundation for AI browsing risks that operate outside traditional browser security controls.

Integration Design and Data Flow

The architecture employs a hybrid model combining local processing with cloud-based LLM inference. Unlike some alternatives, this design minimizes unnecessary data transmission, though it introduces critical dependencies on third-party AI providers. When users interact with Opera Aria, data flows to OpenAI and Google infrastructure for processing. Both providers retain anonymized conversation portions according to their respective compliance periods, creating data residency patterns that enterprises must account for in their risk assessments.

The Tab Commands feature attempts to reduce privacy exposure by processing command instructions server-side rather than transmitting full tab metadata. However, this selective processing doesn’t eliminate the fundamental vulnerability: any webpage content visible to the user becomes accessible to the AI engine, creating potential exposure for sensitive information regardless of transmission optimization.

User Experience as an Attack Surface

Opera removed the authentication requirement for Aria to reduce friction and enable immediate access to AI capabilities. This design decision prioritizes usability over security and inadvertently expands the attack surface. Without an authentication step, users may be less cautious about the data they enter into the interface, and malicious actors may find it easier to abuse the system through social engineering or indirect attacks. Because Opera Aria is free and always on, traditional authentication and authorization controls are significantly weaker than in enterprise AI tools that require formal access management.

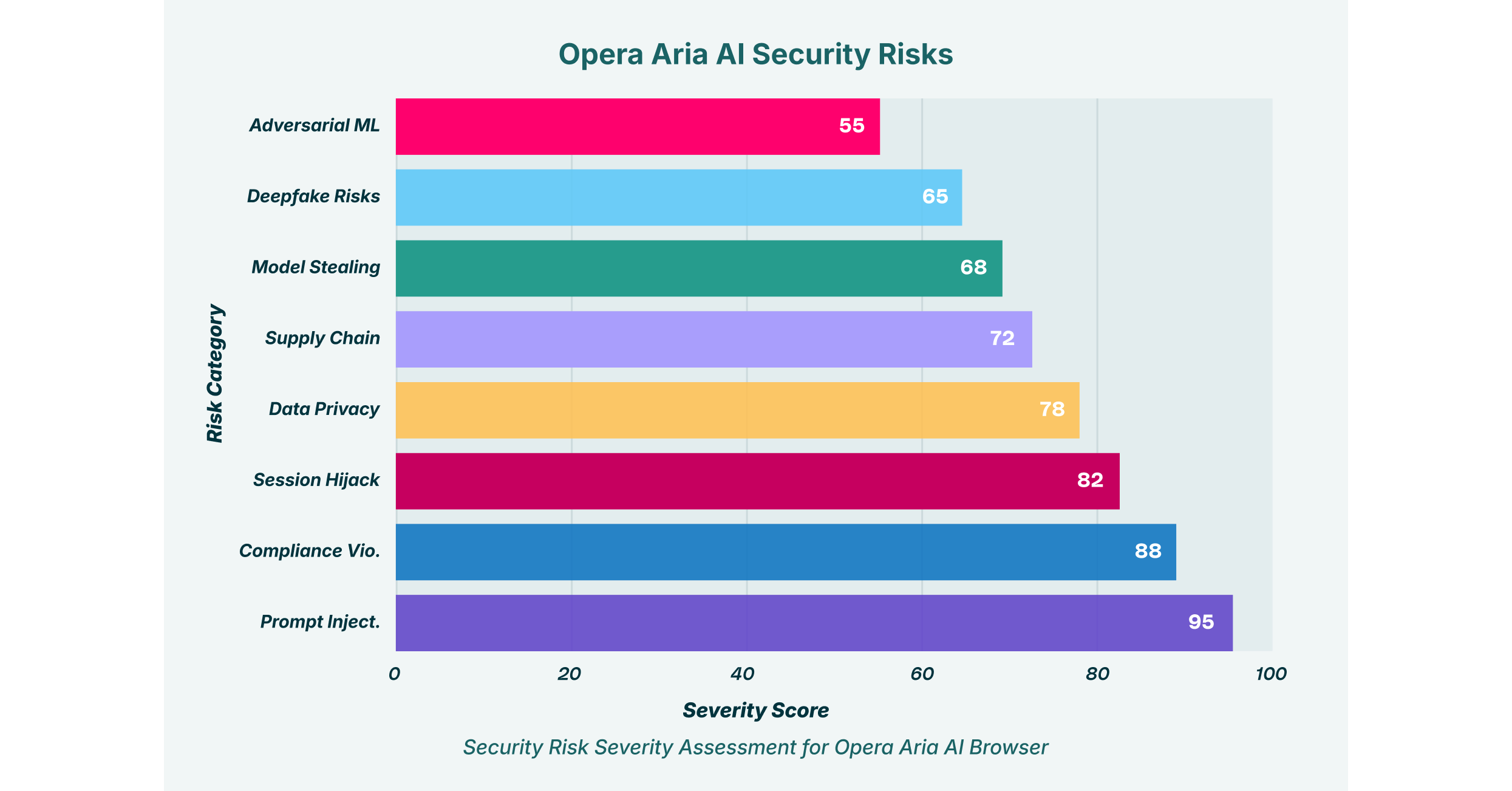

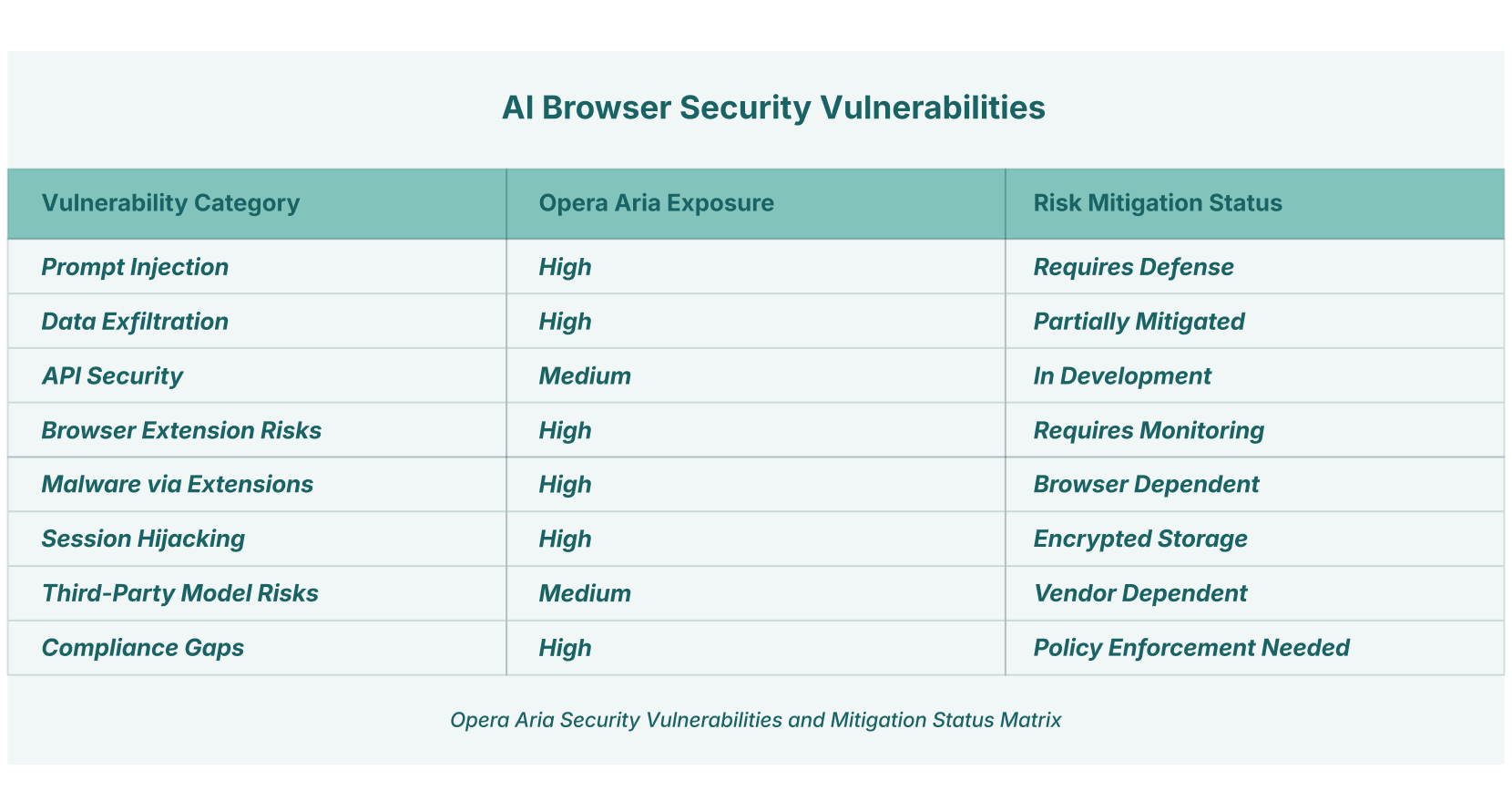

AI browsers introduce fundamentally new attack vectors that conventional browser security measures cannot address. The vulnerabilities affecting Opera Aria extend far beyond phishing or malware; they encompass AI-specific threats that exploit the unique architectural properties of AI browser agents. Below is a detailed examination of specific Opera Aria risks and AI browsing vulnerabilities.

1. Prompt Injection and Indirect Command Execution

Prompt injection represents the most critical vulnerability affecting AI browser technology across all platforms, including Opera Aria. Unlike traditional web attacks, prompt injection occurs when malicious instructions are embedded within webpage content, hidden from human view but visible to the AI. When users request Aria to summarize a webpage, the AI processes all textual content without distinguishing legitimate article text from attacker-controlled commands.

Attackers hide injection prompts using multiple techniques: white text on white backgrounds, HTML comments within page source code, innocuous-appearing comments on social platforms, or data attributes invisible to human readers. Once processed, these injections can instruct the AI to extract email contents, copy login credentials, capture authentication tokens, or exfiltrate sensitive files to attacker-controlled infrastructure.
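Some of the hiding techniques above can be filtered out before page text ever reaches a model. The following is a minimal sketch, not a description of how Aria or any other product works: it uses Python's standard `html.parser` to keep only text outside HTML comments, attributes, and elements hidden via inline styles or the `hidden` attribute. The names `VisibleTextExtractor` and `visible_text` and the list of style hints are illustrative assumptions. It assumes reasonably well-formed HTML, and it cannot catch white-on-white text, which requires computed styles from a real rendering engine.

```python
from html.parser import HTMLParser

# Inline-style fragments treated as "hidden" in this sketch (assumed, incomplete list).
HIDDEN_STYLE_HINTS = ("display:none", "visibility:hidden", "font-size:0")
# Void elements have no closing tag, so they must not affect nesting depth.
VOID_TAGS = {"br", "hr", "img", "input", "meta", "link", "area", "base",
             "col", "embed", "source", "track", "wbr"}


class VisibleTextExtractor(HTMLParser):
    """Collects text that is not inside a comment, attribute, or hidden subtree."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.chunks = []
        self.hidden_depth = 0  # greater than 0 while inside a hidden subtree

    def _is_hidden(self, tag, attrs):
        if tag in ("script", "style", "template", "noscript"):
            return True
        attr_map = dict(attrs)
        if "hidden" in attr_map:
            return True
        style = (attr_map.get("style") or "").replace(" ", "").lower()
        return any(hint in style for hint in HIDDEN_STYLE_HINTS)

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        # Every element nested inside a hidden subtree is counted as hidden too.
        if self.hidden_depth or self._is_hidden(tag, attrs):
            self.hidden_depth += 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.hidden_depth:
            self.hidden_depth -= 1

    def handle_data(self, data):
        # Comments never reach handle_data, and attribute values are never text.
        if not self.hidden_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

A filter like this narrows the injection surface but does not close it: instructions written in plainly visible text still reach the model.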

The vulnerability is particularly insidious because execution happens automatically following a simple user request. Users requesting document summarization or information extraction remain unaware that parallel malicious actions are occurring within their browsing session. Research by Brave Browser demonstrated this vulnerability in competing AI browser agents, showing how attackers can craft URLs containing encoded malicious instructions that execute when users share links or access content through AI browsing workflows.

2. Data Exfiltration Through AI Context Windows

The AI’s context window functions as its short-term memory, processing all information visible on a webpage. Users frequently interact with sensitive data during browsing sessions: financial account details, passwords stored in forms, proprietary business information, customer records, and healthcare data. When Aria processes pages containing such information, that data enters the LLM’s processing pipeline regardless of whether the user explicitly requests it.

Even without active injection attacks, users requesting analysis or summarization may inadvertently cause the AI to reveal sensitive information in its responses. A user asking Aria to “summarize this email thread” while viewing employee contact information or salary discussions might cause the AI to include that information in its summary output, which could then be logged, analyzed, or retained according to the LLM provider’s data retention policies.

For financial services firms handling payment card data or customer account information, this can create compliance violations under PCI-DSS and GLBA when users summarize pages containing customer data. Healthcare professionals summarizing patient portals risk exposing protected health information under HIPAA. The risk compounds across AI browsing scenarios where users expect privacy that the underlying AI processing model does not provide.
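One way to reduce this exposure is to redact payment card numbers from text before it is handed to any AI assistant. The sketch below is an illustration under stated assumptions, not a complete data loss prevention control: it finds 13 to 19 digit sequences (optionally separated by spaces or hyphens) and replaces those that pass the Luhn checksum. The function names and the `[REDACTED-PAN]` placeholder are hypothetical.

```python
import re

# 13-19 digits, each optionally followed by a single space or hyphen.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit, counting from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def redact_card_numbers(text: str) -> str:
    """Replace Luhn-valid card-like numbers with a placeholder."""

    def _replace(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[REDACTED-PAN]" if luhn_valid(digits) else match.group()

    return CARD_CANDIDATE.sub(_replace, text)
```

The Luhn check keeps false positives down (order numbers and phone numbers usually fail it), but other sensitive data types such as health records need their own detectors.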

3. Browser Extension Exploitation and DOM Manipulation

The “Man-in-the-Prompt” exploit, identified by security researchers studying AI browsers, demonstrates how any browser extension with DOM access can compromise Opera Aria users. Extensions can read, inject into, and modify prompts without special authorization. This creates a vulnerability path where a compromised extension becomes what researchers term a “hacking copilot,” injecting prompts to steal data, manipulate AI responses, and cover its tracks by deleting conversation history.

Industry data shows that 99% of enterprise users have browser extensions installed, with 53% having extensions with high-risk permission scopes. Research identifies approximately 5.6% of AI-enabled browser extensions as malicious. Taken together, these figures suggest that widespread Opera Aria deployment is likely to result in extension-based compromise incidents. The vulnerability becomes particularly acute when extensions request permissions to access web content or modify pages, capabilities that also let them modify Aria’s interaction layer.

4. Session Hijacking and Authentication Token Theft

Aria conversations, while encrypted in transit, remain vulnerable to session hijacking attacks. An attacker intercepting session tokens can impersonate users, accessing their chat history, cached credentials, and any authenticated integrations the user has linked to Aria. The consequences escalate when users have connected Aria sessions to other applications through APIs or single sign-on mechanisms.

Session hijacking succeeds through multiple vectors: tokens extracted from browser local storage or session storage, stolen through malicious extensions that monitor memory access patterns, or captured during transmission across unsecured networks or compromised WiFi environments. Once an attacker obtains a valid session token, they gain full access to the user’s interaction history and can manipulate future queries and responses. An attacker with session control can extract sensitive information cached in the conversation history, then delete the conversation to cover their tracks.

5. Supply Chain Vulnerabilities in Third-Party Models

Opera’s reliance on externally trained models from Google and OpenAI introduces supply chain risks inherent to AI browser agents. Data poisoning at the model level, where attackers contaminate training datasets or model weights through supply chain compromise, can cause Aria to generate biased, inaccurate, or malicious outputs. The Hugging Face incident in 2024 demonstrated how a supply chain compromise propagates across thousands of dependent systems, causing widespread impact from a single point of failure.

If OpenAI or Google’s infrastructure suffers a backdoor insertion, model poisoning attack, or unauthorized model modification, every Opera Aria user becomes exposed. Attackers can embed triggers within the model that activate under specific conditions, causing the AI to leak information, refuse legitimate requests, or execute hidden commands that compromise user systems or data. Unlike traditional software vulnerabilities with discrete patches, model-level compromise could remain undetected for extended periods, affecting users across all AI browsing platforms that depend on the compromised model.

6. API Attack Surface and Model Extraction

Opera’s Aria integration with multiple LLM provider APIs creates additional attack opportunities. API scraping, automated submission of thousands of queries to extract model behavior patterns, allows attackers to reverse-engineer the underlying model logic and identify decision boundaries. Query-based attacks can map how the model responds to various inputs, identifying edge cases and vulnerabilities.

More critically, if users integrate Opera Aria with enterprise APIs or custom LLM endpoints, those integrations become attack vectors. Misconfigured APIs lack proper authentication, rate limiting, and behavioral analysis, enabling unauthorized access to sensitive model outputs or underlying infrastructure. Attackers can craft API requests that bypass intended restrictions or extract information that should be restricted based on user permissions.
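Rate limiting is one of the controls named above that blunts both high-volume query scraping and abuse of misconfigured endpoints. The following token bucket is a minimal single-process sketch; the class name, parameters, and injectable `clock` are assumptions for illustration, and a production deployment would typically keep one bucket per client in shared storage.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity` and a sustained rate of `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the behavior can be tested deterministically
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Consume one token if available; return False when the caller should be throttled."""
        now = self.clock()
        elapsed = now - self.updated
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A caller would check `bucket.allow()` before forwarding each request to the model endpoint and return an HTTP 429 response when it is `False`.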

7. Adversarial Machine Learning and Model Manipulation

Attackers can craft adversarial inputs specifically designed to manipulate AI behavior. These prompts are engineered to bypass safety controls, trick the model into revealing information it should protect, or produce consistently biased outputs that favor attacker objectives. Adversarial attacks succeed because LLMs struggle to distinguish between legitimate edge cases and malicious manipulation attempts.

For Opera Aria, adversarial attacks could cause the AI to misclassify phishing sites as legitimate, ignore security warnings, prioritize attacker-provided information over user instructions, or execute instructions embedded within seemingly innocuous content. An attacker training adversarial prompts could create inputs that consistently trigger specific vulnerabilities in Aria’s decision logic, enabling repeatable exploitation of the same vulnerability across different user sessions.

8. Deepfake-Assisted Social Engineering and Identity Spoofing

While Opera Aria doesn’t generate deepfakes natively, the browser’s AI capabilities can be weaponized within deepfake-assisted attacks. An attacker might combine a deepfake video call with prompt-injected Aria commands to create a multi-layered social engineering scenario. The AI could be manipulated into confirming fake information, encouraging credential disclosure, or approving unauthorized transactions.

The convergence of AI-generated media and AI browser agents creates unprecedented social manipulation opportunities. Victims interact simultaneously with synthetic media and an apparently trustworthy AI assistant, both controlled or influenced by the attacker. An executive receiving a deepfake call from their CEO asking them to approve a transaction might consult Aria for verification, only to have a compromised instance provide falsified confirmation.

9. Privacy Leakage from Unsecured Data Retention

Opera stores conversation history on its servers for 30 days. During this retention period, data remains vulnerable to database breach, unauthorized access by insiders, or exploitation of zero-day vulnerabilities in Opera’s infrastructure. If Opera’s database is compromised, potentially years of user conversations could be exposed, depending on backup retention policies.

Additionally, anonymization techniques employed by OpenAI and Google, while protecting direct user identity, don’t prevent inference attacks where sufficiently detailed metadata reveals sensitive information. Even anonymized conversation data containing enough context can enable reconstruction of sensitive information or identification of specific individuals. Organizations handling GDPR-protected data or HIPAA-regulated information cannot rely on anonymization as sufficient protection for AI browsing activities. The GDPR’s data protection requirements are difficult to satisfy when an AI platform processes data through external providers without documented safeguards and processing agreements.

10. Compliance and Regulatory Non-Compliance Risks

Opera Aria creates immediate compliance challenges for regulated organizations. Users pasting customer records into Opera Aria for summarization or analysis risk violating GDPR’s restrictions on international data transfers and the security-of-processing obligations in Article 32. Healthcare providers using Aria on patient portals without a Business Associate Agreement in place risk violating HIPAA’s business associate requirements and technical safeguard standards.

Financial services firms using Aria for customer data analysis without proper audit trails risk falling short of PCI-DSS logging requirements for access to cardholder data and, for broker-dealers, the tamper-resistant record retention obligations of SEC Rule 17a-4. The fundamental issue: Aria processes data through external AI services without giving organizations visibility into how, where, and for how long that data is retained. This opacity makes demonstrating compliance nearly impossible.

11. Insecure AI-Generated Code and Configuration Artifacts

When users ask Aria to generate code, scripts, or configuration instructions, the AI could introduce vulnerabilities either naturally or through attacker manipulation. An attacker injecting prompts could cause Aria to generate deliberately weakened code, suggest unsafe configurations, or produce scripts containing backdoors. Users copying this generated code into production systems inadvertently deploy attacker-controlled logic.

The risk extends beyond malicious injection. Even uncompromised Aria instances may generate code with subtle vulnerabilities: off-by-one errors in loops, missing input validation, hardcoded credentials, or weak cryptographic implementations. These vulnerabilities propagate into production systems, where they become attack vectors for subsequent compromise.
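A lightweight review gate can catch some of these patterns before AI-generated code is pasted into a repository. The sketch below is a line-based regex scan with a small, assumed rule set (`RISK_PATTERNS` and `review_generated_code` are hypothetical names). It illustrates the idea only; it is not a substitute for a real static analysis tool or human code review.

```python
import re

# Assumed, deliberately small rule set for illustration.
RISK_PATTERNS = {
    "hardcoded-credential": re.compile(
        r"\b(password|passwd|secret|api_key|token)\s*=\s*['\"][^'\"]+['\"]",
        re.IGNORECASE,
    ),
    "dynamic-eval": re.compile(r"\b(eval|exec)\s*\("),
    "tls-verification-disabled": re.compile(r"verify\s*=\s*False"),
    "weak-hash": re.compile(r"\bhashlib\.(md5|sha1)\s*\("),
}


def review_generated_code(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_label) pairs for every risky pattern found."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for label, pattern in RISK_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings
```

Any finding should block the paste or trigger a manual review; an empty result means only that none of these specific patterns matched.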

12. Model Stealing Through API Analysis and Behavior Inference

Researchers can analyze Opera Aria responses to infer characteristics of the underlying LLMs. By submitting carefully crafted queries and analyzing response patterns, competitors or attackers can extract knowledge about model training data, behavior parameters, and decision logic. This information theft undermines Opera’s competitive differentiation and creates opportunities for attackers to develop targeted exploits against the underlying models.

AI browser agents create fundamentally new attack scenarios unavailable against traditional browsers, combining multiple attack vectors into sophisticated multi-stage campaigns. Consider this realistic scenario: A security analyst using Opera Aria to research phishing techniques visits a malicious website. The site contains hidden prompt injection code instructing Aria to navigate to the analyst’s email interface, extract subject lines and sender addresses from recent messages, and send them to an attacker-controlled server.

In parallel, the attacker had planted a compromised browser extension on the user’s system weeks earlier. This extension monitors for the exfiltrated email data, captures it, and forwards it to a command-and-control server. The user notices nothing unusual; their Opera Aria sessions appear normal, conversation history displays correctly, and no error messages appear. Only weeks later, when the attacker uses the stolen email data to launch targeted phishing campaigns against the analyst’s colleagues, does the compromise become apparent.

This attack succeeds because it exploits the inherent architectural gap in AI browsing platforms: the AI cannot distinguish between user intent and injected instructions embedded in webpage content. The attack is also difficult to detect because it leaves no obviously malicious traces in browser history, cache, or event logs.

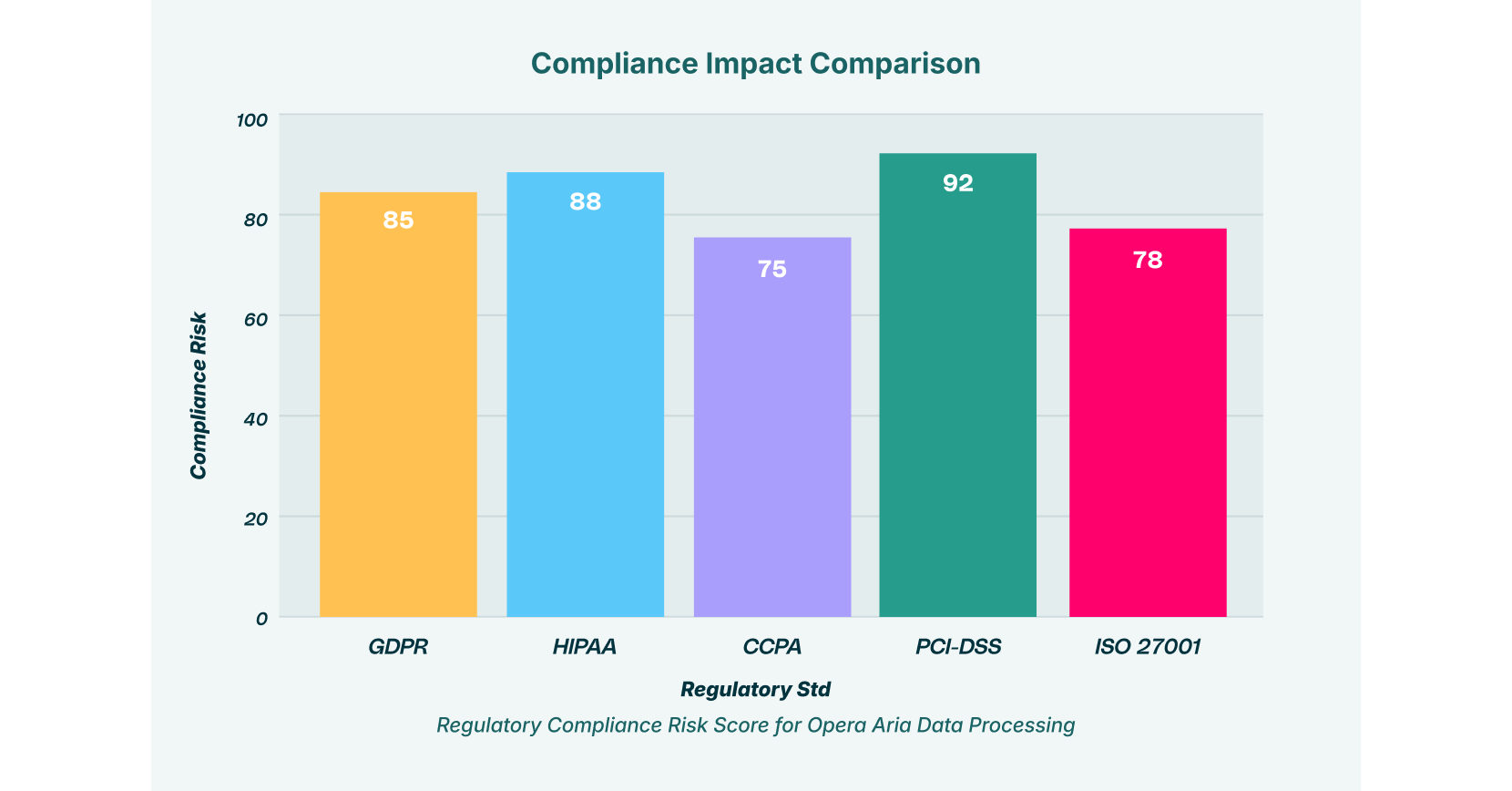

Regulatory and Compliance Framework Impact

Organizations deploying Opera Aria must account for significant regulatory complexity. PCI-DSS compliance becomes particularly challenging when payment data flows through third-party AI models without explicit consent or audit trails. HIPAA violations can arise as soon as healthcare organizations allow AI analysis of patient data without proper Business Associate Agreements and technical safeguards.

GDPR compliance presents additional challenges. The regulation requires a lawful basis for processing (such as user consent), safeguards for transfers of personal data outside the European Economic Area, and documented data processing agreements with third-party processors. Where Opera Aria routes user data through third-party providers’ servers outside Europe, those transfers must be covered by such safeguards, which organizations often cannot verify. CCPA compliance similarly requires disclosure of data sharing with third parties, creating transparency obligations that are difficult to meet when an AI browser’s data flows are opaque.

Mitigating Opera Aria Risks: Enterprise Implementation Strategies

For organizations evaluating Opera Aria or similar AI browsers, comprehensive risk mitigation requires controls operating at multiple layers. Relying solely on the browser’s built-in security is insufficient, given the architectural limitations of current AI browser agents.

Browser-Layer Enforcement Controls

Deploy browser-layer security enforcement that monitors all AI interactions and prevents submission of sensitive data to AI browser agents. LayerX’s Browser Detection and Response platform enforces data loss prevention directly at the browser application layer, preventing credential submission, document upload, or sensitive text input before they reach Aria. This approach operates transparently without requiring user intervention, enabling security policy enforcement while maintaining user productivity.

Defining AI Usage Governance

Establish clear organizational policies distinguishing between approved and unapproved AI browsing use cases. Users should never submit personally identifiable information, protected health information, payment card data, intellectual property, or proprietary code to any AI browser, regardless of privacy claims or encryption promises. Organizations should maintain centralized registries of approved AI browser use cases, with regular audits to ensure compliance.

Malicious Extension Detection and Prevention

Eliminate unnecessary browser extensions, and conduct comprehensive security audits of remaining extensions. Monitor for suspicious extension behavior, particularly around DOM manipulation, API calls to external servers, or attempts to access sensitive browser storage. Browser extension risk assessment should consider the extension’s source, installation type, download count, and historical security incidents.
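An extension audit can start with a simple permission-based triage. The sketch below scores a Chromium-style extension manifest by the permissions it requests and whether it was sideloaded. The permission names are standard manifest permissions, but the weights, thresholds, and function names are assumptions chosen for illustration, and the scoring leaves out other factors mentioned above, such as download count and incident history.

```python
# Assumed weights: broader page and credential access scores higher.
PERMISSION_WEIGHTS = {
    "<all_urls>": 3,   # can read and modify every page, including AI chat UIs
    "cookies": 3,      # can read session cookies
    "webRequest": 2,   # can observe network traffic
    "scripting": 2,    # can inject scripts into pages
    "tabs": 1,         # can see open tab URLs and titles
}


def extension_risk(manifest: dict, sideloaded: bool = False) -> int:
    """Sum the weights of requested permissions; add a penalty for sideloaded installs."""
    requested = set(manifest.get("permissions", [])) | set(manifest.get("host_permissions", []))
    score = sum(PERMISSION_WEIGHTS.get(permission, 0) for permission in requested)
    if sideloaded:
        score += 2
    return score


def triage(score: int) -> str:
    """Map a risk score to an action using assumed thresholds."""
    if score >= 5:
        return "block"
    if score >= 3:
        return "review"
    return "allow"
```

For example, an extension requesting `cookies`, `scripting`, and `<all_urls>` scores 8 and would be blocked under these thresholds, while one requesting only `storage` scores 0.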

Session Authentication Hardening

Implement multi-factor authentication for accounts linked to Opera Aria or other AI browser agents. Restrict AI assistant access to non-sensitive applications and services only. Use conditional access policies to prevent access to AI browsers from untrusted devices or networks.

Browser Sandboxing and Session Isolation

Use browser sandboxing features to compartmentalize Aria sessions from general browsing, limiting exposure if session tokens are compromised. This approach ensures that compromise of one browsing context doesn’t automatically grant access to all user sessions.

Zero-Trust Architecture for AI Interactions

Treat all AI browser outputs as untrusted input requiring validation before acting on recommendations or following instructions. When Aria suggests navigating to a URL, executing a command, or approving a transaction, verify through independent channels before complying. This approach acknowledges that AI browser agents and AI browsing platforms can be compromised or manipulated without the user’s awareness.
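For AI-suggested URLs specifically, the validation step can be as simple as an exact-match host allowlist. The following is a minimal sketch using Python's standard `urllib.parse`; the hosts in `ALLOWED_HOSTS` are hypothetical placeholders, and a real policy would come from the organization's own registry of approved destinations.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; replace with the organization's approved hosts.
ALLOWED_HOSTS = {"intranet.example.com", "docs.example.com"}


def is_trusted_url(url: str) -> bool:
    """Accept only HTTPS URLs whose exact hostname is on the allowlist."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # `hostname` excludes any userinfo and port, which defeats tricks such as
    # "https://docs.example.com@evil.test/".
    host = (parts.hostname or "").lower()
    return host in ALLOWED_HOSTS
```

Exact matching is deliberate: suffix or substring checks would accept look-alike hosts such as `docs.example.com.evil.test`.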

The Persistent Nature of AI Browser Security Challenges

Security researchers and AI safety experts have reached consensus on one critical point: prompt injection vulnerabilities cannot be permanently eliminated under current AI architecture. As long as systems process untrusted data and can influence actions based on that data, attack vectors persist. This architectural reality means organizations must treat AI browsing risks and AI browser agents as managed risks requiring continuous monitoring and control, rather than eliminated risks.

This doesn’t mean organizations should abandon AI browsers entirely. Rather, it requires deploying these technologies with realistic security expectations, comprehensive policy frameworks, and defense-in-depth controls. The productivity gains from integrated browsing assistants and AI browsing capabilities are genuine, but they must be balanced against the specific threat landscape these technologies introduce.

Secure Adoption of Opera Aria in Enterprise Environments

Opera Aria presents a complex risk-reward calculation for enterprises. The productivity gains from integrated AI assistance are substantial, but Opera Aria vulnerabilities introduce threats to data confidentiality, integrity, and availability. The emergence of AI browsers and AI browser agents represents a permanent shift in how modern users interact with web content and sensitive systems.

Organizations should evaluate Opera Aria within a comprehensive security framework that acknowledges both the capabilities and AI browsing vulnerabilities of these platforms. Deployment should follow the principle of least privilege, restricting Opera Aria access to non-sensitive use cases while maintaining rigorous controls over what data users can submit and how user sessions are managed.

The evolution of browsing assistants creates new efficiency opportunities, but also introduces new attack surfaces requiring new defensive approaches. The question for security teams isn’t whether to adopt AI browser technology; competitive pressures and user demand will drive adoption regardless. The question is whether to adopt it safely, with appropriate security governance aligned to organizational risk tolerance and regulatory obligations.

For security teams evaluating AI browser deployment, LayerX’s Browser Detection and Response platform provides specialized visibility and enforcement capabilities necessary to govern Opera Aria and other AI browser agents safely. By implementing browser-layer security enforcement, organizations can harness the productivity benefits of integrated AI assistance while containing the security and compliance risks inherent to AI browsing technologies. The platform’s lightweight design ensures that users can continue working productively while the underlying security infrastructure protects against data loss, credential theft, extension-based compromise, and unsafe AI browsing patterns.

Learn more about protecting your organization against AI browser risks by visiting LayerX’s resources on GenAI security and browser protection strategies.