The rapid integration of Generative AI into daily workflows has created a significant visibility gap for enterprise security teams. Employees are no longer waiting for IT approval to adopt new tools. They are actively seeking out browser extensions and web-based chatbots to increase their productivity. This decentralized adoption has rendered traditional perimeter defenses largely ineffective. You cannot secure what you cannot see. To regain control over this expanding ecosystem, organizations must initiate a comprehensive AI audit.

This process is not merely a compliance exercise. It is a critical operational requirement for 2025. The goal is to uncover the specific applications and data flows that currently exist in your environment. By identifying unauthorized usage, often referred to as “Shadow AI,” you can begin to quantify your actual risk exposure. An effective audit transitions your security posture from reactive firefighting to proactive governance. It allows you to understand not just which tools are present, but how they are being used and what corporate data is feeding them.

The Hidden Scope of Shadow AI

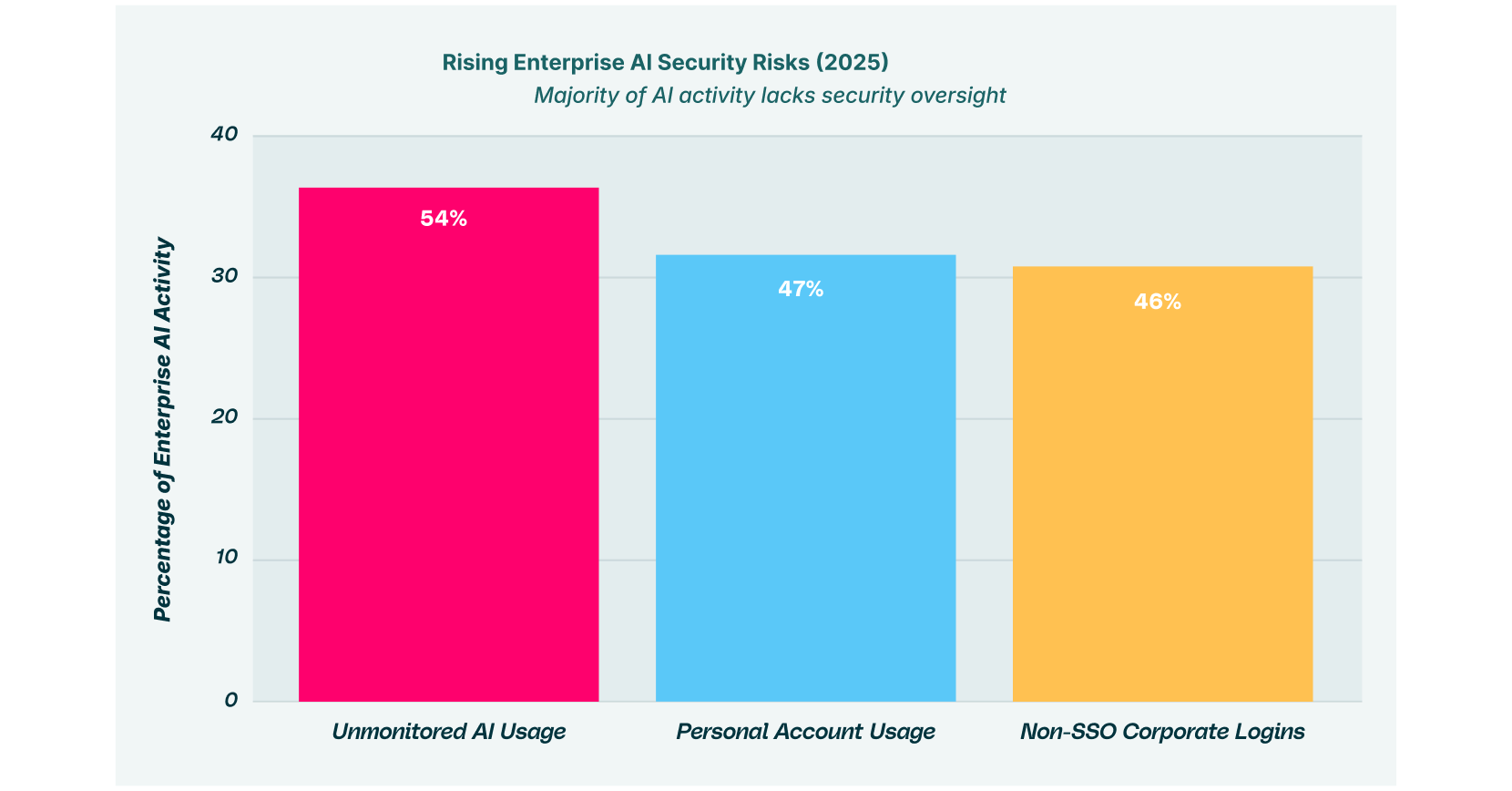

As illustrated in Figure 1 above, the scale of unmonitored activity is often shocking to security leaders. When 89% of AI usage occurs outside of corporate oversight, standard security logs fail to provide an accurate picture of risk. Employees frequently use personal email accounts to log into these services, bypassing Single Sign-On (SSO) protocols that would normally trigger an audit trail. This creates a fragmented identity landscape where corporate data lives in accounts that IT cannot access or deprovision.

An AI audit serves as the primary mechanism to illuminate this invisible activity. It digs deeper than network-level traffic analysis, which often struggles to decrypt the contents of modern web sessions. Instead, a browser-centric audit looks at the actual rendering of web pages and the execution of extensions. This level of detail is necessary to distinguish between a harmless visit to a tech blog and an active session where proprietary code is being pasted into a chatbot for optimization.

This visibility is crucial for mapping your “attack surface.” Every unmonitored browser extension is a potential entry point for supply chain attacks. Every personal GenAI account is a potential silo for data leakage. By bringing these shadow assets into the light, you take the first definitive step toward a secure enterprise browser environment.

Constructing Your AI Audit Framework

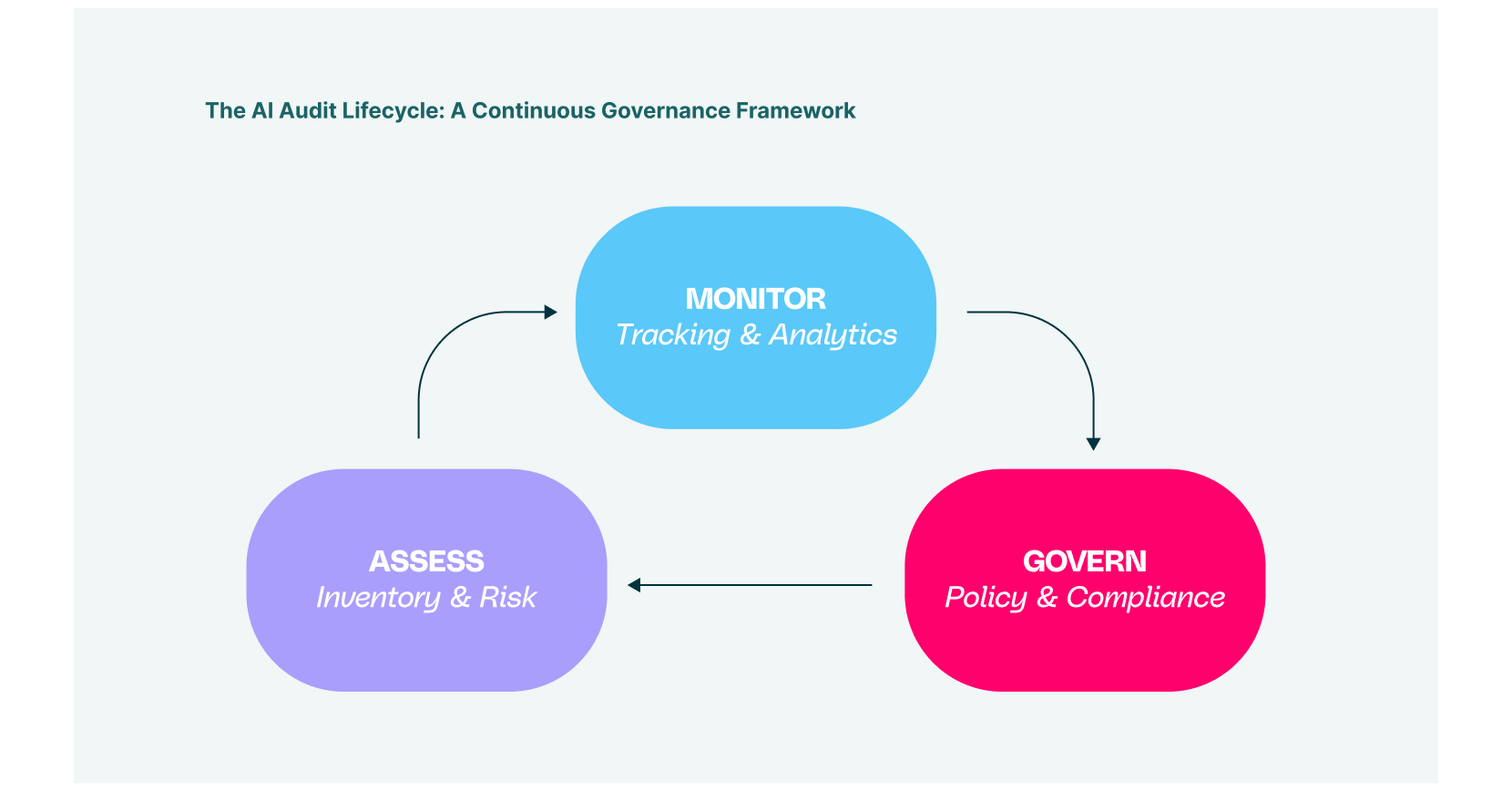

Establishing a structured approach is essential for success. A sporadic or ad-hoc review will only yield partial results. Security leaders should instead adopt a cyclical AI audit framework that treats security as a continuous process rather than a one-time event. This framework must account for the fluid nature of the GenAI market, where new models with novel capabilities are released weekly.

The framework is built upon three core pillars: Assessment, Monitoring, and Governance. These phases are interconnected. Your assessment informs your monitoring strategy, which in turn generates the data needed for effective governance. Unlike static software assets, AI models evolve based on their inputs and training data. Therefore, your AI audit framework must be agile enough to adapt to these shifts without requiring a complete rewrite of your policies every quarter.

Phase 1: Assessment and Asset Discovery

The lifecycle begins with a rigorous inventory process. The initial phase of your AI security audit must focus on total discovery. This goes far beyond simply listing the vendors you currently pay for. You must identify every digital touchpoint where corporate data interacts with an algorithmic model. In the modern enterprise, this interaction predominantly happens within the web browser, making the browser the most critical sensor in your security stack.

Security teams need to deploy tools capable of inspecting browser traffic to identify API calls to AI services. This includes major platforms like OpenAI and Anthropic, but also the “long tail” of thousands of niche AI productivity tools. A thorough AI security audit will often reveal hundreds of unapproved applications, ranging from grammar checkers to automated meeting notetakers, that have quietly installed themselves into the corporate workflow.

It is also vital to categorize these assets by their risk profile. Does the tool claim ownership of the input data? Does it share data with third parties for training purposes? Your inventory must differentiate between “Enterprise” instances that offer data privacy guarantees and “Consumer” versions that do not. This distinction is often the difference between a secure workflow and a compliance violation.

Phase 2: The AI/ML Security Audit Deep Dive

Once you have established a clear inventory, the focus shifts to the models themselves. While most enterprises consume AI via SaaS, those developing internal tools face a unique set of challenges. An AI/ML security audit is required here to evaluate the integrity of the model and its training pipeline. This is where you scrutinize the “ingredients” of your internal AI projects.

This specific type of audit examines the provenance of your data and the security of your libraries. Are you using open-source components with known vulnerabilities? Is the training data utilized in your models free from poisoning attacks? In an AI/ML security audit, you are looking for weaknesses that an adversary could exploit to manipulate the model’s output or infer sensitive training data through inversion attacks.

For example, imagine a scenario where an internal financial model is trained on unsanitized public data. If that data contains hidden malicious patterns, the model could be tricked into making erroneous predictions. By stress-testing your proprietary models against these adversarial techniques, you ensure that your internal innovations do not become liabilities. This level of scrutiny is essential for organizations deploying customer-facing AI agents.

Tackling Data Leakage Risks

Perhaps the most pressing concern for CISOs today is data exfiltration. It is remarkably easy for an employee to innocently paste a sensitive customer list into a chatbot to “format it nicely.” This simple action constitutes a data breach. An AI data security audit focuses specifically on these data flows to prevent irreversible loss.

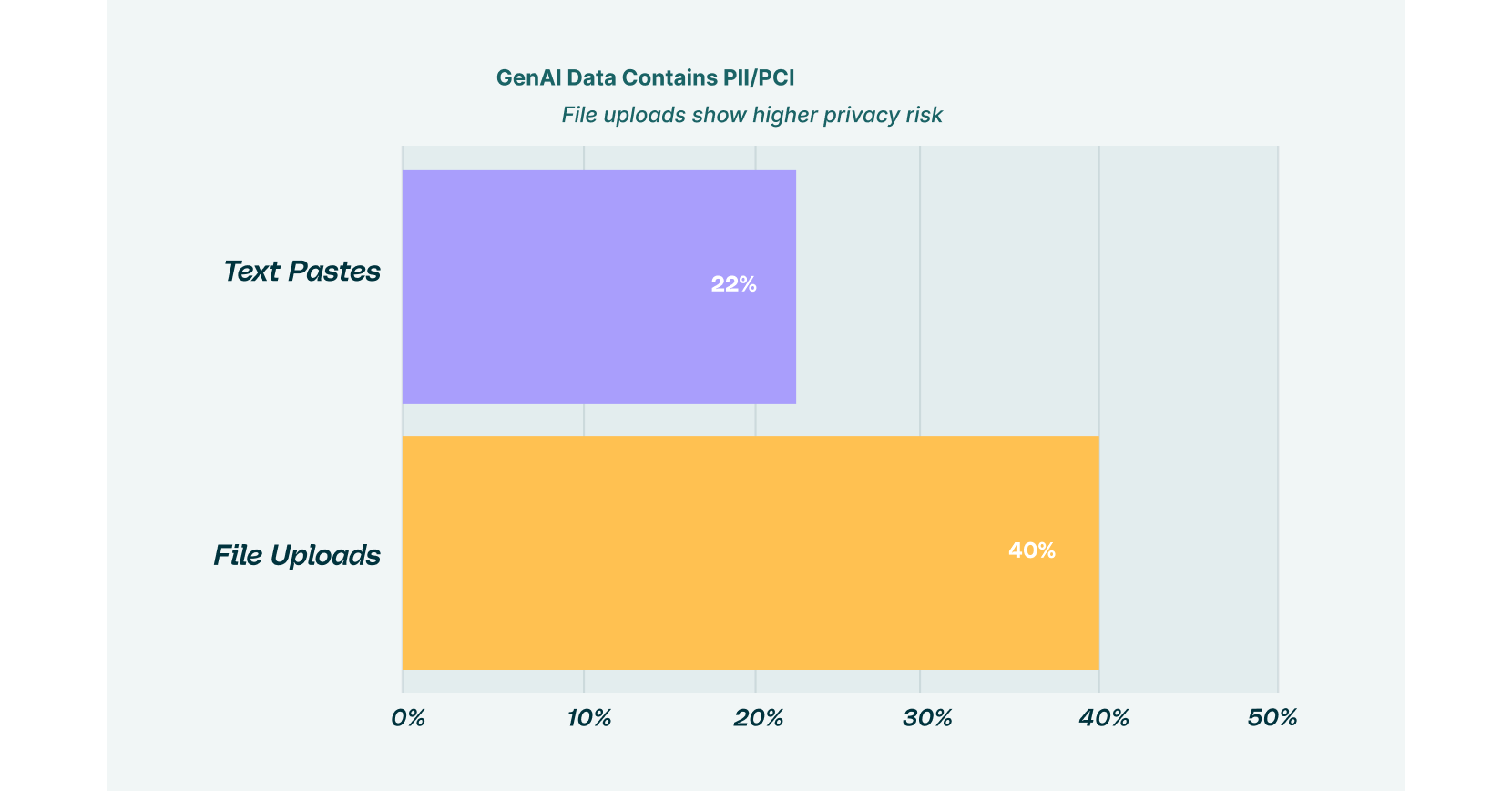

The browser acts as the gateway for this data. Text pastes, file uploads, and form submissions are the primary mechanisms of exfiltration. Your audit needs to categorize these interactions with precision. It is not sufficient to know that a user visited a GenAI site; you must know what they sent to it and why.

Conducting an AI Data Security Audit

As Figure 3 demonstrates, file uploads are particularly dangerous. Documents often contain unstructured data, financial projections, legal strategy, and PII, which is harder to filter than simple text strings. A rigorous AI data security audit will implement DLP (Data Loss Prevention) rules that scan these uploads in real-time. This ensures that files matching sensitive patterns are blocked before they ever leave the browser.

You must also consider the context of the data. A developer pasting code into a secure, enterprise-sanctioned AI tool might be acceptable. That same developer pasting the same code into a public, free-tier chatbot is a high-risk event. Your AI data security audit should help you define these contextual boundaries. It allows you to build policies that are granular enough to stop bad behavior without blocking legitimate work.

Furthermore, this audit process should assess the risk of “prompt injection.” This occurs when malicious instructions are hidden within content that an AI processes. If your employees use AI to summarize web pages or emails, they could be vulnerable to indirect attacks that manipulate the AI into exfiltrating data. Your audit must verify that your browser security tools can detect and isolate these potential threats.

Continuous Monitoring with BDR

An audit that ends with a static PDF report is a failed audit. The findings must transition into a state of active, continuous monitoring. This is where the concept of Browser Detection & Response (BDR) becomes integral to the AI audit framework. BDR tools provide the telemetry needed to maintain the “Monitor” phase of the lifecycle, turning a snapshot in time into a live video feed of your security posture.

BDR offers visibility into the actual DOM (Document Object Model) of the web page. This allows security teams to see exactly how users interact with AI tools in real time. You can track which extensions are being installed, which forms are being filled out, and where data is traveling. This continuous stream of data ensures that your AI audit remains relevant even as user behavior shifts.

This real-time capability is crucial for identifying “drift.” Over time, approved tools may change their terms of service, or employees may drift back to using unauthorized personal accounts. Continuous monitoring detects these changes immediately. It allows you to intervene the moment a new risk emerges, rather than waiting for the next annual assessment cycle.

Governance and Policy Enforcement

The final piece of the puzzle is governance. Regulations like the EU AI Act and various data privacy laws demand strict accountability. Your AI audit framework must generate the artifacts necessary to prove compliance. This involves documenting not just what tools are used, but how decisions are made regarding their approval and usage.

If an AI/ML security audit reveals a high-risk model, the governance log should show the remediation steps taken. Did you decommission the model? Did you implement additional guardrails? Automated reporting is vital here. Security teams cannot afford to spend weeks manually compiling spreadsheets for auditors. Dashboard-driven reporting that pulls directly from browser telemetry ensures that your evidence is always ready and accurate.

This governance layer also enables “coaching” policies. Instead of a binary “allow” or “block” approach, which often drives users toward Shadow IT, you can implement real-time user education. If a user attempts a risky action, the browser can intervene with a pop-up explaining the risk and suggesting a safe alternative. This respects the user’s intent to be productive while enforcing the necessary security boundaries.

How to Integrate AI Into Your Enterprise?

The integration of AI into the enterprise is inevitable. The organizations that thrive in this new era will be those that can safely harness its power without compromising their data. A dedicated AI audit is the foundational step in this journey. It clears the fog of Shadow AI, identifies the cracks where data might leak, and establishes a rhythm of continuous improvement.

By adopting a browser-first AI audit framework, you place security exactly where the work happens. You gain the visibility to assess risks accurately, the power to monitor interactions in real-time, and the wisdom to govern with nuance. Start your assessment today. Inventory your assets, analyze your data flows, and secure the browser. The future of your enterprise intelligence depends on it.