Security operations centers face a different reality in 2026 than they did just a few years ago. The image of a human hacker manually typing code is largely outdated. Today, autonomous software agents execute complex campaigns without direct human oversight. An AI-powered cyber attack is not a future prediction. It is the dominant operational reality that enterprise defense teams face.

Attack economics have shifted fundamentally. Generative AI lowered the barrier to entry so drastically that sophisticated social engineering and code injection are now available to anyone with a dark web LLM subscription. This accessibility has flooded the corporate perimeter with attacks that are both high-volume and high-fidelity, and that legacy secure web gateways (SWGs) fail to parse.

The Escalation of AI Threats in 2026

Attacks are constructed differently now. Traditional malware relied on static signatures that defenders could catalog and block. In contrast, a modern AI cyber attack is polymorphic. It rewrites its own code to evade detection. It adapts social engineering scripts in real time based on a target’s LinkedIn profile or recent email threads.

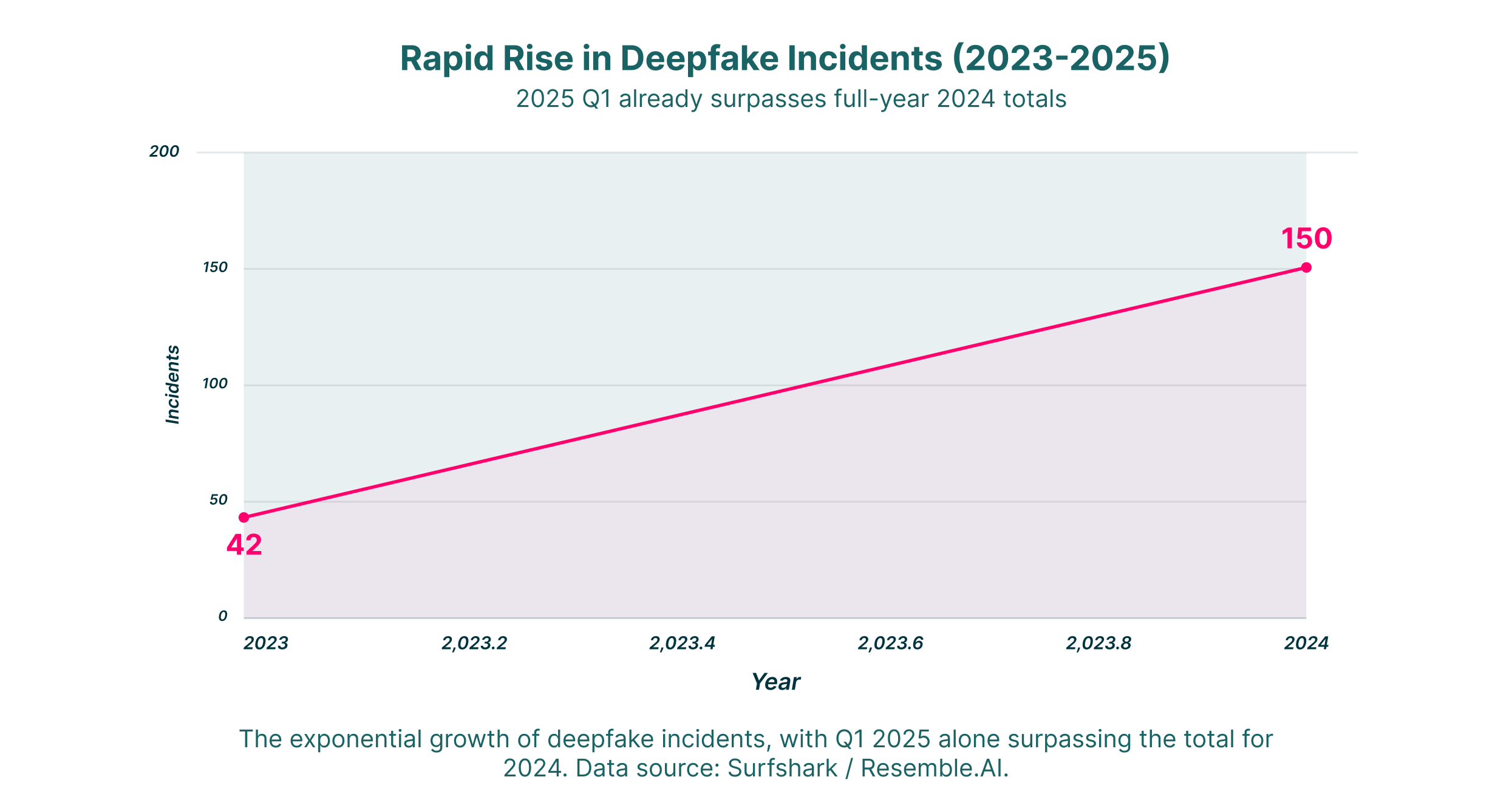

This adaptability creates a significant blind spot for standard defenses. When an intelligent agent generates an attack, it leaves no recognizable fingerprint until execution. Data from early 2025 painted a stark picture of this escalation. Synthetic media and identity theft numbers are particularly alarming.

Visualizing the Surge

These incidents did not grow linearly. Accessible tools for voice cloning and video synthesis became widespread. Consequently, the frequency of attacks utilizing these technologies spiked.

The trajectory of deepfake incidents reveals a disturbing trend in the modern threat environment. As the graph illustrates, reported incidents exploded. Q1 2025 alone recorded 179 incidents, which surpassed the entire total of the previous year. This vertical ascent highlights how accessible Generative AI tools have become for malicious actors. An AI-powered cyber attack no longer requires nation-state resources. It only needs a subscription to a synthetic media tool. This data confirms that attacks involving voice cloning and video fabrication have moved from theoretical risks to daily operational realities for security teams.

The Era of the Agentic AI Cyber Attack

The most dangerous development in the current security environment is the agentic AI cyber attack. Unlike passive tools that wait for a human operator, agentic AI operates with specific goals and the autonomy to achieve them.

Consider a scenario where a malicious agent receives a task: “Obtain administrative credentials for the Salesforce environment.” This AI agent scans public repositories for email patterns. It identifies key personnel. It drafts contextually perfect phishing emails. It even engages in real-time chat conversations with helpdesk support to reset passwords. It operates 24/7. It tests thousands of vectors simultaneously until one succeeds.

This automation allows for “big game hunting” at scale. Human attackers previously had to choose between high-volume spray-and-pray attacks and high-effort spear phishing. AI attack systems remove that trade-off, allowing criminals to launch millions of hyper-personalized attacks simultaneously.

Common AI Cyber Attack Examples

Defenders must dissect these threats to stop them. The most prevalent vectors we track in 2026 include:

- Deepfake Executive Impersonation: Attackers use GenAI to clone the voice or video likeness of a C-level executive. These fakes authorize urgent wire transfers in video calls. The fidelity is high enough to fool employees who have worked with the executive for years.

- Polymorphic Malware Injection: Code that constantly changes structure to bypass antivirus signatures. AI generates unique file hashes for every single download. This renders hash-based detection useless.

- Automated Vulnerability Discovery: Instead of searching for bugs manually, attackers point AI scanners at the open-source code an enterprise uses. The scanners ingest thousands of lines of code, identify zero-day vulnerabilities, and write exploit scripts before developers can patch them.

- LLM Jailbreaking & Poisoning: Attackers feed malicious prompts to internal enterprise chatbots. They trick the bots into revealing proprietary data or sensitive PII. This technique is known as “prompt injection.”
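
The polymorphic malware item above claims that a unique hash per download defeats hash-based detection. The following is a minimal sketch of why that holds, using harmless placeholder bytes. The names `known_sample`, `variant`, and `is_blocked` are assumptions made for illustration, and the blocklist is a toy stand-in, not any vendor's detection logic.

```python
import hashlib


def sha256_hex(payload: bytes) -> str:
    """Return the SHA-256 digest of a payload as a hex string."""
    return hashlib.sha256(payload).hexdigest()


# Harmless placeholder bytes standing in for a previously catalogued file.
known_sample = b"EXAMPLE-PAYLOAD-v1"

# A hash blocklist only contains digests of files seen before.
blocklist = {sha256_hex(known_sample)}

# A "polymorphic" variant: same content plus one padding byte.
variant = known_sample + b"\x00"


def is_blocked(payload: bytes) -> bool:
    """Hash-based check: blocks only exact, byte-for-byte matches."""
    return sha256_hex(payload) in blocklist


print(is_blocked(known_sample))  # True: the exact file is known
print(is_blocked(variant))       # False: one changed byte, new digest
```

A single appended byte yields a different digest, so a defender relying on exact-match hashes never sees the same file twice. This is why the rest of the article focuses on behavior rather than signatures.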

Social Engineering and the Precision of AI

The human element remains the most targeted part of the attack surface. However, identifying a phishing email by poor grammar is no longer a valid strategy. A sophisticated AI cyber security attack creates communications that remain indistinguishable from legitimate business correspondence.

These systems analyze the tone, vocabulary, and sentence structure of a compromised email account. They mimic it perfectly. If an employee typically starts emails with “Hey team,” and signs off with “Best,” the AI replicates that pattern. This level of mimicry destroys the standard “trust your gut” advice often given in security training.

The Effectiveness Gap

The impact of this increased fidelity is measurable. We compared human-drafted phishing attempts against those generated by autonomous systems. The difference in employee susceptibility is alarming.

Traditional security awareness training often relies on identifying grammatical errors or awkward phrasing. This data shows why those methods are obsolete. In controlled studies, an agentic AI cyber attack in which the AI autonomously crafted and adapted the message achieved a 54% click-through rate, nearly five times the rate of human-written attempts. The precision of these systems allows for hyper-personalization at scale, producing emails that are virtually indistinguishable from legitimate correspondence. This efficiency gap suggests that attackers using AI tools can compromise organizations with a fraction of the effort previously required.

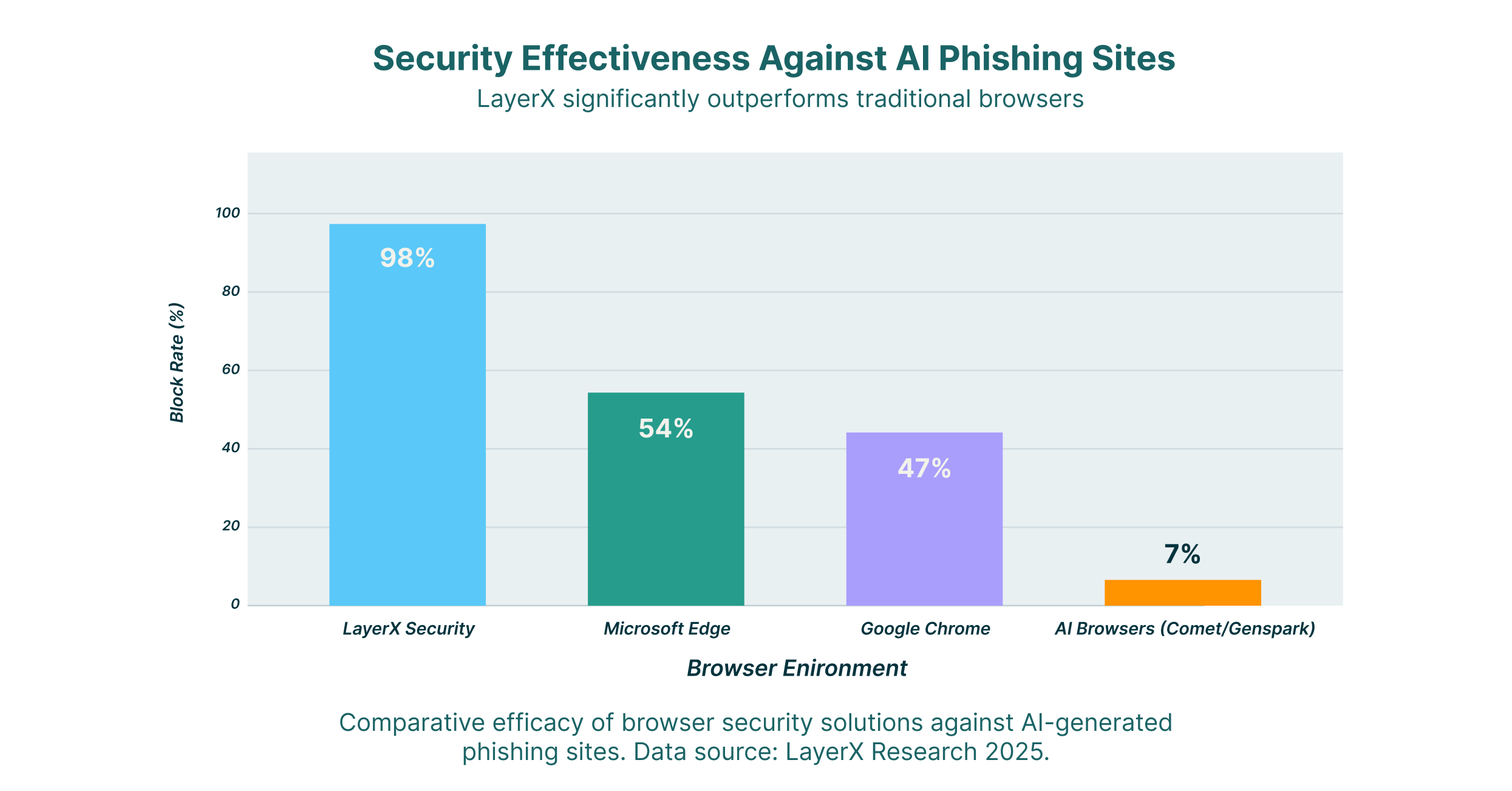

Why Legacy Browsers Cannot Stop an AI Cyber Attack

The browser is the primary interface for modern work. Yet it remains the most neglected component of the security stack. Traditional browsers like Chrome and Edge were designed for a web that no longer exists. They were built for static pages and predictable threats.

In the face of an AI-powered cyber attack, the browser often becomes the point of failure. Phishing sites generated by AI can spin up, harvest credentials, and disappear in milliseconds. This happens faster than a URL filtering database can update. Furthermore, the growth of “Shadow SaaS” means employees constantly paste sensitive data into unapproved GenAI tools. This creates a massive exfiltration risk that standard firewalls cannot see or stop.

A Comparative Failure

Standard browsers fail to detect intent-based threats. This is a critical liability. We compared the efficacy of different browser environments against the latest wave of AI-generated phishing sites.

This comparison exposes a critical vulnerability in the enterprise browser ecosystem. Standard browsers like Edge and Chrome stop roughly half of phishing attempts but struggle to detect the sophisticated obfuscation techniques used in a modern AI cyber attack. Most alarmingly, “AI Browsers” that prioritize agentic features over security blocked fewer than 10% of threats. In contrast, LayerX’s Secure Enterprise Browser capabilities demonstrated a 98% block rate. This disparity underscores that defending against AI-launched attacks requires a dedicated security layer, one that analyzes intent in real time rather than relying on static blocklists that GenAI easily bypasses.

The Mechanics of an AI Cyber Security Attack

Understanding the operational phases of these attacks reveals why they are difficult to interdict. A standard AI cybersecurity attack follows a lifecycle that moves faster than human response teams can manage.

1. Reconnaissance and Profiling

The AI scrapes social media, corporate directories, and news releases. It builds a knowledge graph of the target organization. It maps internal relationships, such as reporting lines, and external dependencies, such as the vendors and SaaS platforms the company uses.

2. Weaponization and Delivery

The attacking AI uses the gathered data to draft highly specific lures. If the target uses Salesforce, the AI generates a clone of the Salesforce login page hosted on a legitimate-looking domain. It then sends an email that references a real, recent project mentioned on the target’s LinkedIn.

3. Execution and Exfiltration

The attack executes once the user engages. Credential theft happens instantly. For malware delivery, the AI might use “drive-by download” techniques that execute code in the browser’s memory without ever touching the disk, which can bypass endpoint detection and response (EDR) agents.

Traditional vs. AI-Powered Vectors

We can look at the differences in methodology to better understand the shift:

| Feature | Traditional Cyber Attack | AI-Powered Cyber Attack |
| --- | --- | --- |
| Scale | Linear (One attacker, one target) | Exponential (One agent, millions of targets) |
| Personalization | Low (Generic templates) | High (Context-aware content) |
| Adaptability | Static (Pre-written code) | Dynamic (Polymorphic code rewriting) |
| Speed | Hours to Days | Milliseconds to Seconds |
| Barrier to Entry | High Technical Skill | Low (Prompt engineering) |

Defending the Browser-to-Cloud Attack Surface

The only way to effectively counter an agentic AI cyber attack is to deploy equally intelligent defenses. These defenses must integrate directly into the workspace. This is where Browser Detection & Response (BDR) becomes the essential control.

LayerX provides this capability by placing a sensor directly within the browser session. Unlike a network proxy that sees only encrypted traffic, LayerX analyzes the webpage’s behavior as it renders. It can detect the subtle anomalies of an AI-generated site that human eyes and network tools miss. Examples include inconsistent code structures or invisible overlay elements.
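
To make the “invisible overlay” anomaly more concrete, here is a rough sketch that flags elements whose inline style makes them both positioned over the page and effectively transparent. It is not LayerX’s implementation. It inspects static markup only, whereas the approach described above observes the page as it renders. The class name `OverlayFinder`, the helper names, and the 0.05 opacity threshold are all assumptions chosen for illustration.

```python
from html.parser import HTMLParser


def parse_style(style: str) -> dict[str, str]:
    """Split an inline style string into lowercase property/value pairs."""
    decls = {}
    for part in style.split(";"):
        name, sep, value = part.partition(":")
        if sep:
            decls[name.strip().lower()] = value.strip().lower()
    return decls


def looks_like_invisible_overlay(decls: dict[str, str]) -> bool:
    """Heuristic (assumed): positioned over the page and near-transparent."""
    positioned = decls.get("position") in {"fixed", "absolute"}
    try:
        opacity = float(decls.get("opacity", "1"))
    except ValueError:
        opacity = 1.0  # unparseable value: treat as visible
    return positioned and opacity <= 0.05


class OverlayFinder(HTMLParser):
    """Collects tag names of elements that match the overlay heuristic."""

    def __init__(self) -> None:
        super().__init__()
        self.findings: list[str] = []

    def handle_starttag(self, tag, attrs):
        style = dict(attrs).get("style") or ""
        if looks_like_invisible_overlay(parse_style(style)):
            self.findings.append(tag)


page = """
<form><input type="password" name="pw"></form>
<div style="position:fixed; top:0; left:0; width:100%; height:100%; opacity:0; z-index:9999"></div>
<div style="position:relative; opacity:1">visible content</div>
"""

finder = OverlayFinder()
finder.feed(page)
print(finder.findings)  # ['div']
```

A production sensor would need computed styles, stylesheet rules, and script-driven changes, none of which this static check sees. The point is only that such anomalies are visible from inside the page and not from the network.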

Neutralizing Shadow SaaS

A major component of the AI threat involves employees unknowingly exposing data. When a user pastes proprietary code into ChatGPT or a similar tool to “debug” it, they effectively leak IP. LayerX enforces granular policies. These prevent the pasting of sensitive data into unauthorized GenAI apps while still allowing the tool to be used for benign tasks. This capability neutralizes the internal side of the AI threat, where the cause is negligence rather than malice.
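
A granular paste policy of the kind described above can be sketched as a small decision function. This is an illustration under stated assumptions, not LayerX’s policy engine. The host lists, the pattern set, and the function name `evaluate_paste` are hypothetical, and the three regular expressions are a tiny sample of what a real data-loss-prevention rule set would contain.

```python
import re
from urllib.parse import urlparse

# Hypothetical policy data: which hosts count as GenAI apps, and which are approved.
GENAI_HOSTS = {"chatgpt.com", "genai.internal.example.com"}
APPROVED_GENAI_HOSTS = {"genai.internal.example.com"}

# A small, illustrative set of sensitive-data patterns.
SENSITIVE_PATTERNS = {
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def evaluate_paste(destination_url: str, text: str) -> tuple[str, list[str]]:
    """Return ("allow" | "block", matched pattern names) for a paste event."""
    host = urlparse(destination_url).hostname or ""
    # Only unapproved GenAI destinations are subject to content inspection.
    if host not in GENAI_HOSTS or host in APPROVED_GENAI_HOSTS:
        return "allow", []
    hits = [name for name, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(text)]
    return ("block", hits) if hits else ("allow", [])


snippet = 'aws_key = "AKIAIOSFODNN7EXAMPLE"'  # documentation-style example key
print(evaluate_paste("https://chatgpt.com/", snippet))
print(evaluate_paste("https://chatgpt.com/", "Summarize this public blog post"))
print(evaluate_paste("https://genai.internal.example.com/chat", snippet))
```

The design choice worth noting is that the tool itself stays usable: benign text passes through, and only pastes that match a sensitive pattern on an unapproved destination are stopped.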

Strategic Response for Security Leaders

CISOs and security architects operating in 2026 must shift strategy. The focus must move from “prevention at the perimeter” to “protection at the point of interaction.”

- Implement Browser-Level Visibility: You cannot stop what you cannot see. Gaining telemetry into browser events is critical for identifying the early signs of an AI-powered cyber attack.

- Adopt Intent-Based Detection: Move away from reputation-based blocking. A domain purchased five minutes ago has no reputation. However, its behavior reveals its intent. A page that requests credentials or analyzes mouse movements gives clear indicators.

- Isolate High-Risk Sessions: Use Zero Trust Browser Isolation to ensure safety. Even if a user clicks a malicious link, the code executes in a disposable container rather than on the endpoint.

- Continuous Verification: Identity is the new perimeter. Continuous authentication checks analyze user behavior. They can detect when a session has been hijacked by a bot or when a user is being coerced by a deepfake.
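
The intent-based detection item above can be illustrated with a simple scoring sketch that looks at what a page does rather than what its domain’s reputation says. Everything here is an assumption made for illustration: the `PageSignals` fields, the weights, the threshold, and the `BRAND_DOMAINS` mapping are hypothetical and do not describe any product’s detection model.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical mapping from a brand to domains that legitimately serve it.
BRAND_DOMAINS = {"salesforce": {"salesforce.com", "force.com"}}


@dataclass
class PageSignals:
    registered_domain: str
    domain_age_days: float
    has_password_field: bool
    claimed_brand: Optional[str]  # brand the page presents itself as, if any
    tracks_mouse_movement: bool


def intent_score(s: PageSignals) -> int:
    """Sum illustrative weights for behaviors that suggest credential theft."""
    score = 0
    if s.has_password_field:
        score += 2  # the page requests credentials
    if s.claimed_brand:
        legit = BRAND_DOMAINS.get(s.claimed_brand.lower())
        if legit and s.registered_domain.lower() not in legit:
            score += 3  # known brand shown on a domain that is not the brand's
    if s.domain_age_days < 30:
        score += 1  # newly registered: no reputation either way
    if s.tracks_mouse_movement:
        score += 1
    return score


def verdict(s: PageSignals, threshold: int = 5) -> str:
    return "block" if intent_score(s) >= threshold else "allow"


fresh_clone = PageSignals("salesforce-login.example", 0.003, True, "salesforce", True)
real_login = PageSignals("salesforce.com", 9000, True, "salesforce", False)
new_blog = PageSignals("newblog.example", 1, False, None, False)

print(verdict(fresh_clone))  # block
print(verdict(real_login))   # allow
print(verdict(new_blog))     # allow
```

Domain age contributes only a small weight, so a brand-new but harmless site is not penalized for lacking reputation. The decision is driven by the combination of credential collection and brand mismatch.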

The AI cybersecurity attack represents a permanent change in the threat environment. The tools available to attackers are powerful, accessible, and evolving daily. However, enterprises can reassert control by securing the browser. This is the primary gateway for these attacks. LayerX’s BDR platform turns the browser from the organization’s biggest vulnerability into its most effective line of defense. This ensures that the business can continue uninterrupted regardless of the sophistication of the threat.