The rapid integration of Artificial Intelligence into enterprise environments has introduced a complex variable into the security equation. Organizations are racing to adopt GenAI to accelerate development and operations. This rush often overlooks the critical infrastructure that connects these models to sensitive data.

The Application Programming Interface (API) serves as the bridge between AI tools and corporate assets. That bridge is also a gap in coverage, and API security for AI tools has become a defining challenge for modern security teams. Traditional security models assume predictable clients and struggle to contain the non-deterministic behavior of AI models.

Standard applications follow predictable traffic patterns. AI tools do not. They generate novel API requests and access data in unforeseen ways. They can even hallucinate commands that bypass established logic checks. This article examines the specific risks associated with AI workflow integrations. It also outlines how a Browser Detection and Response (BDR) strategy provides the necessary visibility to secure this new frontier.

The Intersection of AI Agents and API Security

In 2025, the distinction between a user and software is blurring. AI agents are autonomous software capable of executing multi-step tasks. They now act as highly privileged users within enterprise networks.

These agents rely heavily on APIs to retrieve context and execute actions. They also store results via these same channels. However, the security protocols governing these interactions often lag behind the capabilities of the agents themselves.

An employee might ask an AI assistant to summarize sales emails. The agent triggers a series of API calls to the email provider and the CRM. Each call is a potential vector for exploitation if the API security controls are not context-aware.

The Open Worldwide Application Security Project (OWASP) has identified a specific risk here. Its Top 10 for Large Language Model Applications notes that agents with broad API permissions can be manipulated into performing unauthorized actions, a risk it calls “Excessive Agency.”
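One way to limit Excessive Agency is to gate every agent tool call behind an explicit scope check. The sketch below is illustrative only and is not taken from OWASP or LayerX; the tool names, scope strings, `AgentSession` type, and `authorize_tool_call` helper are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical mapping of agent tools to the API scope each one requires.
TOOL_SCOPES = {
    "read_email": "mail.read",
    "send_email": "mail.send",
    "update_crm_record": "crm.write",
}


@dataclass(frozen=True)
class AgentSession:
    agent_id: str
    granted_scopes: frozenset


def authorize_tool_call(session: AgentSession, tool: str) -> bool:
    """Allow a tool call only if the tool is known and its scope was granted."""
    required = TOOL_SCOPES.get(tool)
    if required is None:
        # Unknown tools are denied by default rather than passed through.
        return False
    return required in session.granted_scopes


# An email-summarizing assistant only needs read access.
summarizer = AgentSession("email-summarizer", frozenset({"mail.read"}))
print(authorize_tool_call(summarizer, "read_email"))  # True
print(authorize_tool_call(summarizer, "send_email"))  # False
```

Even if a crafted email convinces the model to attempt a send, the call is refused because the session was never granted that scope.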

The Problem of “Shadow AI” Connections

Shadow SaaS plagued the early cloud era. Shadow AI is now a primary concern for the same reasons. Employees frequently connect approved corporate APIs to unapproved third-party AI tools to expedite tasks.

This creates a hidden integration layer. Sensitive corporate data flows into public AI models without oversight. LayerX’s research into Shadow SaaS ecosystems highlights that a significant percentage of these connections occur directly through the browser. This bypasses network firewalls entirely.

Unmasking the Risks: Why Traditional Gateways Fail

The volume of API traffic generated by AI tools is growing rapidly, and the number of associated vulnerabilities is rising with it. Security teams can no longer rely on rate limiting and basic authentication alone to protect their assets.

API security for AI tools requires a shift in perspective. Defenders must move from volume-based monitoring to behavioral analysis. A valid token alone is no longer proof of authorized intent.
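The difference between checking a token and checking behavior can be shown with a very small baseline. This is a simplified sketch, assuming each client's historical calls are available; the `EndpointBaseline` class and the client and path names are hypothetical, and a production system would use far richer features than a set of previously seen endpoints.

```python
from collections import defaultdict


class EndpointBaseline:
    """Tracks which (method, path) pairs each client has used before."""

    def __init__(self):
        self._seen = defaultdict(set)

    def learn(self, client_id, method, path):
        self._seen[client_id].add((method, path))

    def is_anomalous(self, client_id, method, path):
        # A call is flagged when this client has never made it before,
        # regardless of whether its token is valid.
        return (method, path) not in self._seen.get(client_id, set())


baseline = EndpointBaseline()
baseline.learn("sales-assistant", "GET", "/crm/contacts")
baseline.learn("sales-assistant", "GET", "/mail/messages")

print(baseline.is_anomalous("sales-assistant", "GET", "/crm/contacts"))     # False
print(baseline.is_anomalous("sales-assistant", "DELETE", "/crm/contacts"))  # True
```

Both requests would pass authentication with the same token; only the second departs from what the client normally does.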

Vulnerability Growth Trends

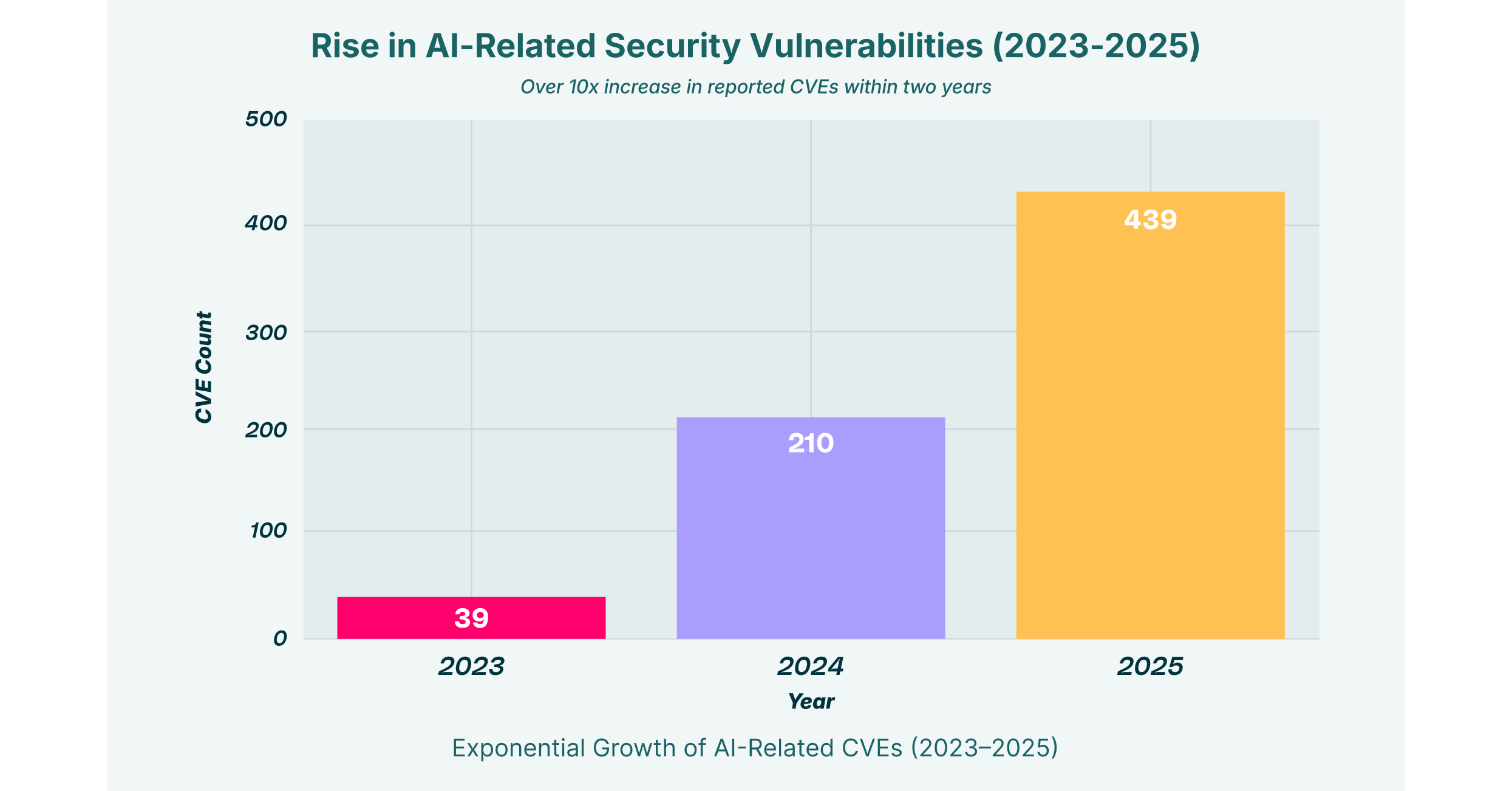

The explosion of AI adoption has correlated with a sharp rise in API-related Common Vulnerabilities and Exposures (CVEs). Attackers are actively fuzzing AI APIs. They are looking for logic gaps where AI security tools fail to distinguish between a legitimate query and a malicious injection attempt.

The attack surface is expanding rapidly, as illustrated above. Several primary drivers are contributing to this trend.

- Prompt Injection leading to API Manipulation: Attackers craft inputs that trick AI models into generating malicious API calls.

- Business Logic Abuse: AI agents lack human intuition. They can be coerced into executing API sequences that are technically valid but operationally harmful.

- Data Exfiltration: Malicious actors use AI workflow automation to siphon data through legitimate API channels.

Critical API Vulnerabilities in the AI Era

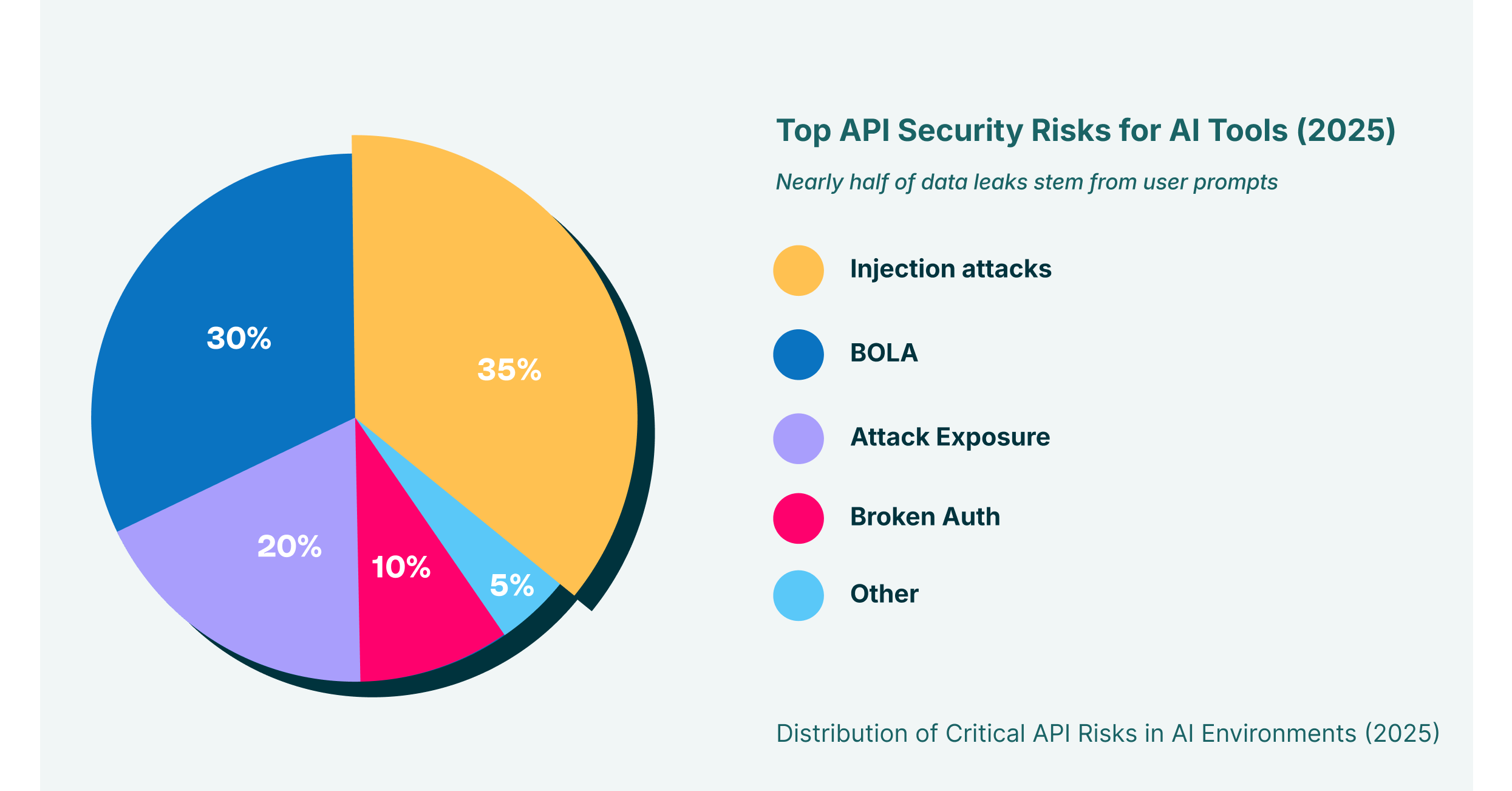

To secure APIs used by AI tools effectively, organizations must understand the specific attack vectors. Unlike attacks on traditional web applications, AI-driven API attacks often target logic and authorization rather than classic flaws such as SQL injection.

Injection Attacks and BOLA

Injection attacks in this context differ from traditional methods. The “injection” is often natural language passed to an AI. The model then interprets it as a command.

If the AI has API access, this prompt injection becomes an API exploit. This is often coupled with Broken Object Level Authorization (BOLA). BOLA occurs when an API fails to verify if the user has permission to access a specific object.

The risk becomes catastrophic when the two are combined. An AI agent may be legitimately authenticated to the API, yet a manipulated prompt can lead it to retrieve data belonging to a different tenant or user.
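The defense against BOLA is to check ownership on every object lookup, not only at login. Below is a minimal sketch with a hypothetical in-memory store; the `Record` type, `RECORDS` data, and `get_record` function are illustrative names, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str
    tenant_id: str
    body: str


# Hypothetical in-memory store standing in for a real database.
RECORDS = {
    "r1": Record("r1", "tenant-a", "Q3 pipeline"),
    "r2": Record("r2", "tenant-b", "Payroll export"),
}


class Forbidden(Exception):
    """Raised when the caller may not access the requested object."""


def get_record(caller_tenant_id: str, record_id: str) -> Record:
    """Return a record only if it belongs to the caller's tenant."""
    record = RECORDS.get(record_id)
    # Treat "missing" and "not yours" identically so IDs cannot be probed.
    if record is None or record.tenant_id != caller_tenant_id:
        raise Forbidden(record_id)
    return record


print(get_record("tenant-a", "r1").body)  # Q3 pipeline
```

Calling `get_record("tenant-a", "r2")` raises `Forbidden`, even though the caller is authenticated, because the check is made against the object itself.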

GenAI-Powered Exfiltration

One of the most insidious risks is GenAI-powered exfiltration. An insider or a compromised account uses an AI tool to reformat or summarize sensitive data before extracting it.

Because the AI has transformed the data, it no longer matches the patterns that traditional Data Loss Prevention (DLP) tools look for, and regex-based rules often fail to recognize it. This underscores the need for security controls that operate at the point of interaction.
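A short demonstration makes the limitation concrete. The pattern below is a common style of rule for US Social Security numbers; the sample strings are invented for illustration, and the "transformed" text is a hypothetical example of what a model might return when asked to rewrite the number in words.

```python
import re

# A typical pattern-based rule: SSNs in NNN-NN-NNNN form.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

raw_record = "Employee SSN: 123-45-6789"
# Hypothetical model output after a "rewrite this in words" prompt.
transformed = "Employee SSN: one two three, four five, six seven eight nine"

print(bool(SSN_PATTERN.search(raw_record)))   # True
print(bool(SSN_PATTERN.search(transformed)))  # False
```

The information content is identical in both strings, but only the first one trips the rule.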

Strategic Defense: Browser Detection & Response (BDR)

Securing APIs for AI tools solely at the network level is insufficient. Much of the interaction happens client-side within the browser. The browser is the workspace where employees interact with SaaS platforms and AI consoles.

LayerX’s approach to Browser Detection and Response (BDR) places the control point directly where the user initiates the request. Because the control sits in the browser, it can observe the request and its context before the traffic is encrypted in transit.

Visibility into the AI Workflow

A BDR solution provides granular visibility into the AI workflow that network proxies miss. It analyzes the Document Object Model (DOM) and user interactions in real time.

- Detect Malicious Extensions: Identify extensions that inject code into AI interfaces or hijack API sessions.

- Monitor Prompt Context: Analyze the context of data being pasted into AI prompts to prevent leakage.

- Validate Agent Actions: Correlate user intent with API activity to identify when an automated agent behaves anomalously.
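The last item, correlating user intent with API activity, can be approximated by checking whether each API call follows a recent user interaction. This is a simplified sketch and not a description of how LayerX implements it; the `unattended_calls` function, the timestamps, and the five-second window are assumptions chosen for illustration.

```python
from bisect import bisect_right


def unattended_calls(user_event_times, api_call_times, window_seconds=5.0):
    """Return API call timestamps with no user event in the preceding window."""
    events = sorted(user_event_times)
    flagged = []
    for call_time in sorted(api_call_times):
        # Index of the latest user event at or before this call.
        i = bisect_right(events, call_time) - 1
        if i < 0 or call_time - events[i] > window_seconds:
            flagged.append(call_time)
    return flagged


clicks = [10.0, 42.0]            # seconds at which the user interacted
calls = [10.4, 11.2, 30.0, 42.1]  # seconds at which API calls were observed
print(unattended_calls(clicks, calls))  # [30.0]
```

The call at 30.0 seconds has no nearby user action, so it is a candidate for review as agent-initiated or extension-initiated activity.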

Enforcing Zero-Trust Browser Isolation

Organizations should apply zero-trust browser isolation principles to mitigate the risks of compromised AI sessions. This helps contain the damage even if an AI tool’s API key is compromised.

The browser extension acts as a last-mile enforcement point. It validates that every API call generated by the AI models aligns with corporate policy. This prevents lateral movement from a compromised AI tool to other internal applications.

Future-Proofing AI Integration

The dependency on API security will only deepen. Organizations are moving toward agentic workflows where AI tools continuously interact with enterprise data.

Security leaders must retire the idea that AI security tools are separate from general infrastructure security. The convergence is here. Protecting this environment requires a strategy that acknowledges the browser as the primary operating system.

Key Takeaways for CISOs

- Audit your API exposure: Identify which APIs are accessible to your AI tools. Apply strict least-privilege access controls.

- Deploy BDR: Implement a browser security solution to gain visibility into the “last mile” of AI workflow execution.

- Monitor for anomalies: Shift focus from signature-based detection to behavioral analysis. This helps catch logic abuses and indirect injections.
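The first takeaway, auditing API exposure, can start with a simple comparison of the scopes an AI tool has been granted against the scopes its observed calls actually need. The sketch below assumes such call logs exist; the `unused_scopes` helper, endpoint strings, and scope names are hypothetical.

```python
def unused_scopes(granted, observed_calls, scope_for_endpoint):
    """Return granted scopes that no observed call actually required."""
    used = {
        scope_for_endpoint[endpoint]
        for endpoint in observed_calls
        if endpoint in scope_for_endpoint
    }
    return sorted(set(granted) - used)


# Hypothetical mapping from endpoint to the scope it requires.
scope_for_endpoint = {
    "GET /mail/messages": "mail.read",
    "POST /mail/send": "mail.send",
    "PATCH /crm/contacts": "crm.write",
}
granted = ["mail.read", "mail.send", "crm.write"]
observed = ["GET /mail/messages", "GET /mail/messages"]

print(unused_scopes(granted, observed, scope_for_endpoint))
# ['crm.write', 'mail.send']
```

Scopes that appear in the result are candidates for revocation under a least-privilege policy.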

The age of AI demands an adaptive security architecture. It must be as intelligent as the tools it seeks to protect. Enterprises can safely navigate this new era by focusing on the intersection of browser security and API governance.