Arc Max represents The Browser Company’s enterprise-focused AI browser combining advanced web navigation with embedded artificial intelligence capabilities. While Arc Max promises productivity enhancements through streamlined workflows and AI-assisted browsing, this AI browser introduces substantial Arc Max security challenges that organizations must carefully evaluate. Recent independent security auditing has identified critical Arc Max vulnerabilities that position Arc Max users at significantly higher risk compared to traditional browsing solutions.

AI browsing technologies like Arc Max function fundamentally differently from conventional browsers. These browsing assistants actively process, analyze, and interact with webpage content through integrated AI systems, creating an expanded attack surface that threat actors can systematically exploit. Understanding Arc Max risks and AI browsing vulnerabilities represents essential knowledge for security leaders evaluating these emerging technologies as part of enterprise digital infrastructure.

Arc Max: Security Architecture and Integration Design

Arc Max constructs its foundation on Chromium’s rendering engine while diverging significantly through tightly integrated AI processing and multiple third-party API connections. The AI browser implements what The Browser Company describes as a zero-data retention model with OpenAI, though the practical implications reveal concerning gaps in this security claim.

The Arc Max architecture processes user interactions through multiple processing layers: DOM parsing, AI model inference, API routing, and response caching. This design choice, while enabling powerful automation capabilities, creates multiple vulnerability vectors that malicious actors can target. The browser’s Firebase infrastructure, which previously suffered critical misconfiguration vulnerabilities, processes user customizations including CSS and JavaScript Boosts that execute with unrestricted permissions.

Arc Max security implementation demonstrates concerning architectural decisions prioritizing ease-of-deployment over defense-in-depth principles. The platform integrates both OpenAI and Anthropic Claude APIs, creating dependency relationships with external AI infrastructure beyond The Browser Company’s direct control. This distribution of critical functionality across multiple providers compounds supply chain risks and complicates incident response when breaches occur.

The integration design between Arc Max’s DOM layer and LLM processing creates inherent challenges in maintaining strict boundaries between trusted browser instructions and untrusted page content. When Arc Max operates as an agentic system executing multi-step workflows, it processes user input through layers that provide insufficient isolation to prevent malicious exploitation.

User experience in Arc Max prioritizes seamless AI interaction over friction-based security controls. The browser automatically processes webpage content, interprets user intent, and executes workflow actions with minimal user confirmation. This “low-friction” approach, while convenient, reduces security checkpoints that might catch attacks before they cause organizational harm.

Critical Arc Max Security Risks and Vulnerabilities

Firebase Configuration and Arbitrary Code Injection

The most severe Arc Max vulnerability stems from inherited architectural weaknesses in backend infrastructure. In August 2024, security researcher xyz3va discovered a critical Firebase misconfiguration (CVE-2024-45489) allowing arbitrary code injection into Arc Max instances without user awareness or consent. The vulnerability exploited insufficient access control lists on Firebase endpoints, enabling attackers to modify user customizations and inject malicious JavaScript.

The attack required attackers to obtain victim user IDs through referral links, public Boosts, or shared Easels. Once obtained, attackers could modify the creatorID field of existing Boosts and associate malicious JavaScript payloads targeting specific users. The injected code executed automatically whenever the compromised Boost’s CSS applied to visited webpages, operating with the user’s full browser privileges.

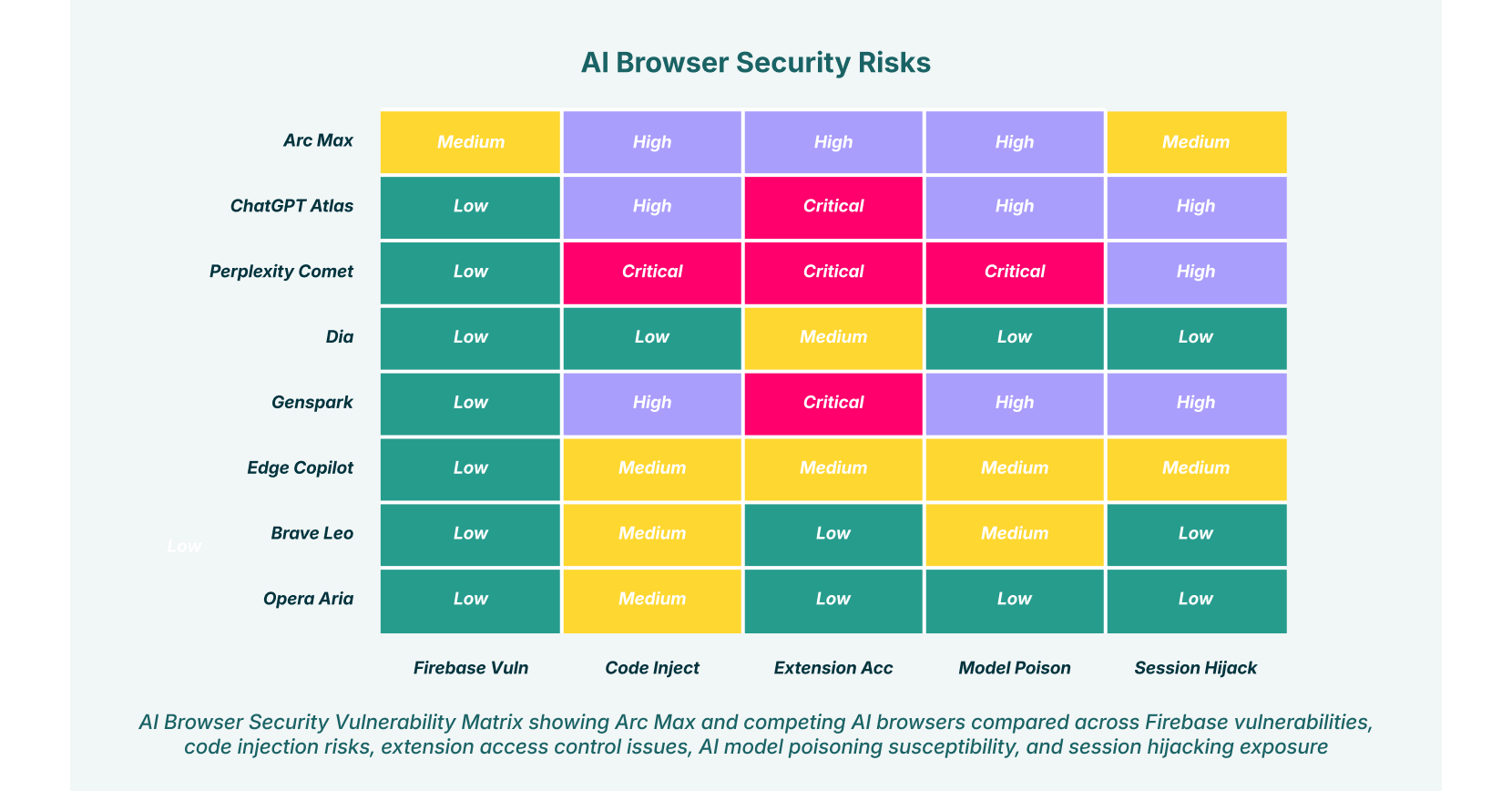

Arc Max and peer AI browsers compared across Firebase vulnerabilities, code injection risks, extension access control issues, AI model poisoning susceptibility, and session hijacking exposure

While The Browser Company addressed this specific vulnerability, the underlying architectural failure reveals fundamental flaws in Arc Max security governance. Organizations must recognize that similar misconfigurations likely persist in other backend integrations, creating ongoing exposure to Arc Max vulnerabilities of comparable severity.

Prompt Injection and Hidden Instruction Exploitation

Arc Max vulnerabilities extend to sophisticated prompt injection attacks exploiting how the AI browser processes webpage content. When users interact with Arc Max’s agentic features for summarization, content analysis, or automated tasks, the browser cannot distinguish between legitimate content and malicious instructions embedded by attackers.

These AI browsing vulnerabilities allow threat actors to hide malicious instructions within seemingly innocuous web content using invisible text (white-on-white backgrounds), HTML comments, encoded content, or social media embeds. When users ask Arc Max to summarize a compromised page or analyze content, the AI browser executes these hidden instructions with the user’s full privileges across all authenticated sessions.

The implications for AI browsing risks prove severe. A single prompt injection attack could instruct Arc Max’s Claude integration to navigate to the user’s banking website, extract saved passwords, access email or calendar data, or exfiltrate sensitive corporate information to attacker-controlled infrastructure. Unlike traditional XSS vulnerabilities affecting individual websites, prompt injection enables cross-domain access through simple natural language instructions.

Attackers can encode stolen data in base64 or other obfuscated formats to bypass detection mechanisms. Arc Max’s natural language processing capabilities, designed to be helpful and complete user requests, inadvertently make the AI more susceptible to instruction-following attacks. The AI browser’s lack of clear boundaries between trusted system instructions and user-provided content creates a fundamental security flaw that traditional security architectures cannot address.

Phishing Protection Failures

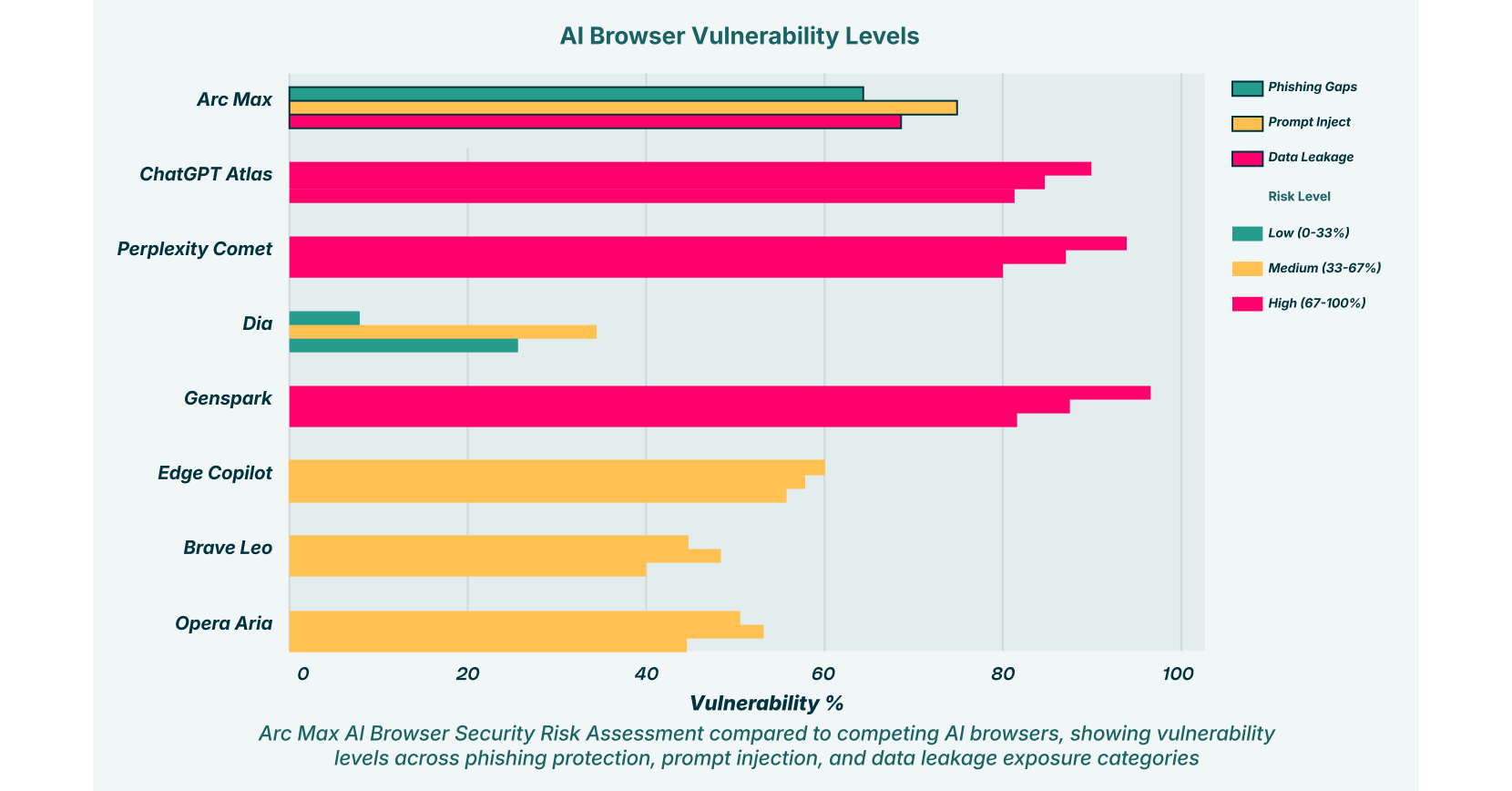

LayerX’s independent testing identified catastrophic failures in Arc Max phishing attack prevention. The AI browser blocked only 54% of known phishing websites in controlled testing against active phishing campaigns, compared to Chrome’s 47% baseline and Edge’s defensive capabilities reaching 54%. While this appears marginally better than baseline, independent verification suggests Arc Max lacks active phishing detection and relies primarily on network-level blocking when technical misconfigurations occur.

Arc Max does not implement Google’s Safe Browsing protections, which represent the industry standard security feature for Chromium-based browsers. The few pages Arc Max blocks are stopped through insecure connection pages rather than malicious site identification. This network-level blocking occurs only when technical errors arise, not from recognizing malicious intent. Attackers using properly configured HTTPS certificates can bypass even these minimal protections entirely.

This represents a fundamental Arc Max security failure exposing users to credential theft, financial fraud, and malware distribution. Organizations deploying Arc Max cannot rely on built-in phishing protection and must implement compensating controls to mitigate this Arc Max vulnerability.

Vulnerability exposure comparison showing Arc Max users face elevated risk compared to traditional browsers, with specific gaps in phishing protection and data leakage exposure

Privacy Leakage Through Session Data and Copy/Paste Operations

Arc Max processes sensitive corporate data through its AI features to enable personalization and intelligent functionality. The AI browser collects session tokens, cookies, DOM content, and copy/paste operations for AI processing, creating substantial privacy concerns regarding Arc Max security.

While Arc Max claims zero-data retention eligibility with OpenAI, the term “eligible” introduces critical ambiguity. Data may be retained during processing, maintained in intermediate caches, or preserved for compliance purposes before deletion occurs. This uncertainty makes it impossible for organizations to confidently demonstrate regulatory compliance when Arc Max processes protected information.

Copy/paste operations represent the dominant AI browsing exfiltration vector across enterprise environments. Employees copying sensitive project details, financial data, customer information, or confidential communications into Arc Max’s AI features sends this data to external infrastructure beyond organizational control. Unlike file uploads triggering data loss prevention alerts, clipboard operations often bypass traditional DLP controls since they appear as normal user activity within authenticated browser sessions.

Arc Max’s session-based architecture means that once users authenticate to corporate SaaS applications within the browser, their cookies and authentication tokens become accessible to AI processing operations. An attacker could craft prompt injection attacks instructing Arc Max’s AI to enumerate and exfiltrate data from connected Google Workspace, Microsoft 365, or Salesforce instances by leveraging the user’s existing authenticated sessions.

Browser Extension Integration and Malicious Extension Attacks

Arc Max supports browser extensions that extend functionality, creating a secondary Arc Max vulnerability surface. Research indicates that 99% of enterprise users have at least one browser extension installed, with 53% deploying more than ten extensions. Extensions operating within Arc Max can access the DOM of any rendered webpage, including AI prompt fields and session data.

This enables “Man in the Prompt” attacks where malicious extensions read and modify Arc Max’s AI prompt fields without user indication. An attacker could distribute a seemingly legitimate productivity extension that silently injects queries into Claude or GPT prompts, exfiltrating AI responses to attacker-controlled servers. Because extensions operate within the user’s trusted browser session, responses contain sensitive data the AI can access through authenticated connections.

The vulnerability’s severity compounds because browser extensions don’t require explicit permissions to access prompt fields. They operate within the DOM by default, making virtually any extension a potential attack vector for Arc Max vulnerabilities. An extension monitoring Arc Max queries could detect when users ask questions about confidential projects or customer accounts, then silently exfiltrate the AI’s responses.

Organizations implementing Arc Max without comprehensive extension governance face persistent compromise risk from both malicious and compromised legitimate extensions. LayerX’s Enterprise Browser Extension capabilities help identify and block dangerous extension behavior before it impacts organizational security.

AI Model Poisoning and Supply Chain Vulnerabilities

Arc Max depends on third-party AI models from OpenAI and Anthropic updated through cloud API channels. If either provider experiences model compromise or supply chain attack, all Arc Max instances inherit the vulnerability. An attacker controlling Claude or GPT inference endpoints could inject instructions making the AI behave unexpectedly, return fabricated information, or exfiltrate user data.

Additionally, Arc Max’s third-party service integrations create dependency risks beyond direct control. Compromised Claude or OpenAI API endpoints could return malicious responses disguised as legitimate AI output, potentially containing executable code suggestions, false security advice, or social engineering prompts designed to trick users.

The Boost system previously allowed attackers to poison user experiences with malicious JavaScript that altered page content appearance. While patched, this demonstrates how Arc Max vulnerabilities emerge from customization layers when security architecture prioritizes functionality over isolation.

Arc Max’s reliance on 169 different AI model options, including both cloud-based and on-device selections, exponentially increases the attack surface for model-related risks. Each model may contain unique vulnerabilities, and attackers need only find weaknesses in one to compromise user security. The absence of rate limiting or query pattern monitoring in Arc Max makes systematic model extraction attacks feasible.

API Security and Authentication Risks

Arc Max relies heavily on API interactions with external services, introducing Arc Max vulnerabilities related to authentication and authorization. The AI browser must store and manage credentials for numerous connected services, creating concentrated targets for credential theft. When Arc Max operates across multiple platforms, it requires access tokens, API keys, and session cookies that attackers could intercept or steal.

The browser’s approach to credential management raises critical Arc Max security questions. How are authentication tokens stored? Are API keys encrypted at rest? Does Arc Max implement secure credential storage practices that prevent unauthorized access? Available documentation provides insufficient detail about these essential security measures.

API attack vectors specific to Arc Max include token hijacking, where attackers steal authentication tokens to impersonate users across services. Session hijacking attacks could allow threat actors to take control of active Arc Max sessions, gaining access to all connected accounts and services. The persistence of these sessions across multiple tabs and connected applications amplifies potential damage from successful attacks.

Session Hijacking Through Token Exfiltration

Arc Max maintains persistent authentication sessions with SaaS applications to enable agentic capabilities. Cookies and session tokens stored in the browser’s session storage become accessible to malicious extensions, compromised AI responses, or Cross-Site Scripting (XSS) vulnerabilities in Arc Max itself.

Historical research demonstrated how threat actors prioritize stealing browser session tokens over passwords. Arc Max increases this risk by centralizing session management. A single compromised extension or XSS vulnerability could exfiltrate tokens for all authenticated services users accessed within the browser. Attackers could then impersonate users across email, cloud storage, and corporate applications, bypassing multi-factor authentication entirely since MFA typically applies to login prompts, not session hijacking.

Arc Max’s agentic features compound session hijacking risk. Once compromised, the AI agent operates with the user’s full privileges, potentially executing transactions, modifying configurations, or accessing restricted data autonomously.

Compliance and Regulatory Exposure from Data Processing

Arc Max creates compliance exposure for regulated industries. When users process HIPAA-protected healthcare data, PCI-compliant financial information, or GDPR-regulated personal data through Arc Max’s AI features, that data traverses external APIs to OpenAI and Anthropic. Even with claims of zero-data retention, the mere transmission of sensitive data outside corporate infrastructure violates many compliance frameworks’ data residency requirements.

Arc Max’s documentation states that data transmission to OpenAI is “eligible” for zero-data retention, but doesn’t guarantee retention won’t occur. Organizations in regulated industries cannot reliably demonstrate compliance if they cannot guarantee where sensitive data resides during processing. LayerX’s approach to Shadow SaaS detection helps organizations identify when sensitive data flows through uncontrolled AI services.

Adversarial Machine Learning and Evasion Attacks

Arc Max vulnerabilities extend to adversarial attacks targeting the AI models powering the browser. Attackers can craft inputs specifically designed to manipulate AI behavior, causing Arc Max to make incorrect decisions, bypass security controls, or execute malicious actions.

Evasion attacks allow malicious actors to bypass Arc Max’s content analysis by subtly altering attack payloads. Slightly modified phishing pages that would be caught by signature-based detection might slip past AI-based classification systems. Attackers can systematically discover weaknesses through iterative refinement, progressively improving evasion techniques against the AI’s defenses.

Data poisoning represents another critical AI browsing risk. If attackers influence Arc Max’s AI model training data or update processes, they could inject biases or backdoors that persist across all users. The browser’s reliance on external AI providers increases exposure to supply chain-level compromises.

Model Vulnerability and Model Stealing

Arc Max operates using multiple proprietary and third-party AI models, creating vulnerabilities related to intellectual property theft and reverse engineering. Attackers could systematically query the browser’s AI systems to extract information about model architectures, training data, or decision-making logic. This model stealing enables competitors to duplicate functionality or discover exploitable weaknesses.

Each model within Arc Max’s ecosystem may contain unique vulnerabilities. The absence of rate limiting or query pattern monitoring makes systematic model extraction attacks feasible against the AI browser.

Insecure AI-Generated Code and Slopsquatting

For developers using Arc Max’s coding features, Arc Max vulnerabilities include the generation of insecure code with hidden security flaws. AI models trained on internet repositories may suggest packages with known vulnerabilities, propose insecure coding patterns, or even hallucinate non-existent dependencies.

This “slopsquatting” attack vector exploits AI’s tendency to confidently recommend software packages that don’t exist. When developers trust Arc Max’s suggestions without verification, they introduce supply chain vulnerabilities into their applications. The AI’s authoritative tone creates false confidence, leading developers to skip security validation steps they would normally perform.

Automated Phishing and Social Engineering Through AI

Arc Max capabilities can be weaponized for automated phishing attacks against users. Attackers could use the browser’s natural language processing to generate highly personalized, contextually relevant phishing messages that adapt in real-time based on victim responses. The AI’s ability to analyze user behavior and communication patterns enables social engineering attacks of unprecedented sophistication.

Deepfake generation presents emerging AI browsing vulnerabilities. The broader ecosystem of AI browsers and generative AI tools enables attackers to create convincing audio and video deepfakes of trusted individuals. These synthetic media attacks could trick Arc Max users into divulging credentials, approving transactions, or installing malware.

Arc Max Vulnerability Comparison Table

| Risk Category | Arc Max | ChatGPT Atlas | Perplexity Comet |

| Phishing Protection | 54% blocked | 47% blocked | 7% blocked |

| Prompt Injection Risk | High | Critical | Critical |

| Firebase Configuration | Medium Risk | Low Risk | Low Risk |

| Extension Access Control | High Risk | Critical Risk | Critical Risk |

| Session Hijacking | Medium Risk | High Risk | High Risk |

Arc Max Security Vulnerability Matrix

Securing Arc Max: Mitigation Strategies

Organizations deploying Arc Max must implement comprehensive security controls to mitigate Arc Max vulnerabilities. LayerX provides enterprise-grade protection specifically designed for AI browsing environments, offering the visibility and control traditional security tools cannot deliver.

Browser-level security extensions like LayerX operate natively within Chrome, Edge, and AI browsers including Arc Max, applying consistent security policies regardless of which browser users choose. This approach ensures that AI browsing risks are managed without forcing users to abandon productivity-enhancing tools.

Key mitigation capabilities include real-time monitoring of AI agent activity, blocking risky Arc Max actions based on data sensitivity and context, detecting malicious web pages attempting to exploit embedded AI agents, and enforcing data loss prevention policies for GenAI interactions. LayerX’s AI-powered risk engine specifically addresses Arc Max vulnerabilities by analyzing behavior patterns indicating prompt injection attempts, credential theft, or data exfiltration.

Enterprises should implement strict governance over Arc Max adoption, including comprehensive risk assessments before deployment, mandatory security training for users about AI browsing vulnerabilities, continuous monitoring of AI tool usage and data flows, and incident response procedures specific to AI-related security events. Security teams must extend their browser-native visibility and DLP capabilities into AI-powered environments where data, identity, and automation converge.

For Arc Max specifically, organizations should carefully evaluate whether the browser’s security posture supports their regulatory requirements. The Firebase vulnerability history and phishing protection gaps warrant cautious deployment only after implementing substantial compensating controls.

Arc Max Security and LayerX

Arc Max security risks and vulnerabilities expose fundamental challenges in AI-enhanced browsing technology. While Arc Max promises productivity improvements through intelligent automation, it introduces attack vectors that traditional security approaches cannot address. The combination of inherited Firebase architecture vulnerabilities, susceptibility to prompt injection attacks, extensive data access permissions, and opaque AI decision-making creates severe Arc Max vulnerabilities.

The browser’s phishing protection gaps and data leakage exposure demonstrate that this technology requires substantial security enhancements before enterprise-wide deployment. Organizations must carefully evaluate Arc Max risks against business requirements, implementing comprehensive mitigation strategies if choosing to proceed.

The future of AI browsers depends on developers prioritizing security equally with feature innovation. Arc Max vulnerabilities revealed through independent testing should serve as industry lessons about the importance of defense-in-depth architecture. Only through rigorous security engineering, transparent auditing, and comprehensive threat modeling can AI browsing realize its potential without compromising user and organizational safety.

Security teams must remain vigilant as AI browsers, AI agents, and browsing assistants continue evolving. The convergence of artificial intelligence and web browsing creates both opportunities and risks that will define the next generation of enterprise security challenges.

For comprehensive protection against Arc Max vulnerabilities and other AI browsing risks, organizations should explore LayerX’s AI Browser Protection platform, which provides the visibility, control, and intelligence needed to secure AI-powered workflows across any browser, device, and identity.